We prove that, under low-noise assumptions, the support vector machine with $N\ll m$ random features (RFSVM) can achieve a learning rate faster than $O(1/\sqrt{m})$ on a training set with $m$ samples when an optimized feature map is used. Our work extends previous fast-rate analyses of the random features method from the least-squares loss to the 0-1 loss. We also show, in experiments on a synthetic data set, that the reweighted feature selection method, which approximates the optimized feature map, helps improve the performance of RFSVM.
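As a minimal sketch of what an RFSVM is, assuming the Gaussian kernel $k(x,y)=\exp(-\gamma\lVert x-y\rVert^2)$ and the random Fourier feature construction of Rahimi and Recht, the code below maps each sample to $N$ random cosine features, trains a linear SVM on those features with Pegasos-style stochastic subgradient descent, and reports the training 0-1 error. The features are drawn from the kernel's plain spectral distribution, not from an optimized feature map, and the reweighted feature selection step is not implemented. The helper names (`make_rff`, `train_pegasos`, `zero_one_error`, `xor_blobs`), the XOR toy data, and all parameter values are hypothetical choices for illustration.

```python
import math
import random


def make_rff(dim, n_features, gamma, seed=0):
    """Random Fourier feature map z(x) in R^N whose inner products approximate
    the Gaussian kernel k(x, y) = exp(-gamma * ||x - y||^2) in expectation."""
    rng = random.Random(seed)
    std = math.sqrt(2.0 * gamma)  # spectral density of this kernel is N(0, 2*gamma*I)
    omegas = [[rng.gauss(0.0, std) for _ in range(dim)] for _ in range(n_features)]
    phases = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_features)]
    scale = math.sqrt(2.0 / n_features)

    def z(x):
        return [scale * math.cos(sum(o * xi for o, xi in zip(om, x)) + b)
                for om, b in zip(omegas, phases)]

    return z


def train_pegasos(feats, labels, lam=0.01, epochs=20, seed=0):
    """Pegasos (stochastic subgradient descent) on the primal linear SVM objective
    lam/2 * ||w||^2 + (1/m) * sum_i max(0, 1 - y_i <w, z_i>), with no bias term."""
    rng = random.Random(seed)
    w = [0.0] * len(feats[0])
    order = list(range(len(feats)))
    t = 0
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            t += 1
            eta = 1.0 / (lam * t)
            z, y = feats[i], labels[i]
            # Margin is evaluated with the current w, before the update.
            violated = y * sum(a * b for a, b in zip(w, z)) < 1.0
            w = [(1.0 - eta * lam) * a for a in w]  # regularizer step
            if violated:  # hinge-loss subgradient step
                w = [a + eta * y * b for a, b in zip(w, z)]
    return w


def zero_one_error(w, feats, labels):
    """Fraction of samples where sign(<w, z>) disagrees with the label (0-1 loss)."""
    wrong = sum(1 for z, y in zip(feats, labels)
                if (1 if sum(a * b for a, b in zip(w, z)) >= 0 else -1) != y)
    return wrong / len(labels)


def xor_blobs(m, seed=1):
    """Hypothetical toy data: four Gaussian blobs with XOR-style labels,
    which no linear classifier in the raw 2-D input space can separate."""
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(m):
        cx, cy = rng.choice([-1.0, 1.0]), rng.choice([-1.0, 1.0])
        xs.append([rng.gauss(cx, 0.25), rng.gauss(cy, 0.25)])
        ys.append(1 if cx * cy > 0 else -1)
    return xs, ys


if __name__ == "__main__":
    X, Y = xor_blobs(m=400)
    z = make_rff(dim=2, n_features=100, gamma=1.0)  # N = 100 features, m = 400 samples
    Z = [z(x) for x in X]
    w = train_pegasos(Z, Y)
    print("training 0-1 error:", zero_one_error(w, Z, Y))
```

The linear SVM only ever sees the $N$-dimensional feature vectors, so each training step costs $O(N)$ instead of requiring kernel evaluations against other training samples; this is the computational motivation for keeping $N\ll m$.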

Related content

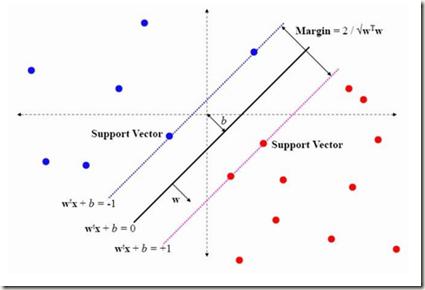

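In machine learning, support vector machines (SVMs, also called support-vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis; they are widely used for both kinds of problems. Given a set of training examples, each labeled as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist for using SVMs in a probabilistic classification setting). An SVM model represents the examples as points in space, mapped so that the examples of the separate categories are divided by a clear gap that is as wide as possible. New examples are then mapped into the same space and predicted to belong to a category according to the side of the gap on which they fall.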
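The mechanism described above (separate the two categories by the widest possible gap, then label a new point according to the side of the gap on which it falls) can be sketched for a linear SVM as follows. This is a minimal illustration, assuming full-batch subgradient descent on the soft-margin primal objective; the function names, step size, regularization weight, and six-point data set are hypothetical, and production solvers such as LIBSVM instead optimize the dual problem.

```python
import math


def train_linear_svm(points, labels, lam=0.01, epochs=500, lr=0.1):
    """Full-batch subgradient descent on the soft-margin primal objective
    lam/2 * ||w||^2 + (1/m) * sum_i max(0, 1 - y_i * (<w, x_i> + b))."""
    dim, m = len(points[0]), len(points)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        gw = [lam * wj for wj in w]  # gradient of the regularizer (b is not regularized)
        gb = 0.0
        for x, y in zip(points, labels):
            if y * (sum(wj * xj for wj, xj in zip(w, x)) + b) < 1.0:
                # Point lies inside the margin or on the wrong side: hinge subgradient.
                gw = [g - y * xj / m for g, xj in zip(gw, x)]
                gb -= y / m
        w = [wj - lr * g for wj, g in zip(w, gw)]
        b -= lr * gb
    return w, b


def predict(w, b, x):
    """Assign x to a category according to which side of the hyperplane it falls on."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) + b >= 0 else -1


def margin_width(w):
    """Width 2 / ||w|| of the gap between the hyperplanes <w, x> + b = +1 and -1."""
    return 2.0 / math.sqrt(sum(wj * wj for wj in w))


if __name__ == "__main__":
    pts = [[2.0, 2.0], [3.0, 3.0], [2.0, 3.0], [-2.0, -2.0], [-3.0, -3.0], [-3.0, -2.0]]
    ys = [1, 1, 1, -1, -1, -1]
    w, b = train_linear_svm(pts, ys)
    print("w =", w, "b =", b, "gap width =", margin_width(w))
    print("prediction for (1, 4):", predict(w, b, [1.0, 4.0]))
```

For these six points the widest gap lies between $(2,2)$ and $(-2,-2)$, a width of $\sqrt{32}\approx 5.66$. With a constant step size the subgradient iterate keeps oscillating around the optimum instead of settling on it, so `margin_width(w)` ends up near, but generally not exactly at, that value.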
