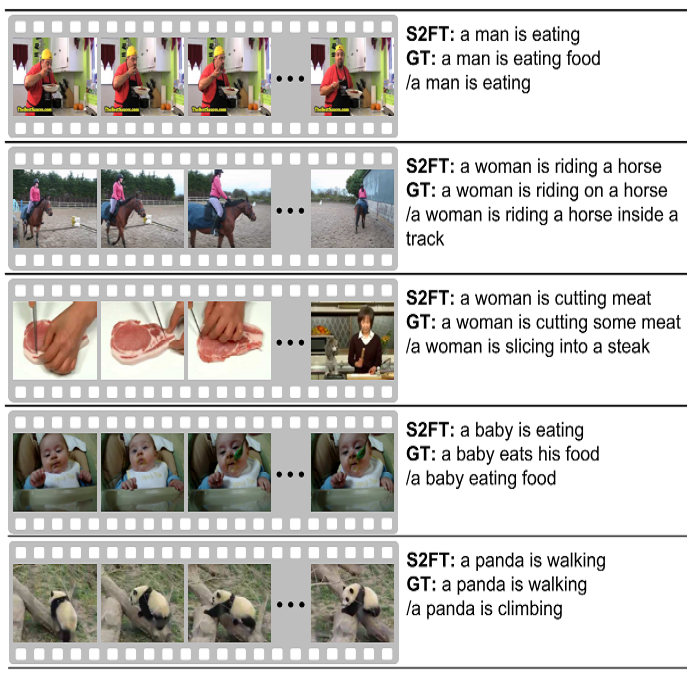

Video captioning and summarization have become very popular in recent years due to advances in sequence modelling, notably the resurgence of Long Short-Term Memory networks (LSTMs) and the introduction of Gated Recurrent Units (GRUs). Existing architectures extract spatio-temporal features using CNNs and use either GRUs or LSTMs, together with soft attention layers, to model temporal dependencies. These attention layers help the model focus on the most prominent features and improve on plain recurrent units. However, such models still suffer from the inherent drawbacks of the recurrent units themselves, such as strictly sequential computation, which limits parallelization, and difficulty in capturing long-range dependencies. The introduction of the Transformer model has driven the sequence modelling field in a new direction. In this project, we implement a Transformer-based model for video captioning, using 3D CNN architectures such as C3D and Two-stream I3D for video feature extraction. We also apply dimensionality reduction techniques to keep the overall size of the model within limits. Finally, we present our results on the MSVD and ActivityNet datasets for the single and dense video captioning tasks, respectively.
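The abstract does not spell out the model's internals. The pure-Python sketch below is only a rough illustration of the core idea. It projects a sequence of per-clip features to a smaller dimension with a linear layer, then applies one head of scaled dot-product self-attention, the operation at the heart of a Transformer encoder. The clip features are random stand-ins for C3D or I3D outputs. The linear projection is an assumed form of dimensionality reduction and not necessarily the technique used in this project. The weights are random placeholders rather than trained parameters, and all function names and sizes are hypothetical.

```python
import math
import random


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as lists of lists."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    return [list(row) for row in zip(*m)]


def softmax(row):
    # Subtract the max for numerical stability before exponentiating.
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, rng):
    # Placeholder for learned weights; a trained model would learn these values.
    scale = 1.0 / math.sqrt(rows)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def self_attention(features, d_model, rng):
    """Hypothetical helper: reduce T x D clip features to d_model dims, then self-attend.

    Returns (T x d_model attended outputs, T x T attention weights).
    """
    d_in = len(features[0])
    # Assumed dimensionality reduction: a single linear projection D -> d_model.
    w_proj = random_matrix(d_in, d_model, rng)
    x = matmul(features, w_proj)
    # Query, key and value projections for one attention head.
    w_q, w_k, w_v = (random_matrix(d_model, d_model, rng) for _ in range(3))
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    # Every clip scores every other clip in one matrix product (no recurrence).
    scores = matmul(q, transpose(k))
    scale = math.sqrt(d_model)
    weights = [softmax([s / scale for s in row]) for row in scores]
    return matmul(weights, v), weights


# Hypothetical sizes: 6 clips with 64-d features, reduced to 16 dims.
rng = random.Random(0)
T, D, d_model = 6, 64, 16
clip_features = [[rng.gauss(0.0, 1.0) for _ in range(D)] for _ in range(T)]
outputs, weights = self_attention(clip_features, d_model, rng)
print(len(outputs), len(outputs[0]))  # 6 16
```

Unlike an LSTM or GRU, which must step through the clips one at a time, each clip here attends to all the others in a single matrix product. This removes the sequential bottleneck that the abstract attributes to recurrent units.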
