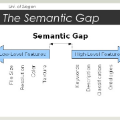

Previous approaches to singer identification have used either monophonic vocal tracks or mixed tracks containing multiple instruments, leaving a semantic gap between these two audio domains. In this paper, we present a system that learns a joint embedding space of monophonic and mixed tracks for singing voice. We use a metric learning method, which ensures that tracks of the same singer, regardless of domain, are mapped closer to each other than to tracks of different singers. We train the system on a large synthetic dataset generated by music mashup to reflect real-world music recordings. Our approach opens up new possibilities for cross-domain tasks, e.g., given a monophonic track of a singer as a query, retrieving mixed tracks sung by the same singer from a database. It also requires no additional vocal enhancement steps such as source separation. We show the effectiveness of our system for singer identification and query-by-singer in both same-domain and cross-domain tasks.
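As a rough illustration of the metric learning idea and the cross-domain query described above, the following minimal sketch uses a triplet hinge loss over cosine distance. The abstract does not specify the loss, the distance, or the margin, so all three are assumptions here, and the function names and the toy 3-D embeddings are hypothetical stand-ins for the outputs of a trained encoder.

```python
import math


def cosine_distance(u, v):
    """Return 1 - cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def triplet_loss(anchor, positive, negative, margin=0.3):
    """Hinge loss that reaches zero once the positive is closer to the
    anchor than the negative by at least `margin` (assumed value)."""
    gap = cosine_distance(anchor, positive) - cosine_distance(anchor, negative)
    return max(0.0, gap + margin)


def query_by_singer(query, database):
    """Rank (singer, embedding) entries by ascending distance to the query."""
    return sorted(database, key=lambda item: cosine_distance(query, item[1]))


# Hypothetical embeddings; a real system would compute these from audio.
mono_a = [1.0, 0.1, 0.0]   # monophonic track, singer A
mixed_a = [0.9, 0.2, 0.1]  # mixed track, singer A
mixed_b = [0.0, 1.0, 0.2]  # mixed track, singer B

# Cross-domain triplet: monophonic anchor, mixed positive and negative.
loss = triplet_loss(mono_a, mixed_a, mixed_b)

# Cross-domain retrieval: monophonic query against a database of mixed tracks.
ranking = query_by_singer(mono_a, [("B", mixed_b), ("A", mixed_a)])
print(loss, [singer for singer, _ in ranking])
```

In this toy setup the triplet already satisfies the margin, so the loss is zero and singer A's mixed track ranks first for the monophonic query. Swapping the positive and the negative gives a positive loss, which is the signal that would pull same-singer tracks from the two domains together during training.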
