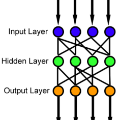

We propose a modular extension of the backpropagation algorithm for computing the block diagonal of the training objective's Hessian at various levels of refinement. The approach compartmentalizes the otherwise tedious construction of the Hessian into local modules. It is applicable to feedforward neural network architectures and can be integrated into existing machine learning libraries with relatively little overhead, facilitating the development of novel second-order optimization methods. Our formulation subsumes several recently proposed block-diagonal approximation schemes as special cases. Our PyTorch implementation is included with the paper.
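
To make the modular idea concrete, here is a minimal NumPy sketch. It is not the paper's PyTorch implementation. It assumes a single input sample, a chain `x -> Linear -> Sigmoid -> Linear`, and the loss `0.5 * ||f(x) - y||^2`. The class names `Linear`, `Sigmoid` and the function `hbp` are hypothetical. Each module receives the gradient and Hessian of the loss with respect to its output and returns them with respect to its input. Linear modules also store the Hessian block of their own weights and biases, so the blocks are assembled locally while the backward pass runs.

```python
import numpy as np

# Minimal sketch (not the paper's implementation): modular Hessian
# backpropagation for ONE sample through x -> Linear -> Sigmoid -> Linear,
# with loss 0.5 * ||f(x) - y||^2. Every module maps the gradient g and
# Hessian H of the loss w.r.t. its output to those w.r.t. its input.


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class Linear:
    """Hypothetical module z = W x + b; also stores its parameter Hessian blocks."""

    def __init__(self, W, b):
        self.W, self.b = W, b

    def forward(self, x):
        self.x = x  # cache the input for the backward pass
        return self.W @ x + self.b

    def backward(self, g_out, H_out):
        # z is affine in (W, b), so the parameter blocks carry no residual term.
        # With row-major vec(W): H_W = H_out kron (x x^T), H_b = H_out.
        self.H_W = np.kron(H_out, np.outer(self.x, self.x))
        self.H_b = H_out
        # Affine in x as well: H_in = W^T H_out W.
        return self.W.T @ g_out, self.W.T @ H_out @ self.W


class Sigmoid:
    """Hypothetical elementwise module; residual=False drops second-derivative terms."""

    def __init__(self, residual=True):
        self.residual = residual

    def forward(self, z):
        self.s = sigmoid(z)
        return self.s

    def backward(self, g_out, H_out):
        d1 = self.s * (1.0 - self.s)    # sigma'(z)
        d2 = d1 * (1.0 - 2.0 * self.s)  # sigma''(z)
        # diag(d1) H_out diag(d1): curvature passed back through the Jacobian.
        H_in = d1[:, None] * H_out * d1[None, :]
        if self.residual:
            # The exact Hessian adds the module's own curvature weighted by g_out.
            H_in = H_in + np.diag(d2 * g_out)
        return d1 * g_out, H_in


def hbp(modules, x, y):
    """Forward pass, then backpropagate gradient and Hessian module by module."""
    z = x
    for m in modules:
        z = m.forward(z)
    g, H = z - y, np.eye(z.size)  # gradient and Hessian of the loss w.r.t. output
    for m in reversed(modules):
        g, H = m.backward(g, H)
    return g, H  # w.r.t. the network input


rng = np.random.default_rng(0)
net = [Linear(rng.normal(size=(4, 3)), rng.normal(size=4)), Sigmoid(),
       Linear(rng.normal(size=(2, 4)), rng.normal(size=2))]
hbp(net, rng.normal(size=3), rng.normal(size=2))
print(net[0].H_W.shape)  # (12, 12): Hessian block of the 4x3 weight matrix
```

For a single sample in this chain, `net[0].H_W` is the exact Hessian block of the first weight matrix. Building the network with `Sigmoid(residual=False)` drops the activation's second-derivative term. This yields the generalized Gauss-Newton block instead, one example of how coarser curvature approximations can come out of the same module interface.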
