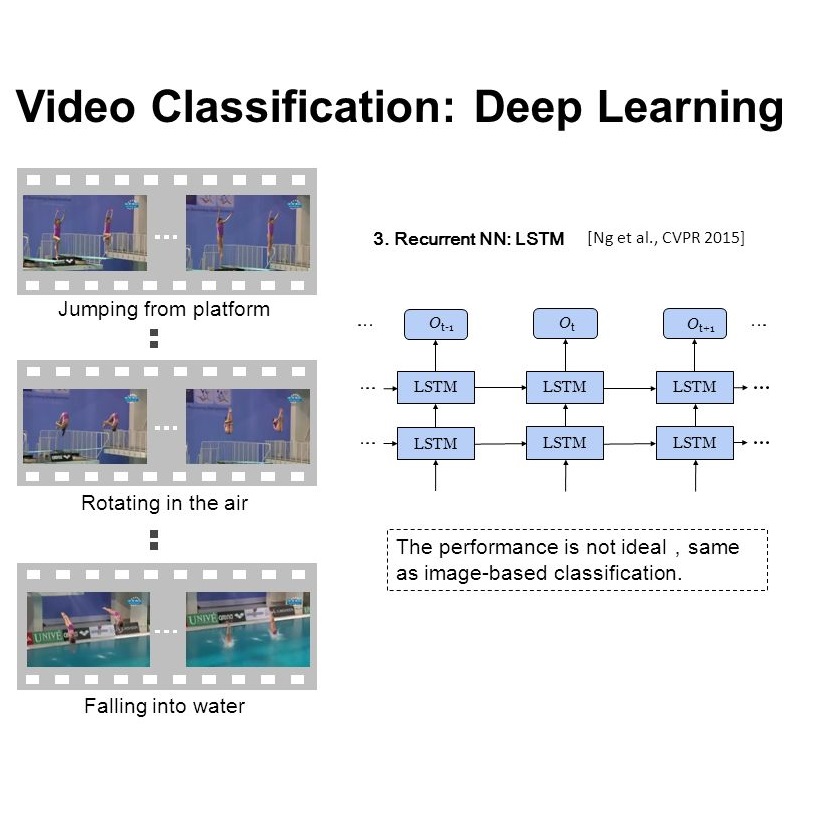

Videos have become ubiquitous on the Internet, and video analysis can provide rich information for detecting and recognizing objects, as well as for understanding human actions and interactions with the real world. However, processing data at the terabyte scale requires efficient methods. Recurrent neural network (RNN) architectures have been widely used for sequential learning problems such as language modeling and time-series analysis. In this paper, we propose several RNN variants, such as stacked bidirectional LSTM/GRU networks with an attention mechanism, to classify large-scale video data. We also explore different multimodal fusion methods. Our model combines visual and audio information at both the video level and the frame level and achieves strong results. Ensemble methods are also applied. Because of its multimodal nature, we call this method Deep Multimodal Learning (DML). Our DML-based model was trained on Google Cloud and on our own server, and was evaluated in a well-known video classification competition hosted by Google on Kaggle.
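To make the two central ideas of the abstract concrete, the following is a minimal sketch of attention pooling over frame-level features followed by concatenation-based fusion of the visual and audio modalities. It is an illustration under stated assumptions, not the authors' implementation: the function names, the toy feature values, and the fixed `query` vectors (which stand in for learned attention parameters) are all hypothetical, and the stacked bidirectional LSTM/GRU layers that would normally produce the per-frame vectors are omitted.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(frames, query):
    """Collapse T per-frame vectors into one vector.

    frames: list of T vectors, each of length D (in a full model these
            would be recurrent hidden states; here they are raw toy features).
    query:  vector of length D; a hypothetical stand-in for a learned
            attention parameter.
    Returns the attention-weighted average and the weights themselves.
    """
    # Dot-product score for each frame.
    scores = [sum(f_i * q_i for f_i, q_i in zip(f, query)) for f in frames]
    weights = softmax(scores)
    dim = len(frames[0])
    pooled = [sum(w * f[d] for w, f in zip(weights, frames)) for d in range(dim)]
    return pooled, weights


def fuse(visual_vec, audio_vec):
    """Feature-level fusion by concatenation (one of several possible schemes)."""
    return list(visual_vec) + list(audio_vec)


# Toy input: 3 frames, 2-dimensional visual and 1-dimensional audio features.
visual_frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
audio_frames = [[0.5], [0.1], [0.9]]

visual_vec, visual_weights = attention_pool(visual_frames, [1.0, 1.0])
audio_vec, audio_weights = attention_pool(audio_frames, [1.0])
video_vec = fuse(visual_vec, audio_vec)  # input to a downstream classifier
```

In this sketch each modality is pooled separately and the results are concatenated; fusing per-frame features before pooling would be an equally plausible reading of "multimodal fusion", and the abstract does not specify which variant is used.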
