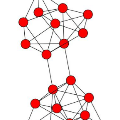

Most existing word embedding approaches do not distinguish occurrences of the same word in different contexts and therefore ignore its contextual meanings. As a result, the learned embedding of such a word is usually a mixture of multiple meanings. In this paper, we acknowledge that the same word has multiple identities in different contexts and learn identity-sensitive word embeddings. Based on an identity-labeled text corpus, a heterogeneous network of words and word identities is constructed to model different levels of word co-occurrence. The heterogeneous network is then embedded into a low-dimensional space with a principled network embedding approach, which yields embeddings of both words and word identities. We study three types of word identities: topics, sentiments, and categories. Experimental results on real-world data sets show that the identity-sensitive word embeddings learned by our approach indeed capture different meanings of words and outperform competitive methods on tasks including text classification and word similarity computation.
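To make the network construction step concrete, the following is a minimal sketch of how word-word and word-identity co-occurrence edges might be counted from an identity-labeled corpus. It is an illustration only, not the paper's implementation: the function name `build_heterogeneous_network`, the per-token label format, the `window` parameter, and the toy corpus are all assumptions, and the subsequent network embedding step is not shown.

```python
from collections import Counter


def build_heterogeneous_network(labeled_docs, window=2):
    """Count edges of a word / word-identity co-occurrence network.

    labeled_docs: list of documents, each a list of (word, identity) pairs.
    This per-token label format is an assumption made for illustration.
    Returns two Counters of edge weights:
      word_word[(w1, w2)]        co-occurrences within the context window
      word_identity[(word, id)]  how often a word appears with an identity
    """
    word_word = Counter()
    word_identity = Counter()
    for doc in labeled_docs:
        words = [w for w, _ in doc]
        for i, (word, identity) in enumerate(doc):
            # Edge between a word and the identity it is labeled with.
            word_identity[(word, identity)] += 1
            # Undirected edges to the next `window` words in the document.
            for other in words[i + 1 : i + 1 + window]:
                if other != word:
                    word_word[tuple(sorted((word, other)))] += 1
    return word_word, word_identity


# Toy corpus (hypothetical): "apple" appears under two different identities.
corpus = [
    [("apple", "tech"), ("releases", "tech"), ("phone", "tech")],
    [("apple", "food"), ("pie", "food"), ("recipe", "food")],
]
word_word, word_identity = build_heterogeneous_network(corpus, window=1)
```

In this toy example, "apple" receives one edge to the identity "tech" and another to "food", so a network embedding method applied to these edges could place the two uses of the word differently.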
