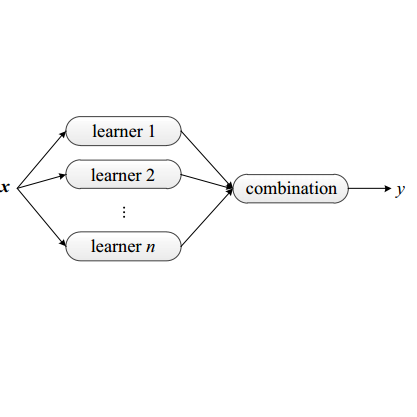

Ensemble learning is a mainstay in modern data science practice. Conventional ensemble algorithms assign to base models a set of deterministic, constant model weights that (1) do not fully account for variations in base model accuracy across subgroups, and (2) do not provide uncertainty estimates for the ensemble prediction. This can result in mis-calibrated (i.e., precise but biased) predictions that in turn degrade the algorithm's performance in real-world applications. In this work, we present an adaptive, probabilistic approach to ensemble learning that uses a dependent tail-free process as the ensemble weight prior. Given an input feature $\mathbf{x}$, our method optimally combines base models based on their predictive accuracy in the feature space $\mathbf{x} \in \mathcal{X}$, and provides interpretable uncertainty estimates both in model selection and in ensemble prediction. To encourage scalable and calibrated inference, we derive a structured variational inference algorithm that jointly minimizes the KL objective and the model's calibration score (i.e., the Continuous Ranked Probability Score (CRPS)). We illustrate the utility of our method on both a synthetic nonlinear function regression task and the real-world application of spatio-temporal integration of particle pollution prediction models in New England.
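
As a rough illustration of the calibration score mentioned above, the following sketch evaluates the CRPS of a Gaussian predictive distribution using its standard closed form. It is not the paper's inference algorithm: the function name `crps_gaussian` and the assumption that the predictive distribution is Gaussian are ours, chosen only to show what the score measures.

```python
import math


def crps_gaussian(mu: float, sigma: float, y: float) -> float:
    """CRPS of a N(mu, sigma^2) forecast against observation y.

    Assumption (not from the source): the predictive distribution is
    Gaussian, so the closed form
        sigma * (z * (2 * Phi(z) - 1) + 2 * phi(z) - 1 / sqrt(pi))
    applies, with z = (y - mu) / sigma. Lower values are better.
    """
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    z = (y - mu) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)  # phi(z)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))         # Phi(z)
    return sigma * (z * (2.0 * cdf - 1.0) + 2.0 * pdf - 1.0 / math.sqrt(math.pi))


# Two forecasts with the same bias but different stated uncertainty.
overconfident = crps_gaussian(mu=0.0, sigma=0.1, y=1.0)
calibrated = crps_gaussian(mu=0.0, sigma=1.0, y=1.0)
```

In this toy comparison, the forecast that is precise but biased (`sigma=0.1`) receives a worse (larger) score than the one whose spread matches its error, which is the kind of behavior a calibration-aware objective is meant to penalize.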
