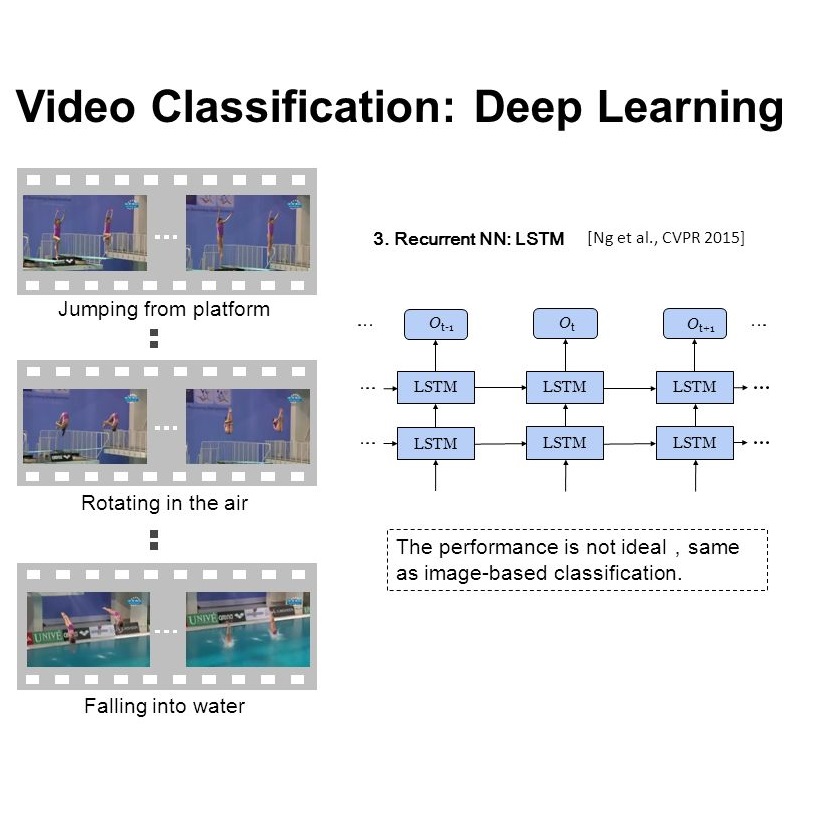

There have been many efforts to attack image classification models with adversarial perturbations, but the same problem for video classification has not yet been thoroughly studied. This paper presents a novel video-based attack that appends a few dummy frames (e.g., containing the text "thanks for watching") to a video clip and then adds adversarial perturbations only to these new frames. Our approach enjoys three major benefits: a high success rate, low perceptibility, and strong transferability across different networks. These benefits mostly come from the common dummy frame, which pushes all samples towards the classification boundary. In addition, such attacks are easy to conceal, since most people would not notice anything abnormal in the perturbed video clips. We perform experiments on two popular datasets with six state-of-the-art video classification models and demonstrate the effectiveness of our approach in the universal video attack setting.
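The core construction (leave the original clip untouched, append dummy frames, and perturb only those frames) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: frames are plain nested lists of grayscale values in [0, 1] standing in for tensors, the names `perturb_frame` and `append_adversarial_frames` are hypothetical, and the L-infinity bound `epsilon` is an assumed constraint that the abstract does not specify. The sketch also takes the perturbations as given; in an actual attack they would be optimized against the target classifier.

```python
from typing import List

Frame = List[List[float]]  # one grayscale frame: rows of pixel values in [0, 1]


def clip(value: float, low: float, high: float) -> float:
    """Clamp value to the closed interval [low, high]."""
    return max(low, min(high, value))


def perturb_frame(frame: Frame, delta: Frame, epsilon: float) -> Frame:
    """Add delta to frame, bounding each change by epsilon (assumed
    L-infinity budget) and keeping every pixel inside [0, 1]."""
    return [
        [clip(p + clip(d, -epsilon, epsilon), 0.0, 1.0) for p, d in zip(row, drow)]
        for row, drow in zip(frame, delta)
    ]


def append_adversarial_frames(
    video: List[Frame],
    dummy_frame: Frame,
    deltas: List[Frame],
    epsilon: float,
) -> List[Frame]:
    """Return a new clip: the original frames, unchanged, followed by one
    perturbed copy of dummy_frame per entry in deltas."""
    original = [[row[:] for row in frame] for frame in video]  # copy, do not modify
    appended = [perturb_frame(dummy_frame, delta, epsilon) for delta in deltas]
    return original + appended


if __name__ == "__main__":
    clip_frames = [[[0.5, 0.5], [0.5, 0.5]] for _ in range(3)]  # 3-frame toy clip
    dummy = [[0.0, 1.0], [0.2, 0.8]]  # stands in for a "thanks for watching" frame
    deltas = [[[0.5, 0.5], [-0.5, 0.01]], [[0.0, 0.0], [0.0, 0.0]]]
    attacked = append_adversarial_frames(clip_frames, dummy, deltas, epsilon=0.1)
    print(len(attacked), "frames; the first", len(clip_frames), "are unmodified")
```

Because the same dummy frame and the same perturbations can be appended to any clip, this construction fits the universal attack setting mentioned in the abstract: nothing in the appended frames depends on the content of the original video.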
