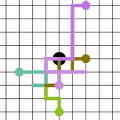

A range of video modeling tasks, from optical flow to multiple object tracking, share the same fundamental challenge: establishing space-time correspondence. Yet the approaches that dominate each task differ. We take a step towards bridging this gap by extending the recent contrastive random walk formulation to much denser, pixel-level space-time graphs. The main contribution is introducing hierarchy into the search problem by computing the transition matrix between two frames in a coarse-to-fine manner, forming a multiscale contrastive random walk when extended in time. This establishes a unified technique for self-supervised learning of optical flow, keypoint tracking, and video object segmentation. Experiments demonstrate that, for each of these tasks, the unified model achieves performance competitive with strong self-supervised approaches specific to that task. Project site: https://jasonbian97.github.io/flowwalk
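The two ideas named in the abstract, a cycle-consistent random walk over frame-to-frame transition matrices and a coarse-to-fine computation of those transitions, can be illustrated with a small NumPy sketch. This is not the authors' implementation. All function names (`transition`, `cycle_loss`, `pool2`, `coarse_to_fine_flow`), the temperature value, the two-level pyramid, and the hard local search window around the upsampled coarse match are assumptions made for illustration only.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def transition(feat_a, feat_b, temperature=0.07):
    """Row-stochastic transition matrix from nodes of frame a to nodes of frame b.
    feat_*: (N, D) arrays of L2-normalized node embeddings."""
    return softmax(feat_a @ feat_b.T / temperature, axis=1)

def cycle_loss(feats, temperature=0.07):
    """Walk forward through the frames and back again (a palindrome);
    the loss is low when each node returns to where it started."""
    feats = list(feats)
    seq = feats + feats[-2::-1]
    walk = np.eye(feats[0].shape[0])
    for a, b in zip(seq[:-1], seq[1:]):
        walk = walk @ transition(a, b, temperature)
    return -np.mean(np.log(np.diag(walk) + 1e-12))

def pool2(x):
    """2x2 average pooling plus L2 normalization: (H, W, D) -> (H/2, W/2, D)."""
    H, W, D = x.shape
    y = x.reshape(H // 2, 2, W // 2, 2, D).mean(axis=(1, 3))
    return y / np.linalg.norm(y, axis=-1, keepdims=True)

def coarse_to_fine_flow(coarse_a, coarse_b, fine_a, fine_b,
                        radius=1, temperature=0.07):
    """Two-level sketch: all-pairs matching at the coarse level, then a local
    search at the fine level centered on the upsampled coarse match.
    Assumes the fine grid is exactly twice the coarse grid. Returns (H, W, 2)
    flow as (dy, dx)."""
    h, w, d = coarse_a.shape
    H, W, _ = fine_a.shape
    # Coarse level: expected target position under the full transition matrix.
    A = transition(coarse_a.reshape(-1, d), coarse_b.reshape(-1, d), temperature)
    ys, xs = np.mgrid[0:h, 0:w]
    coords = np.stack([ys.ravel(), xs.ravel()], axis=1).astype(float)
    coarse_flow = (A @ coords - coords).reshape(h, w, 2)
    # Nearest-neighbour upsampling; displacements double with the resolution.
    up = 2.0 * np.repeat(np.repeat(coarse_flow, 2, axis=0), 2, axis=1)
    offs = np.arange(-radius, radius + 1)
    dy, dx = np.meshgrid(offs, offs, indexing="ij")
    fine_flow = np.zeros((H, W, 2))
    for y in range(H):
        for x in range(W):
            cy, cx = np.rint(np.array([y, x]) + up[y, x]).astype(int)
            # Candidates are clipped to the image, so border pixels may see
            # duplicated candidates; acceptable for a sketch.
            cand_y = np.clip(cy + dy.ravel(), 0, H - 1)
            cand_x = np.clip(cx + dx.ravel(), 0, W - 1)
            p = softmax(fine_b[cand_y, cand_x] @ fine_a[y, x] / temperature)
            fine_flow[y, x] = [p @ cand_y - y, p @ cand_x - x]
    return fine_flow

# Toy data: frame b is frame a shifted two pixels to the right (with wrap-around).
rng = np.random.default_rng(0)
fine_a = rng.normal(size=(16, 16, 64))
fine_a /= np.linalg.norm(fine_a, axis=-1, keepdims=True)
fine_b = np.roll(fine_a, shift=2, axis=1)
coarse_a, coarse_b = pool2(fine_a), pool2(fine_b)

flow = coarse_to_fine_flow(coarse_a, coarse_b, fine_a, fine_b)
loss_matched = cycle_loss([coarse_a.reshape(-1, 64), coarse_b.reshape(-1, 64)])

noise = rng.normal(size=(64, 64))
noise /= np.linalg.norm(noise, axis=-1, keepdims=True)
loss_random = cycle_loss([coarse_a.reshape(-1, 64), noise])
```

On this toy input, `flow` away from the wrap-around columns should recover the two-pixel horizontal shift, and `loss_matched` should be lower than `loss_random`. The point of the hierarchy in this sketch is cost: the fine level compares each pixel against only `(2 * radius + 1) ** 2` candidates instead of every pixel in the other frame.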
