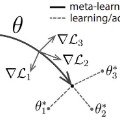

Most gradient-based approaches to meta-learning do not explicitly account for the fact that different parts of the underlying model adapt by different amounts when applied to a new task. For example, the input layers of an image classification convnet typically adapt very little, while the output layers can change significantly. This can cause parts of the model to begin to overfit while others underfit. To address this, we introduce a hierarchical Bayesian model with per-module shrinkage parameters, which we propose to learn by maximizing an approximation of the predictive likelihood using implicit differentiation. Our algorithm subsumes Reptile and outperforms variants of MAML on two synthetic few-shot meta-learning problems.
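The effect of per-module shrinkage can be illustrated with a minimal sketch. The abstract does not spell out the model, so everything here is an assumption made for illustration: a Gaussian prior centered on the meta-parameters `phi` with one variance `sigma2` per module, a toy quadratic task loss, and the hypothetical names `adapt` and `grad_task_loss`. The variances are fixed by hand rather than learned by implicit differentiation as the abstract proposes.

```python
import numpy as np

def adapt(phi, sigma2, grad_task_loss, steps=1000, lr=0.01):
    """Gradient descent on a task loss plus a per-module shrinkage penalty.

    Assumed objective (illustrative, not taken from the paper):
        loss(theta) + sum_m ||theta_m - phi_m||^2 / (2 * sigma2_m)
    """
    theta = {name: value.copy() for name, value in phi.items()}
    for _ in range(steps):
        grads = grad_task_loss(theta)
        for name in theta:
            # Gradient of the shrinkage penalty pulls theta_m back toward phi_m.
            prior_grad = (theta[name] - phi[name]) / sigma2[name]
            theta[name] -= lr * (grads[name] + prior_grad)
    return theta

# Toy setup: two modules with identical task gradients but different shrinkage.
phi = {"input": np.zeros(3), "output": np.zeros(3)}
target = {"input": np.ones(3), "output": np.ones(3)}
sigma2 = {"input": 0.01, "output": 100.0}  # small variance = strong shrinkage

def grad_task_loss(theta):
    # Gradient of the toy loss 0.5 * ||theta_m - target_m||^2 for each module.
    return {name: theta[name] - target[name] for name in theta}

theta = adapt(phi, sigma2, grad_task_loss)
shift = {name: float(np.linalg.norm(theta[name] - phi[name])) for name in theta}
print(shift)
```

In this toy problem the strongly shrunk `input` module stays close to its meta-parameters while the weakly shrunk `output` module moves almost all the way to the task optimum, mirroring the convnet example in the abstract.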
