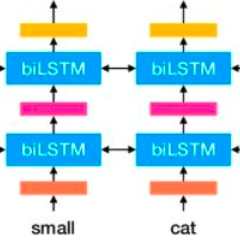

Evaluating model robustness is critical when developing trustworthy models, not only to gain a deeper understanding of model behavior, strengths, and weaknesses, but also to develop future models that are generalizable and robust across the environments a model may encounter in deployment. In this paper we present a framework for measuring model robustness for an important but difficult text classification task: deceptive news detection. We evaluate model robustness to out-of-domain data, modality-specific features, and languages other than English. Our investigation focuses on three types of models: LSTM models trained on multiple datasets (Cross-Domain); several fusion LSTM models trained with images and text and evaluated with three state-of-the-art embeddings, BERT, ELMo, and GloVe (Cross-Modality); and character-level CNN models trained on multiple languages (Cross-Language). Our analyses reveal a significant drop in performance when testing neural models on out-of-domain data and non-English languages, a drop that may be mitigated by using diverse training data. We find that with additional image content as input, ELMo embeddings yield significantly fewer errors than BERT or GloVe. Most importantly, this work not only carefully analyzes deception model robustness but also provides a framework for these analyses that can be applied to new models or extended datasets in the future.
