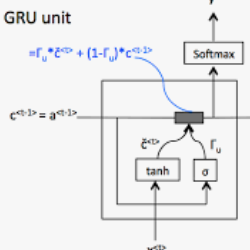

This paper describes team VAA's approach submitted to the Fashion-IQ challenge at CVPR 2020. Given an image-text pair, we present a novel multimodal composition method, RTIC, that can effectively combine the text and image modalities into a semantic space. We extract image and text features encoded by CNNs and sequential models (e.g., LSTM or GRU), respectively. To emphasize the semantics of the feature residual between the target and candidate images, RTIC is composed of N blocks with channel-wise attention modules. We then add the encoded residual to the feature of the candidate image to obtain a synthesized feature. We also explored an ensemble strategy over model variants, which achieved a significant performance boost compared to the best single model. Finally, our approach placed 2nd in the Fashion-IQ 2020 Challenge with a test score of 48.02 on the leaderboard.
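The abstract describes the composition only at a high level: encode a residual from the candidate-image and text features with N channel-attention blocks, then add that residual to the candidate-image feature. The sketch below illustrates that data flow in NumPy under explicit assumptions. Everything beyond the abstract is hypothetical: the concatenation-plus-projection fusion, the squeeze-and-excitation-style gate inside each block, the names `ChannelAttentionBlock` and `ResidualComposer`, the untrained random weights, and averaging cosine-similarity matrices as the ensemble rule. It is not the authors' implementation.

```python
import numpy as np


def relu(x):
    return np.maximum(x, 0.0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class ChannelAttentionBlock:
    """Hypothetical residual block with a squeeze-and-excitation-style
    channel-wise gate (assumed design; the abstract does not specify it)."""

    def __init__(self, dim, reduction, rng):
        hidden = max(dim // reduction, 1)
        self.w1 = rng.standard_normal((dim, dim)) / np.sqrt(dim)
        self.w2 = rng.standard_normal((dim, dim)) / np.sqrt(dim)
        self.wa = rng.standard_normal((hidden, dim)) / np.sqrt(dim)
        self.wb = rng.standard_normal((dim, hidden)) / np.sqrt(hidden)

    def __call__(self, h):
        # h: (batch, dim). Transform, then reweight each channel by a gate in (0, 1).
        z = relu(h @ self.w1.T) @ self.w2.T
        gate = sigmoid(relu(z @ self.wa.T) @ self.wb.T)
        return h + gate * z


class ResidualComposer:
    """Hypothetical composer: fuse image and text features, encode a residual
    with N attention blocks, and add it to the candidate-image feature."""

    def __init__(self, img_dim, txt_dim, n_blocks=2, reduction=4, seed=0):
        rng = np.random.default_rng(seed)
        d = img_dim + txt_dim
        self.w_in = rng.standard_normal((img_dim, d)) / np.sqrt(d)
        self.blocks = [ChannelAttentionBlock(img_dim, reduction, rng)
                       for _ in range(n_blocks)]
        self.w_out = rng.standard_normal((img_dim, img_dim)) / np.sqrt(img_dim)

    def __call__(self, img_feat, txt_feat):
        # Assumed fusion: concatenate, then project back to the image dimension.
        h = np.concatenate([img_feat, txt_feat], axis=-1) @ self.w_in.T
        for block in self.blocks:
            h = block(h)
        residual = h @ self.w_out.T
        return img_feat + residual  # synthesized query feature


def cosine_scores(queries, gallery):
    # (num_queries, dim) x (num_gallery, dim) -> (num_queries, num_gallery)
    q = queries / np.linalg.norm(queries, axis=-1, keepdims=True)
    g = gallery / np.linalg.norm(gallery, axis=-1, keepdims=True)
    return q @ g.T


def ensemble_scores(score_list):
    # Assumed ensemble rule: average the similarity matrices of model variants.
    return np.mean(np.stack(score_list), axis=0)


rng = np.random.default_rng(42)
img = rng.standard_normal((3, 16))      # stand-ins for CNN features of 3 candidates
txt = rng.standard_normal((3, 8))       # stand-ins for LSTM/GRU text features
gallery = rng.standard_normal((10, 16)) # stand-ins for target-image features

models = [ResidualComposer(16, 8, n_blocks=n, seed=n) for n in (1, 2, 3)]
scores = ensemble_scores([cosine_scores(m(img, txt), gallery) for m in models])
top1 = scores.argmax(axis=1)  # index of the best-matching gallery image per query
print(scores.shape, top1)
```

Adding the encoded residual to the candidate feature, rather than predicting the target feature from scratch, means each block only has to model the change the text requests; the model variants in the ensemble here differ only in block count and seed, which is an illustrative choice.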
