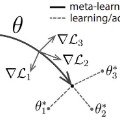

Neural networks are known to suffer from catastrophic forgetting when trained on sequential datasets. While there have been numerous attempts to solve this problem for large-scale supervised classification, little has been done to overcome catastrophic forgetting for few-shot classification problems. We demonstrate that the popular gradient-based few-shot meta-learning algorithm Model-Agnostic Meta-Learning (MAML) indeed suffers from catastrophic forgetting and introduce a Bayesian online meta-learning framework that tackles this problem. Our framework incorporates MAML into a Bayesian online learning algorithm with Laplace approximation. This framework enables few-shot classification on a range of sequentially arriving datasets with a single meta-learned model. The experimental evaluations demonstrate that our framework can effectively prevent forgetting in various few-shot classification settings compared to applying MAML sequentially.
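The abstract does not give the algorithm's details, so the following is only a minimal sketch of the general idea behind Bayesian online learning with a Laplace approximation, not the authors' implementation. It assumes a toy two-parameter linear model standing in for the meta-learned parameters, replaces MAML's inner/outer loop with plain gradient descent, and uses a diagonal Hessian as the posterior precision. All function and variable names (`fit`, `diag_hessian`, `task1`, `task2`) are hypothetical. After the first dataset, the posterior is approximated by a Gaussian centred at the fitted parameters; training on the second dataset then adds the quadratic penalty that this Gaussian induces.

```python
# Toy sketch (assumption-laden): sequential training with and without a
# diagonal Laplace penalty. A linear model stands in for the meta-parameters;
# this is NOT MAML and NOT the paper's algorithm.

def predict(theta, x):
    return sum(t * xi for t, xi in zip(theta, x))

def loss(data, theta):
    """Sum of 0.5 * squared error over a dataset of (x, y) pairs."""
    return sum(0.5 * (predict(theta, x) - y) ** 2 for x, y in data)

def diag_hessian(data, dim):
    """Diagonal of the Hessian of `loss` for a linear model: sum of x_j^2."""
    return [sum(x[j] ** 2 for x, _ in data) for j in range(dim)]

def fit(data, theta, mean=None, precision=None, lr=0.1, steps=2000):
    """Gradient descent on `loss`, plus an optional Laplace penalty
    0.5 * sum_j precision[j] * (theta[j] - mean[j])**2."""
    theta = list(theta)
    for _ in range(steps):
        grad = [0.0] * len(theta)
        for x, y in data:
            err = predict(theta, x) - y
            for j, xj in enumerate(x):
                grad[j] += err * xj
        if precision is not None:
            for j in range(len(theta)):
                grad[j] += precision[j] * (theta[j] - mean[j])
        theta = [t - lr * g for t, g in zip(theta, grad)]
    return theta

# Two sequentially arriving toy datasets (hypothetical values).
task1 = [((1.0, 0.0), 2.0)]   # constrains theta[0] only
task2 = [((1.0, 1.0), 1.0)]   # constrains theta[0] + theta[1]

theta1 = fit(task1, [0.0, 0.0])
precision1 = diag_hessian(task1, 2)   # Laplace precision after task 1

naive = fit(task2, theta1)                                     # sequential training
laplace = fit(task2, theta1, mean=theta1, precision=precision1)  # with penalty

print("task-1 loss, naive:  ", loss(task1, naive))
print("task-1 loss, Laplace:", loss(task1, laplace))
```

In this toy setting the unpenalized run moves `theta[0]` away from the value the first dataset needs, while the penalized run changes only the direction that the first dataset left unconstrained. With more than two datasets, the precision would be accumulated across datasets rather than recomputed from the latest one alone.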
