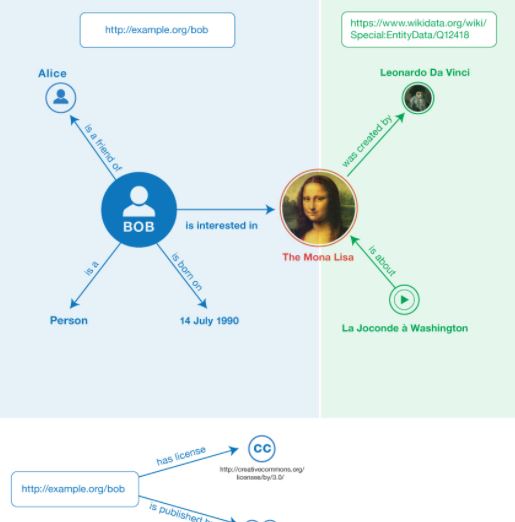

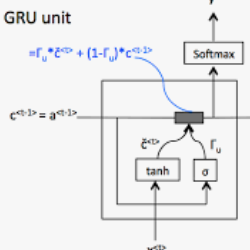

Traditionally, most data-to-text applications have been designed using a modular pipeline architecture, in which non-linguistic input data is converted into natural language through several intermediate transformations. In contrast, recent neural models for data-to-text generation have been proposed as end-to-end approaches, where the non-linguistic input is rendered in natural language with far fewer explicit intermediate representations. This study introduces a systematic comparison between neural pipeline and end-to-end data-to-text approaches for the generation of text from RDF triples. Both architectures were implemented using state-of-the-art deep learning methods, namely encoder-decoder models based on Gated Recurrent Units (GRU) and the Transformer. Automatic and human evaluations, together with a qualitative analysis, suggest that having explicit intermediate steps in the generation process results in better texts than those generated by end-to-end approaches. Moreover, the pipeline models generalize better to unseen inputs. Data and code are publicly available.
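To make the contrast between the two architectures concrete, the following is a minimal toy sketch, not the models evaluated in the study. The step names (`order_triples`, `lexicalize`, `realize`), the `TEMPLATES` table, and the linearization tags are all hypothetical and chosen only for illustration; in the neural setting each pipeline step would be a learned model rather than a hand-written rule.

```python
from typing import Callable, List, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)

# Hypothetical templates keyed by predicate; a stand-in for a learned step.
TEMPLATES = {
    "birthPlace": "{s} was born in {o}",
    "occupation": "{s} worked as a {o}",
}


def order_triples(triples: List[Triple]) -> List[Triple]:
    """Intermediate step 1: decide the order in which facts are verbalized."""
    rank = {pred: i for i, pred in enumerate(TEMPLATES)}
    return sorted(triples, key=lambda t: rank.get(t[1], len(rank)))


def lexicalize(triples: List[Triple]) -> List[str]:
    """Intermediate step 2: map each triple to a clause."""
    clauses = []
    for s, p, o in triples:
        template = TEMPLATES.get(p, "{s} " + p + " {o}")
        clauses.append(template.format(s=s.replace("_", " "), o=o.replace("_", " ")))
    return clauses


def realize(clauses: List[str]) -> str:
    """Intermediate step 3: turn clauses into surface sentences."""
    return " ".join(c[0].upper() + c[1:] + "." for c in clauses)


def pipeline(triples: List[Triple]) -> str:
    """Pipeline: every intermediate representation is explicit and inspectable."""
    return realize(lexicalize(order_triples(triples)))


def end_to_end(triples: List[Triple], model: Callable[[str], str]) -> str:
    """End-to-end: linearize the triples and hand them to one opaque model."""
    linearized = " ".join(f"<s> {s} <p> {p} <o> {o}" for s, p, o in triples)
    return model(linearized)


triples = [
    ("Alan_Turing", "occupation", "mathematician"),
    ("Alan_Turing", "birthPlace", "London"),
]
print(pipeline(triples))
```

In the pipeline variant each intermediate output can be inspected or evaluated on its own, whereas the end-to-end variant exposes only the linearized input and the final text.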
