Latest GAN Survey Paper: How Generative Adversarial Networks and Their Variants Work (41-Page PDF)

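[Overview] A recent issue of the leading computing journal ACM Computing Surveys (CSUR) includes a new survey of GANs with 146 references. The authors are faculty and students at the Data Science and AI Laboratory at Seoul National University, whose research focuses on deep learning and machine learning. The survey covers the principles and applications of GANs.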

Introduction

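Generative adversarial networks (GANs) have received wide attention in machine learning because of their potential to learn high-dimensional, complex real-world data distributions. Specifically, they do not rely on any assumptions about the distribution and can generate realistic samples from a latent space in a simple way. This property allows GANs to be applied to tasks such as image synthesis, image attribute editing, image translation, and domain adaptation, as well as to other academic fields. In the paper, the authors examine GANs from several angles. They explain how GANs operate and the underlying meaning of various recently proposed objective functions, then focus on how GANs can be combined with an autoencoder framework. Finally, they list GAN variants applied to various tasks and other fields, for readers interested in using GANs in their own research.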
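For reference, the objective that discussions of GAN objective functions usually start from is the minimax value function of the original GAN formulation (Goodfellow et al., 2014). The notation below ($G$, $D$, $p_{\text{data}}$, $p_z$) is the conventional one and is an assumption here, not taken from the article above:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

Here $z$ is a latent vector drawn from a simple prior $p_z$, $G(z)$ is a generated sample, and $D(\cdot)$ is the discriminator's estimated probability that its input is real. The discriminator tries to maximize $V$ while the generator tries to minimize it.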

The paper is divided into six sections.

Overview of the paper's structure

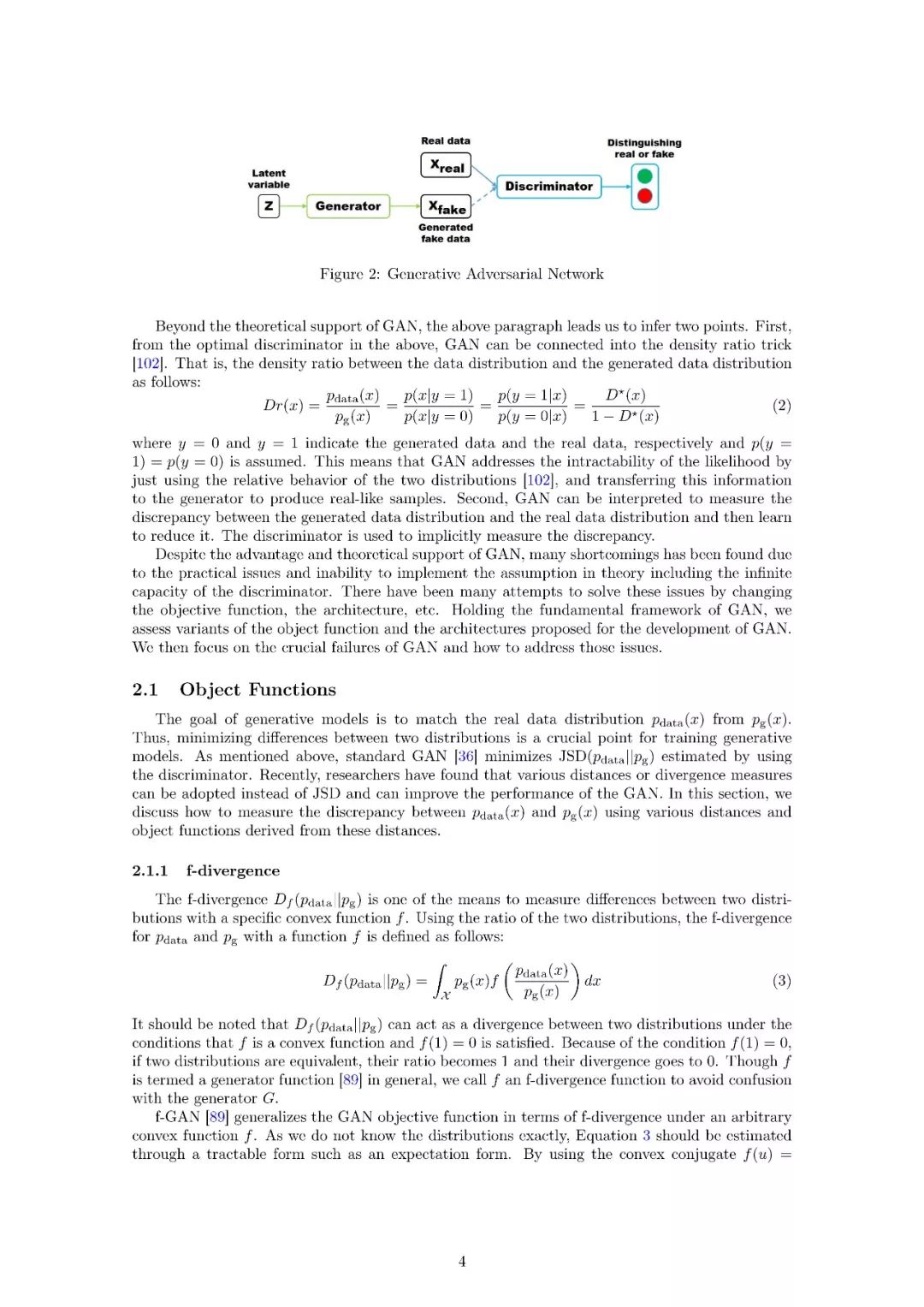

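The GAN objective function and how each of its components works; new objective functions proposed in recent years

How GANs are used to learn a latent space that holds a low-dimensional representation of the data

Practical application areas of GANs

Why GANs have advantages over other models

Conclusion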

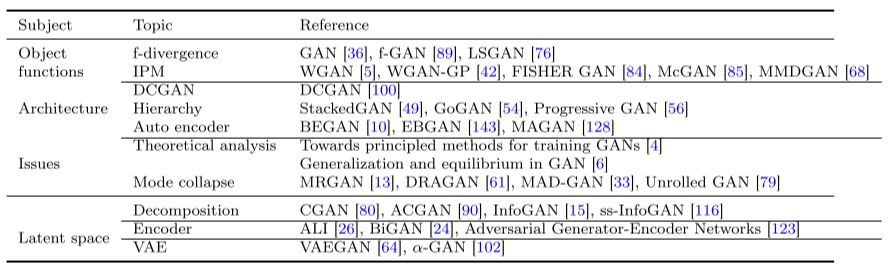

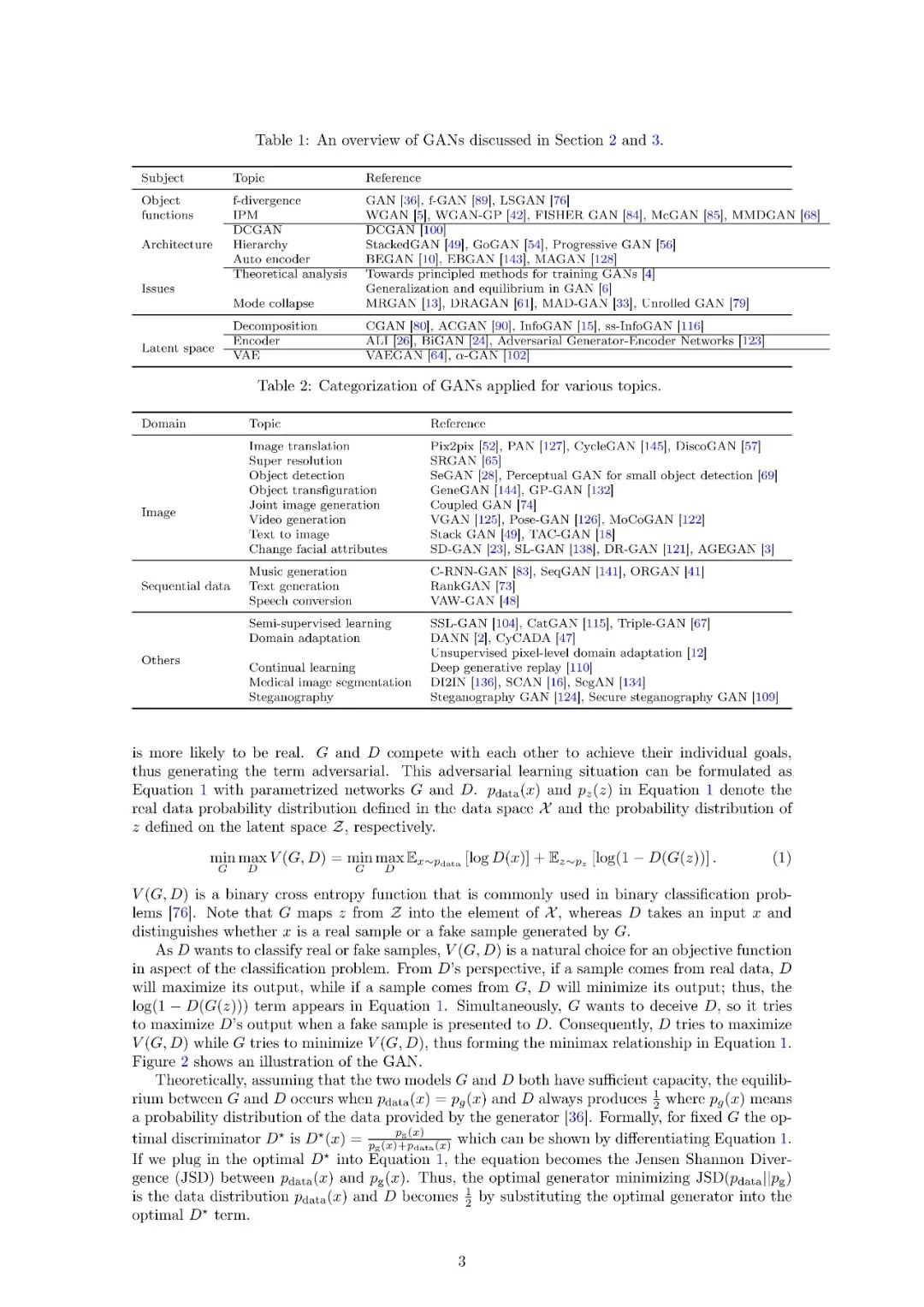

Summary of papers on GAN architectures

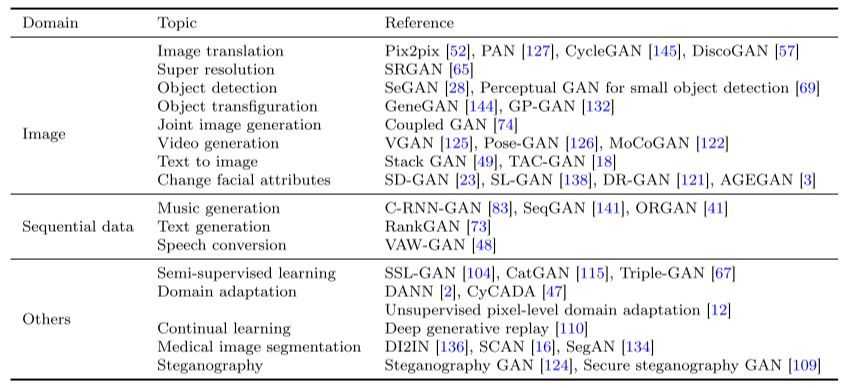

Summary of papers on applications of GANs in different domains and research topics

Paper:

https://arxiv.org/abs/1711.05914

Authors: Yongjun Hong, Uiwon Hwang, Jaeyoon Yoo, Sungroh Yoon

Author page:

https://dblp.uni-trier.de/pers/hd/h/Hong:Yongjun

其他更多详情,下载全文查看:

请关注专知公众号(扫一扫最下面专知二维码,或者点击上方蓝色专知)

后台回复“GANVW” 就可以获取论文的下载链接~

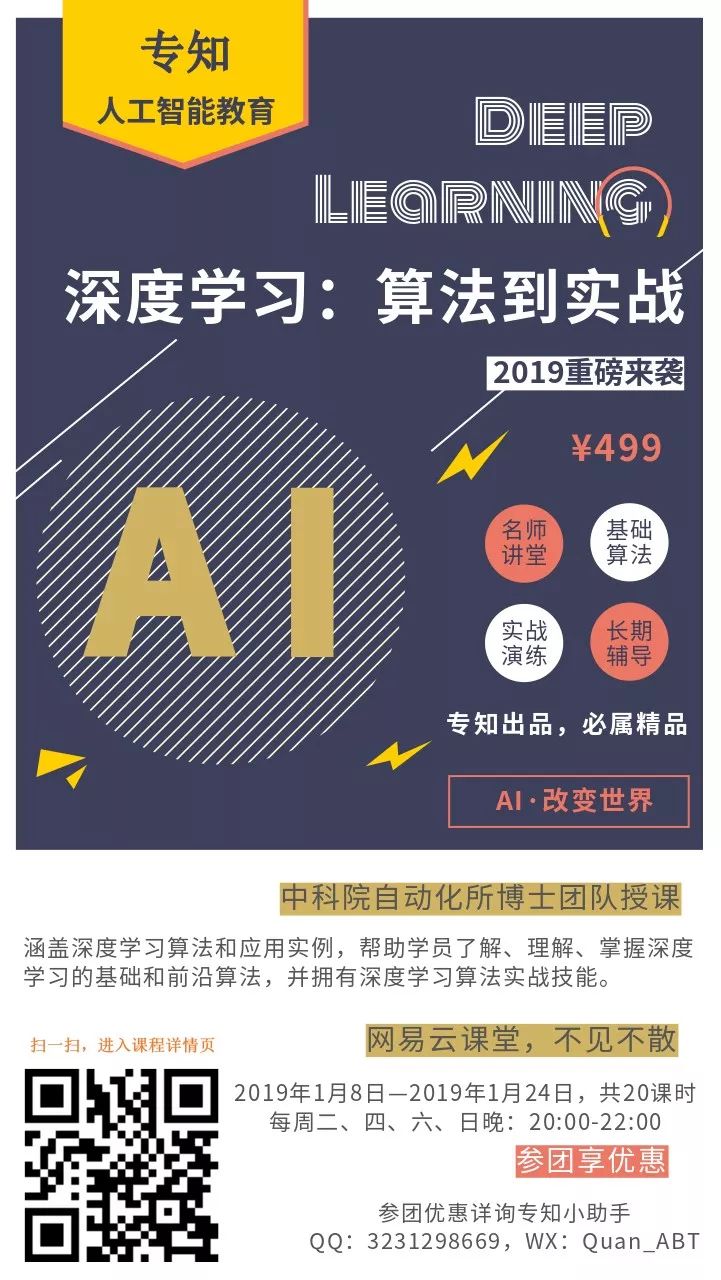

专知2019年1月将开设一门《深度学习:算法到实战》讲述相关GANs,欢迎报名!

专知开课啦!《深度学习: 算法到实战》, 中科院博士为你讲授!

-END-

专 · 知

专知开课啦!《深度学习: 算法到实战》, 中科院博士为你讲授!

请加专知小助手微信(扫一扫如下二维码添加),咨询《深度学习:算法到实战》参团限时优惠报名~

欢迎微信扫一扫加入专知人工智能知识星球群,获取专业知识教程视频资料和与专家交流咨询!

请PC登录www.zhuanzhi.ai或者点击阅读原文,注册登录专知,获取更多AI知识资料!

点击“阅读原文”,了解报名专知《深度学习:算法到实战》课程