
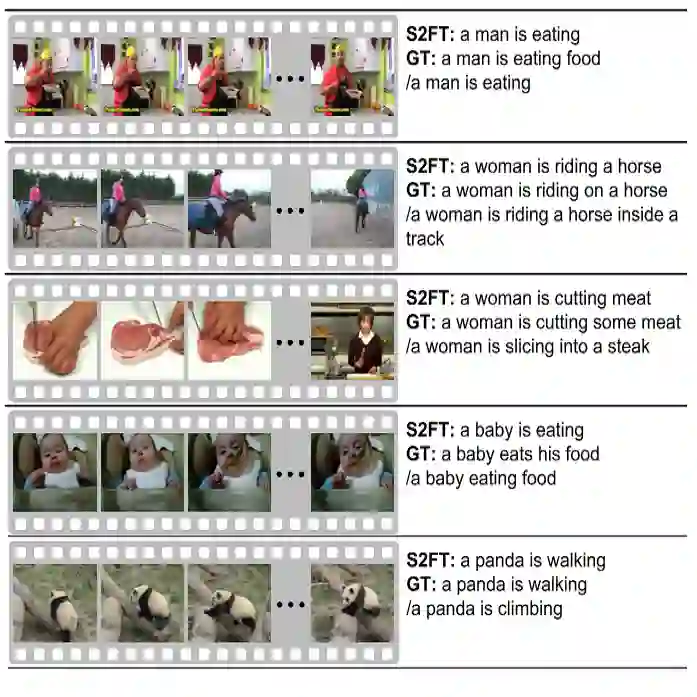

Video captioning is the task of automatically generating descriptive text from a video.

Zhuanzhi Curated Collection: Video Captioning

Getting Started

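- Introduction to Video Analysis Topics: Video Captioning (Video-to-Text Description)

- Teaching Machines to Understand Videos

- Tao Mei: "Describing What It Sees": Step Aside, Humans, AI Is Here

- Deep 3D Residual Neural Networks: A New Breakthrough in Video Understanding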

- Word2VisualVec for Video-To-Text Matching and Ranking

Advanced Papers

2015

- Jeff Donahue, Lisa Anne Hendricks, Sergio Guadarrama, Marcus Rohrbach, Subhashini Venugopalan, Kate Saenko, Trevor Darrell, Long-term Recurrent Convolutional Networks for Visual Recognition and Description, CVPR, 2015.

- [http://arxiv.org/pdf/1411.4389.pdf]

- Subhashini Venugopalan, Huijuan Xu, Jeff Donahue, Marcus Rohrbach, Raymond Mooney, Kate Saenko, Translating Videos to Natural Language Using Deep Recurrent Neural Networks, arXiv:1412.4729.

- UT / UML / Berkeley [http://arxiv.org/pdf/1412.4729]

- Yingwei Pan, Tao Mei, Ting Yao, Houqiang Li, Yong Rui, Joint Modeling Embedding and Translation to Bridge Video and Language, arXiv:1505.01861.

- Microsoft [http://arxiv.org/pdf/1505.01861]

- Subhashini Venugopalan, Marcus Rohrbach, Jeff Donahue, Raymond Mooney, Trevor Darrell, Kate Saenko, Sequence to Sequence--Video to Text, arXiv:1505.00487.

- UT / Berkeley / UML [http://arxiv.org/pdf/1505.00487]

- Li Yao, Atousa Torabi, Kyunghyun Cho, Nicolas Ballas, Christopher Pal, Hugo Larochelle, Aaron Courville, Describing Videos by Exploiting Temporal Structure, arXiv:1502.08029.

- Univ. Montreal / Univ. Sherbrooke [http://arxiv.org/pdf/1502.08029.pdf]

- Anna Rohrbach, Marcus Rohrbach, Bernt Schiele, The Long-Short Story of Movie Description, arXiv:1506.01698.

- MPI / Berkeley [http://arxiv.org/pdf/1506.01698.pdf]

- Yukun Zhu, Ryan Kiros, Richard Zemel, Ruslan Salakhutdinov, Raquel Urtasun, Antonio Torralba, Sanja Fidler, Aligning Books and Movies: Towards Story-like Visual Explanations by Watching Movies and Reading Books, arXiv:1506.06724.

- Univ. Toronto / MIT [http://arxiv.org/pdf/1506.06724.pdf]

- Kyunghyun Cho, Aaron Courville, Yoshua Bengio, Describing Multimedia Content using Attention-based Encoder-Decoder Networks, arXiv:1507.01053.

- Univ. Montreal [http://arxiv.org/pdf/1507.01053.pdf]

2016

- Multimodal Video Description

- Describing Videos using Multi-modal Fusion

- Andrew Shin, Katsunori Ohnishi, Tatsuya Harada, Beyond Caption to Narrative: Video Captioning with Multiple Sentences.

- Jianfeng Dong, Xirong Li, Cees G. M. Snoek, Word2VisualVec: Image and Video to Sentence Matching by Visual Feature Prediction.

2017

- Dotan Kaufman, Gil Levi, Tal Hassner, Lior Wolf, Temporal Tessellation for Video Annotation and Summarization, arXiv:1612.06950.

- TAU / USC [https://arxiv.org/pdf/1612.06950.pdf]

- Chiori Hori, Takaaki Hori, Teng-Yok Lee, Kazuhiro Sumi, John R. Hershey, Tim K. Marks, Attention-Based Multimodal Fusion for Video Description.

- Weakly Supervised Dense Video Captioning (CVPR 2017).

- Multi-Task Video Captioning with Video and Entailment Generation (ACL 2017).

- Junbo Wang, Wei Wang, Yan Huang, Liang Wang, Tieniu Tan, Multimodal Memory Modelling for Video Captioning [https://arxiv.org/abs/1611.05592]

- Xiaodan Liang, Zhiting Hu, Hao Zhang, Chuang Gan, Eric P. Xing, Recurrent Topic-Transition GAN for Visual Paragraph Generation.

- Xuelong Li, Bin Zhao, Xiaoqiang Lu, MAM-RNN: Multi-level Attention Model Based RNN for Video Captioning.

Tutorial

- “Bridging Video and Language with Deep Learning,” Invited tutorial at ECCV-ACM Multimedia, Amsterdam, The Netherlands, Oct. 2016.

- ICIP-2017-Tutorial-Video-and-Language-Pub

Code

- neuralvideo

- Translating Videos to Natural Language Using Deep Recurrent Neural Networks

- Describing Videos by Exploiting Temporal Structure

- SA-tensorflow: Soft attention mechanism for video caption generation

- Sequence to Sequence -- Video to Text

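Domain Experts

- Tao Mei (梅涛): Senior Researcher at Microsoft Research Asia, Fellow of the International Association for Pattern Recognition (IAPR), ACM Distinguished Scientist, and adjunct professor and doctoral supervisor at the University of Science and Technology of China and Sun Yat-sen University. His main research interests are multimedia analysis, computer vision, and machine learning. - [https://www.microsoft.com/en-us/research/people/tmei/]

- Xirong Li 李锡荣: Associate Professor and doctoral supervisor at the MOE Key Laboratory of Data Engineering and Knowledge Engineering, Renmin University of China.

- Jiebo Luo: IEEE/SPIE Fellow, Changjiang Chair Professor, and Professor at the University of Rochester, USA.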

- Subhashini Venugopalan

Datasets

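- MSR-VTT dataset: This dataset was released for the Microsoft Research - Video to Text (MSR-VTT) Challenge at ACM Multimedia 2016 and is available through the Microsoft Multimedia Challenge. It contains 10,000 video clips split into training, validation, and test sets, and each clip is annotated with about 20 English sentences. MSR-VTT also provides a category label for every video (20 categories in total); this label is treated as prior information and is available for the test set as well. All videos include audio. Results are evaluated with four automatic text-generation metrics: METEOR, BLEU@1-4, ROUGE-L, and CIDEr.

- YouTube2Text dataset (also called the MSVD dataset): This dataset is also provided by Microsoft Research, as the Microsoft Research Video Description Corpus. It contains 1,970 YouTube video clips (10 to 25 seconds long), each annotated with about 40 English sentences.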
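BLEU@n, one of the metrics listed for MSR-VTT, scores a generated caption by its clipped n-gram overlap with the reference sentences (about 20 per MSR-VTT clip), combined through a geometric mean and a brevity penalty. The following is a minimal, unsmoothed sentence-level sketch for illustration only, assuming lowercase, whitespace-tokenized captions; the function names are hypothetical. Published MSR-VTT and MSVD numbers come from standard evaluation toolkits that tokenize text and aggregate at the corpus level, so scores from this sketch are not directly comparable to them.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count every n-gram (as a tuple) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, references, n):
    """Clipped n-gram precision: a candidate n-gram is credited at most as many
    times as it occurs in any single reference caption."""
    cand_counts = ngrams(candidate, n)
    total = sum(cand_counts.values())
    if total == 0:
        return 0.0
    max_ref_counts = Counter()
    for ref in references:
        for gram, count in ngrams(ref, n).items():
            max_ref_counts[gram] = max(max_ref_counts[gram], count)
    clipped = sum(min(count, max_ref_counts[gram]) for gram, count in cand_counts.items())
    return clipped / total


def sentence_bleu(candidate, references, max_n=4):
    """Unsmoothed sentence-level BLEU@max_n with uniform n-gram weights (hypothetical helper)."""
    precisions = [modified_precision(candidate, references, n) for n in range(1, max_n + 1)]
    if min(precisions) == 0.0:
        return 0.0  # without smoothing, any zero precision makes the geometric mean zero
    c = len(candidate)
    # Brevity penalty against the reference length closest to the candidate length
    # (ties broken toward the shorter reference).
    r = min((len(ref) for ref in references), key=lambda length: (abs(length - c), length))
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(sum(math.log(p) for p in precisions) / max_n)


if __name__ == "__main__":
    candidate = "a man is playing a guitar".split()
    references = ["a man is playing a guitar".split(), "someone plays the guitar".split()]
    print(sentence_bleu(candidate, references))  # 1.0
```

Corpus-level BLEU instead sums the clipped counts and lengths over all test clips before forming the ratios, so a single short caption with no matching 4-gram does not drive its own score to zero the way it does in this sentence-level version.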

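This is a preliminary version and may contain errors or omissions; suggestions and additions are welcome, and the list will be kept up to date. This article is original content by the Zhuanzhi content team and may not be reproduced without permission. For reprint requests, email fangquanyi@gmail.com or contact the Zhuanzhi assistant on WeChat (Rancho_Fang).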

