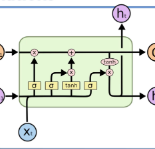

We introduce an architecture to learn joint multilingual sentence representations for 93 languages, belonging to more than 30 different language families and written in 28 different scripts. Our system uses a single BiLSTM encoder with a BPE vocabulary shared across all languages, coupled with an auxiliary decoder and trained on publicly available parallel corpora. This enables us to learn a classifier on top of the resulting sentence embeddings using English annotated data only, and to transfer it to any of the 93 languages without modification. Our approach sets a new state of the art on zero-shot cross-lingual natural language inference for all but one of the 14 languages in the XNLI dataset. We also achieve very competitive results in cross-lingual document classification (MLDoc dataset). Our sentence embeddings are also strong at parallel corpus mining, establishing a new state of the art in the BUCC shared task for 3 of its 4 language pairs. Finally, we introduce a new test set of aligned sentences in 122 languages based on the Tatoeba corpus, and show that our sentence embeddings obtain strong results in multilingual similarity search even for low-resource languages. Our PyTorch implementation, pre-trained encoder and the multilingual test set will be freely available.
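To make the multilingual similarity search setting concrete, the following is a minimal sketch of nearest-neighbor retrieval over sentence embeddings. It is illustrative only: the toy three-dimensional vectors stand in for encoder outputs, the choice of cosine similarity and of an error-rate metric are assumptions not stated in this abstract, and the function names `cosine`, `nearest_neighbors` and `error_rate` are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors (assumed similarity measure)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest_neighbors(queries, candidates):
    """For each query embedding, return the index of the most similar candidate."""
    return [
        max(range(len(candidates)), key=lambda j: cosine(q, candidates[j]))
        for q in queries
    ]


def error_rate(predicted, gold):
    """Fraction of queries whose retrieved index differs from the gold alignment."""
    wrong = sum(1 for p, g in zip(predicted, gold) if p != g)
    return wrong / len(gold)


# Toy embeddings standing in for sentences in two languages (made-up values).
src = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2], [0.1, 0.0, 1.0]]
tgt = [[0.0, 0.9, 0.1], [0.2, 0.1, 0.9], [0.9, 0.0, 0.1]]
gold = [2, 0, 1]  # gold[i] is the index in tgt aligned with src[i]

pred = nearest_neighbors(src, tgt)
print(pred, error_rate(pred, gold))
```

Because all languages share one embedding space, the same retrieval routine applies to any language pair without language-specific components; at realistic scale the exhaustive loop would typically be replaced by an approximate nearest-neighbor index.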
