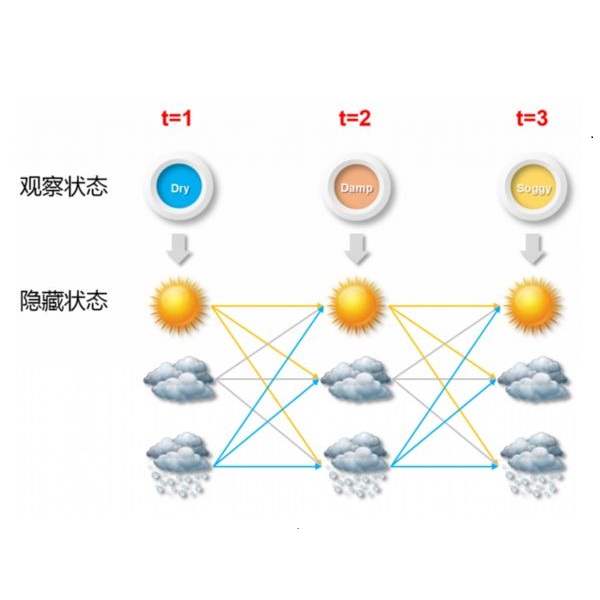

We present a new probabilistic graphical model that generalizes the factorial hidden Markov model (FHMM) for single-channel speech separation (SCSS), where the goal is to separate two speech signals $X(t)$ and $V(t)$ from a single recording of their mixture $Y(t)=X(t)+V(t)$ using trained models of the speakers' speech signals. Current techniques assume that the data used in the training and test phases of the separation model have the same loudness. In this paper, we introduce the gain-adapted FHMM (GFHMM), which extends SCSS to the general case $Y(t)=g_xX(t)+g_vV(t)$, where $g_x$ and $g_v$ are unknown gain factors. GFHMM consists of two independent-state HMMs, which model spectral patterns, and a hidden node, which models the gain difference. A novel inference method based on the Viterbi algorithm and quadratic optimization is presented; it adds minimal computational overhead. Experimental results on 180 mixtures with gain differences from 0 to 15 dB show that the proposed technique significantly outperforms FHMM and its memoryless counterpart, vector quantization (VQ)-based SCSS.
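To make the gain-adapted mixture model $Y(t)=g_xX(t)+g_vV(t)$ concrete, the following is a minimal sketch of the simplest related quadratic problem: if candidate source signals were already known, the two gains could be recovered by ordinary least squares. This is an illustration only, not the GFHMM inference procedure, which the abstract describes as combining the Viterbi algorithm with quadratic optimization over hidden states. The function name `estimate_gains` and the synthetic signals are assumptions made for this example.

```python
import numpy as np


def estimate_gains(y, x, v):
    """Least-squares estimate of (g_x, g_v) in y ≈ g_x * x + g_v * v.

    Hypothetical helper for illustration: it assumes x and v are known,
    which is not the case in the actual separation problem.
    """
    # Stack the two sources as columns so that y ≈ A @ [g_x, g_v].
    A = np.column_stack([x, v])
    # Minimizing ||A g - y||^2 is a quadratic problem solved in closed form.
    gains, *_ = np.linalg.lstsq(A, y, rcond=None)
    return float(gains[0]), float(gains[1])


# Synthetic stand-ins for the two source signals (not real speech data).
rng = np.random.default_rng(0)
x = rng.standard_normal(1000)
v = rng.standard_normal(1000)

# Mixture with gains chosen arbitrarily for this example.
y = 0.5 * x + 2.0 * v

g_x, g_v = estimate_gains(y, x, v)
print(g_x, g_v)
```

Because the synthetic mixture is noiseless, the estimated gains match the ones used to build it up to floating-point error. In the setting the abstract describes, the sources themselves are unknown, so gain estimation has to be coupled with the state inference rather than solved in isolation as it is here.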
