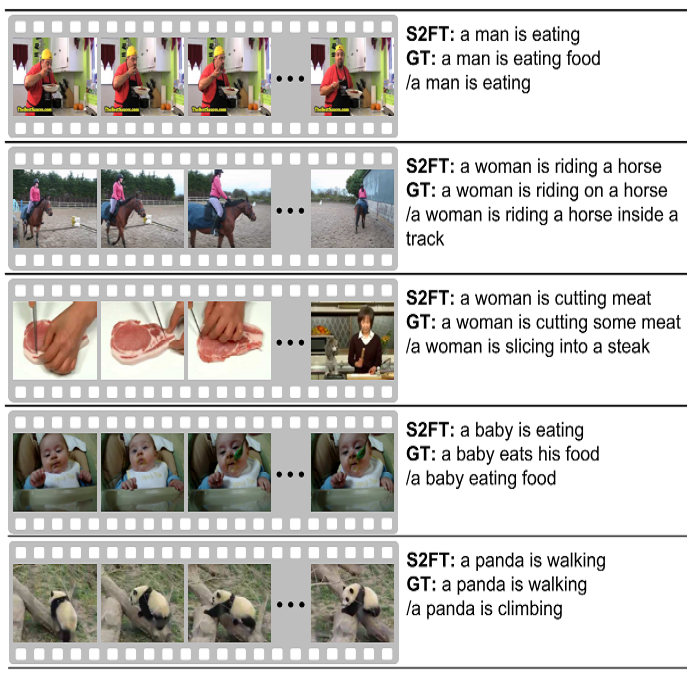

Most existing work on human activity analysis focuses on recognition or early recognition of activity labels from complete or partial observations. Similarly, existing video captioning approaches focus on the observed events in videos. Predicting the labels and captions of future activities, where no frames of the predicted activities have been observed, is a challenging problem with important applications that require an anticipatory response. In this work, we propose a system that can infer the labels and the captions of a sequence of future activities. Our proposed network for label prediction of a future activity sequence is similar to a hybrid Siamese network with three branches: the first branch takes visual features from the objects present in the scene, the second branch takes observed activity features, and the third branch captures the features of the last observed activity. The predicted labels and the observed scene context are then mapped to meaningful captions using a sequence-to-sequence learning-based method. Experiments on three challenging activity analysis datasets and a video description dataset demonstrate that both our label prediction framework and our captioning framework outperform the state of the art.
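
The three-branch label predictor described above can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the abstract does not specify layer types, feature dimensions, or how the branches are fused, so the single linear-plus-ReLU layer per branch, the fusion by concatenation, the shared output head, and all names (`branch`, `init_params`, `predict_future_labels`) are assumptions made only for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def branch(x, w, b):
    """One branch: linear projection followed by ReLU (assumed layer type)."""
    return np.maximum(x @ w + b, 0.0)

def init_params(d_obj, d_obs, d_last, d_hidden, n_labels, n_future):
    """Randomly initialise hypothetical weights for the three branches and the head."""
    def linear(d_in, d_out):
        return rng.normal(0.0, 0.1, (d_in, d_out)), np.zeros(d_out)
    return {
        "obj": linear(d_obj, d_hidden),    # object features from the scene
        "obs": linear(d_obs, d_hidden),    # observed activity features
        "last": linear(d_last, d_hidden),  # last observed activity features
        "head": linear(3 * d_hidden, n_future * n_labels),
    }

def predict_future_labels(params, obj_feat, obs_feat, last_feat, n_future, n_labels):
    """Fuse the three branches (by concatenation, an assumption) and
    return one predicted label index per future step."""
    fused = np.concatenate(
        [
            branch(obj_feat, *params["obj"]),
            branch(obs_feat, *params["obs"]),
            branch(last_feat, *params["last"]),
        ],
        axis=-1,
    )
    w, b = params["head"]
    logits = (fused @ w + b).reshape(-1, n_future, n_labels)
    return logits.argmax(axis=-1)  # shape: (batch, n_future)

# Usage with made-up dimensions: batch of 2, 3 future activities, 5 labels.
params = init_params(d_obj=8, d_obs=6, d_last=4, d_hidden=16, n_labels=5, n_future=3)
preds = predict_future_labels(
    params, np.ones((2, 8)), np.ones((2, 6)), np.ones((2, 4)), n_future=3, n_labels=5
)
```

With untrained random weights the predicted indices are meaningless; the sketch only shows how three separate feature streams could feed a single prediction of a future label sequence. In the described system, those predicted labels, together with the observed scene context, would then be passed to a separate sequence-to-sequence captioning model.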
