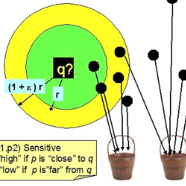

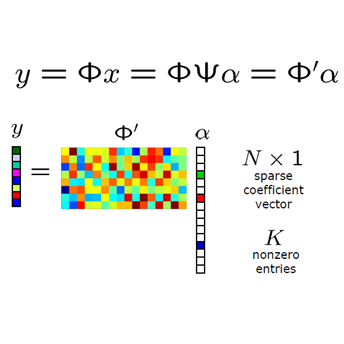

We demonstrate the first possibility of a sub-linear memory sketch for solving the approximate near-neighbor search problem. In particular, we develop an online sketching algorithm that can compress $N$ vectors into a tiny sketch consisting of small arrays of counters whose size scales as $O(N^{b}\log^2{N})$, where $b < 1$ depending on the stability of the near-neighbor search. This sketch is sufficient to identify the top-$v$ near-neighbors with high probability. To the best of our knowledge, this is the first near-neighbor search algorithm that breaks the linear memory ($O(N)$) barrier. We achieve sub-linear memory by combining advances in locality sensitive hashing (LSH) based estimation, especially the recently-published ACE algorithm, with compressed sensing and heavy hitter techniques. We provide strong theoretical guarantees; in particular, our analysis sheds new light on the memory-accuracy tradeoff in the near-neighbor search setting and the role of sparsity in compressed sensing, which could be of independent interest. We rigorously evaluate our framework, which we call RACE (Repeated ACE) data structures on a friend recommendation task on the Google plus graph with more than 100,000 high-dimensional vectors. RACE provides compression that is orders of magnitude better than the random projection based alternative, which is unsurprising given the theoretical advantage. We anticipate that RACE will enable both new theoretical perspectives on near-neighbor search and new methodologies for applications like high-speed data mining, internet-of-things (IoT), and beyond.

翻译:我们展示了解决近邻搜索近似问题的亚线内存草图的第一可能性。 特别是, 我们开发了一个在线草图算法, 可以将一美元向量压缩成一小阵小的草图, 其规模大小为$O( N ⁇ b ⁇ log ⁇ 2{N}), 其大小为 $b < 1美元, 取决于近邻搜索的稳定性。 这个草图足以确定近邻搜索量高的顶端- 5美元。 据我们所知, 这是第一种近邻搜索量的首个近邻搜索算法, 打破了直线内存( O(N)$) 屏障。 我们实现了亚线内线性记忆, 将地方敏感散列( LSH) 的进展合并起来, 特别是最近公布的ACE 算法, 其压缩感应视近邻搜索量的稳定性。 我们的分析为近邻搜索量中的记忆- 准确性交易量交易量交易量的准确性交易量, 以及精确性测测算的功能作用在近近近端的网络内线上( ), 亚内线性测值值值值值值值值值值的亚内, 数据将比亚历值高。