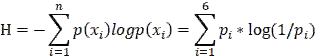

Convolutional neural networks (CNNs) have recently achieved state-of-the-art performance on handwritten Chinese character recognition (HCCR). However, most CNN models employ the softmax activation function and minimize cross-entropy loss, which may cause a loss of inter-class information. To cope with this problem, we propose combining cross entropy with a similarity ranking function and using the combination as the loss function. Experimental results show that the combined loss functions produce higher accuracy in HCCR. This report briefly reviews the cross-entropy loss function and a typical similarity ranking function, Euclidean distance, and also proposes a new similarity ranking function, average variance similarity. Experiments compare the performance of a CNN model trained with three different loss functions. Of the three, softmax cross entropy combined with average variance similarity produces the highest accuracy on handwritten Chinese character recognition.
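
To make the idea of a combined loss concrete, the following is a minimal sketch for a single training sample, not the formulation used in the report. It assumes the Euclidean distance term is measured between the softmax output and the one-hot label, and that the two terms are added with a weight `lam`; the weight, the function names, and the additive form are all illustrative assumptions. The average variance similarity is not sketched because the abstract does not define it.

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, target):
    """Negative log-probability of the true class; clamped to avoid log(0)."""
    return -math.log(max(probs[target], 1e-12))


def euclidean_distance(probs, target):
    """Distance between the predicted distribution and the one-hot label.

    Assumption: the abstract does not say which vectors are compared.
    """
    return math.sqrt(
        sum((p - (1.0 if i == target else 0.0)) ** 2 for i, p in enumerate(probs))
    )


def combined_loss(logits, target, lam=0.5):
    """Cross entropy plus a weighted distance term (lam is hypothetical)."""
    probs = softmax(logits)
    return cross_entropy(probs, target) + lam * euclidean_distance(probs, target)


if __name__ == "__main__":
    scores = [2.0, 1.0, 0.1]  # toy logits for a 3-class problem
    print("cross entropy only:", combined_loss(scores, 0, lam=0.0))
    print("combined loss:     ", combined_loss(scores, 0, lam=0.5))
```

Unlike cross entropy alone, which looks only at the probability assigned to the true class, the distance term depends on how the remaining probability mass is spread over the other classes. This is one plausible reading of how a similarity term could retain inter-class information.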
