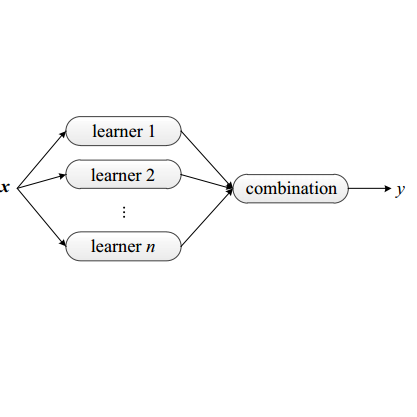

With a growing demand for adopting ML models for a variety of application services, it is vital that the frameworks serving these models are capable of delivering highly accurate predictions with minimal latency along with reduced deployment costs in a public cloud environment. Besides suffering from high latency, prior works in this domain are crucially limited by the accuracy offered by individual models. Intuitively, model ensembling can address the accuracy gap by intelligently combining different models in parallel. However, selecting the appropriate models dynamically at runtime to meet the desired accuracy with low latency at minimal deployment cost is a nontrivial problem. Towards this, we propose Cocktail, a cost-effective ensembling-based model serving framework. Cocktail comprises two key components: (i) a dynamic model selection framework, which reduces the number of models in the ensemble while satisfying the accuracy and latency requirements; (ii) an adaptive resource management (RM) framework that employs a distributed proactive autoscaling policy combined with importance sampling to efficiently allocate resources for the models. The RM framework leverages transient virtual machine (VM) instances to reduce the deployment cost in a public cloud. A prototype implementation of Cocktail on the AWS EC2 platform and exhaustive evaluations using a variety of workloads demonstrate that Cocktail can reduce deployment cost by 1.45x, while providing 2x reduction in latency and satisfying the target accuracy for up to 96% of the requests, when compared to state-of-the-art model-serving frameworks.
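To make the dynamic model selection idea concrete, here is a minimal Python sketch. It is an illustration only, not Cocktail's actual selection algorithm. It assumes a hypothetical pool of models, each with a known per-request latency and stored predictions on a small labeled validation set. Members are assumed to run in parallel, so any model slower than the latency SLO is excluded, and member outputs are combined by majority vote. The names `Model`, `select_ensemble`, and `with_errors` are illustrative. The search tries subsets in increasing size and returns the smallest one that reaches the accuracy target.

```python
from collections import Counter
from dataclasses import dataclass
from itertools import combinations
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Model:
    name: str
    latency_ms: float        # hypothetical per-request inference latency
    preds: Tuple[int, ...]   # hypothetical predictions on a validation set


def majority_vote(models: Sequence[Model], i: int) -> int:
    # Count each member's prediction for sample i; on a tie, Counter.most_common
    # returns the label encountered first, i.e. the earliest model's vote.
    votes = Counter(m.preds[i] for m in models)
    return votes.most_common(1)[0][0]


def ensemble_accuracy(models: Sequence[Model], labels: Sequence[int]) -> float:
    correct = sum(majority_vote(models, i) == y for i, y in enumerate(labels))
    return correct / len(labels)


def select_ensemble(
    candidates: Sequence[Model],
    labels: Sequence[int],
    target_acc: float,
    latency_slo_ms: float,
) -> Optional[Tuple[List[str], float]]:
    """Smallest subset whose majority vote meets target_acc within the SLO."""
    # Members run in parallel, so any model slower than the SLO is excluded.
    eligible = [m for m in candidates if m.latency_ms <= latency_slo_ms]
    for k in range(1, len(eligible) + 1):
        best: Optional[Tuple[Tuple[Model, ...], float]] = None
        for subset in combinations(eligible, k):
            acc = ensemble_accuracy(subset, labels)
            if acc >= target_acc and (best is None or acc > best[1]):
                best = (subset, acc)
        if best is not None:  # fewest models that satisfy the target
            return [m.name for m in best[0]], best[1]
    return None  # no eligible subset reaches the target


def with_errors(labels: Sequence[int], wrong: set) -> Tuple[int, ...]:
    # Toy binary predictor that is wrong exactly on the given sample indices.
    return tuple(1 - y if i in wrong else y for i, y in enumerate(labels))


labels = (0, 1, 1, 0, 1, 0, 1, 1, 0, 1)
pool = [
    Model("A", 20, with_errors(labels, {0, 1, 2})),
    Model("B", 35, with_errors(labels, {3, 4, 5})),
    Model("C", 50, with_errors(labels, {6, 7, 8})),
    Model("D", 200, labels),  # perfect but too slow for a 100 ms SLO
]
print(select_ensemble(pool, labels, target_acc=0.9, latency_slo_ms=100))
# (['A', 'B', 'C'], 1.0)
```

Each of A, B, and C is only 70% accurate alone, but their errors fall on different samples, so a three-model vote reaches the 0.9 target. Model D would be perfect, but it is filtered out by the latency SLO. The exhaustive subset search is exponential in the pool size and only suits small pools; a real selector would need a cheaper heuristic.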

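The adaptive resource management component described in the abstract can be sketched in the same hedged way. The abstract does not specify Cocktail's load predictor or weighting formula, so the Python below makes two stand-in assumptions. First, it forecasts the next interval's request rate with a simple exponentially weighted moving average (EWMA). Second, it approximates the importance-sampling idea by weighting each model with the fraction of recent requests whose ensemble actually used it; this is plain usage-frequency weighting, not a statistical importance-sampling estimator. `plan_instances`, `capacity_rps`, and all sample numbers are hypothetical. Sizing from a forecast rather than from the current load is what makes the policy proactive.

```python
import math
from collections import Counter
from typing import Dict, Iterable, Mapping, Sequence


def ewma_forecast(rates: Sequence[float], alpha: float = 0.5) -> float:
    """Forecast next-interval request rate (req/s) as an EWMA of past rates."""
    if not rates:
        raise ValueError("need at least one observed rate")
    estimate = rates[0]
    for r in rates[1:]:
        estimate = alpha * r + (1 - alpha) * estimate
    return estimate


def usage_weights(selections: Iterable[Sequence[str]]) -> Dict[str, float]:
    """Fraction of recent requests whose selected ensemble included each model."""
    selections = list(selections)
    counts = Counter(name for sel in selections for name in set(sel))
    return {name: c / len(selections) for name, c in counts.items()}


def plan_instances(
    rates: Sequence[float],
    selections: Iterable[Sequence[str]],
    capacity_rps: Mapping[str, float],
    alpha: float = 0.5,
) -> Dict[str, int]:
    """Instances per model for the next interval, provisioned ahead of the load."""
    forecast = ewma_forecast(rates, alpha)
    weights = usage_weights(selections)
    # Each model only serves the share of traffic routed to it, so scale the
    # forecast by its usage weight before dividing by per-instance capacity.
    return {
        name: math.ceil(forecast * w / capacity_rps[name])
        for name, w in sorted(weights.items())
    }


recent_rates = [100, 120, 140, 160]            # hypothetical req/s per interval
recent_selections = [["A", "B", "C"], ["A", "B"], ["A"], ["A", "C"]]
capacity = {"A": 50, "B": 40, "C": 20}          # hypothetical req/s per instance
print(plan_instances(recent_rates, recent_selections, capacity))
# {'A': 3, 'B': 2, 'C': 4}
```

Here the forecast is 142.5 req/s. Model A appears in every recent ensemble and gets the full load, while B and C each get half. In a deployment following the abstract, these instance counts would be filled with transient (for example, spot) VMs where possible to lower cost; that provisioning step is omitted from this sketch.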