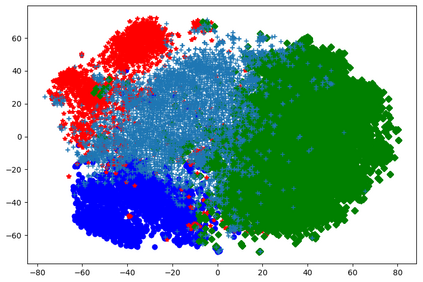

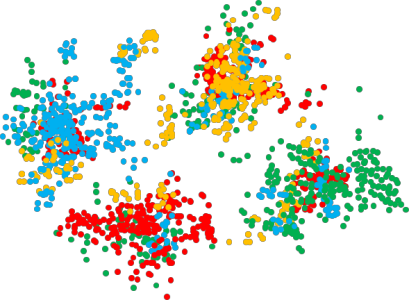

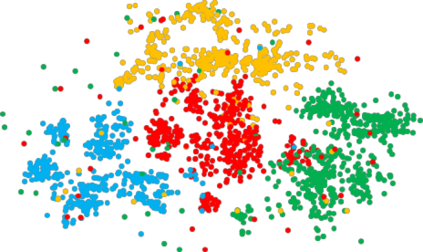

Context-Aware Emotion Recognition (CAER) is a crucial and challenging task that aims to perceive the emotional states of a target person using contextual information. Recent approaches invariably focus on designing sophisticated architectures or mechanisms to extract seemingly meaningful representations from subjects and contexts. However, a long-overlooked issue is that context bias in existing datasets leads to a significantly unbalanced distribution of emotional states across different context scenarios. Concretely, this harmful bias acts as a confounder that misleads existing models, which are trained with conventional likelihood estimation, into learning spurious correlations, significantly limiting their performance. To tackle the issue, this paper provides a causality-based perspective to disentangle the models from the impact of such bias, and formulates the causalities among variables in the CAER task via a tailored causal graph. Then, we propose a Contextual Causal Intervention Module (CCIM) based on the backdoor adjustment to de-confound the confounder and exploit the true causal effect for model training. CCIM is a plug-in, model-agnostic module that improves diverse state-of-the-art approaches by considerable margins. Extensive experiments on three benchmark datasets demonstrate the effectiveness of our CCIM and the significance of causal insight.
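To make the contrast between conventional likelihood estimation and backdoor adjustment concrete, here is a minimal toy sketch. It is not the CCIM implementation, which the abstract does not detail. It only illustrates the standard backdoor formula P(Y | do(X)) = Σ_z P(Y | X, z) P(z) against the observational P(Y | X) = Σ_z P(Y | X, z) P(z | X). The binary variables (context Z, subject feature X, emotion Y), all probability values, and the function names `likelihood` and `backdoor` are assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical toy distribution (not from the paper).
# Z: context scenario (confounder), X: subject feature, Y: emotion label.
p_z = np.array([0.5, 0.5])                  # P(Z=z)
p_x_given_z = np.array([[0.9, 0.1],         # P(X=x | Z=0)
                        [0.1, 0.9]])        # P(X=x | Z=1)
# P(Y=1 | X=x, Z=z), indexed as [z, x]
p_y1_given_xz = np.array([[0.2, 0.3],
                          [0.7, 0.8]])


def likelihood(x: int) -> float:
    """Observational P(Y=1 | X=x): weights each context by P(z | x)."""
    joint = p_x_given_z[:, x] * p_z          # P(x, z) for each z
    p_z_given_x = joint / joint.sum()        # Bayes' rule
    return float(np.sum(p_y1_given_xz[:, x] * p_z_given_x))


def backdoor(x: int) -> float:
    """Interventional P(Y=1 | do(X=x)): weights each context by the prior P(z)."""
    return float(np.sum(p_y1_given_xz[:, x] * p_z))


# Gap attributed to X by each estimator.
observed_gap = likelihood(1) - likelihood(0)
causal_gap = backdoor(1) - backdoor(0)
print(f"observational gap: {observed_gap:.2f}, causal gap: {causal_gap:.2f}")
```

In this toy setting the context strongly predicts both the feature and the emotion, so the observational estimate attributes a much larger effect to X than the adjusted one does. That inflation is the kind of spurious correlation the abstract attributes to context bias.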
