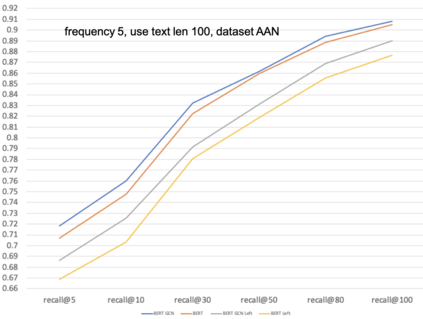

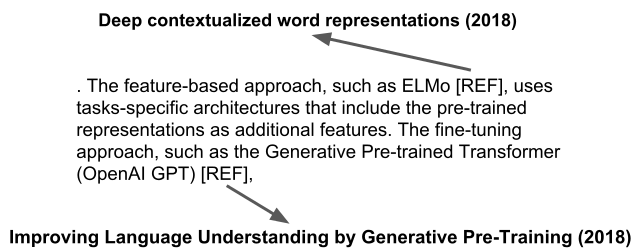

With the tremendous growth in the number of published scientific papers, searching for references while writing a paper has become a time-consuming process. A technique that could add a reference citation at the appropriate place in a sentence would therefore be beneficial. From this perspective, context-aware citation recommendation has been researched for around two decades. Many researchers have used the text surrounding the citation tag, called the context sentence, together with the metadata of the target paper to find the appropriate cited research. However, the lack of well-organized benchmark datasets, and of models that attain high performance, has made this research difficult. In this paper, we propose a deep-learning-based model and a well-organized dataset for context-aware paper citation recommendation. Our model comprises a document encoder and a context encoder, which use a Graph Convolutional Network (GCN) layer and Bidirectional Encoder Representations from Transformers (BERT), a model pre-trained on textual data, respectively. By modifying the related PeerRead dataset, we propose a new dataset called FullTextPeerRead, which contains the context sentences for cited references together with paper metadata. To the best of our knowledge, this is the first well-organized dataset for context-aware paper recommendation. The results indicate that the proposed model, used with the proposed dataset, attains state-of-the-art performance, improving mean average precision (MAP) and recall@k by more than 28%.
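The abstract reports its gains in mean average precision (MAP) and recall@k. The sketch below shows one common way to compute both for ranked citation recommendations. It assumes each context sentence is a query that yields a ranked list of candidate paper IDs and has a set of papers it actually cites; the function names and paper IDs are hypothetical, and the paper's exact evaluation protocol (for example, whether average precision is truncated at k) may differ.

```python
from typing import List, Sequence, Set


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the actually cited papers that appear in the top-k recommendations."""
    if not relevant:
        return 0.0
    # Use a set so a duplicated ID in the ranking cannot push recall above 1.
    return len(set(ranked[:k]) & relevant) / len(relevant)


def average_precision(ranked: Sequence[str], relevant: Set[str]) -> float:
    """Average of precision@i over every rank i holding a cited paper.

    Assumes the ranking has no duplicate IDs. Cited papers that never
    appear in the ranking contribute zero.
    """
    if not relevant:
        return 0.0
    hits = 0
    precision_sum = 0.0
    for i, paper in enumerate(ranked, start=1):
        if paper in relevant:
            hits += 1
            precision_sum += hits / i  # precision at this rank
    return precision_sum / len(relevant)


def mean_average_precision(rankings: List[Sequence[str]], relevants: List[Set[str]]) -> float:
    """MAP: mean of the per-query average precision."""
    scores = [average_precision(r, rel) for r, rel in zip(rankings, relevants)]
    return sum(scores) / len(scores) if scores else 0.0


if __name__ == "__main__":
    # Two hypothetical context sentences with their ranked candidate papers.
    rankings = [["p3", "p1", "p7", "p2"], ["p5", "p4", "p9"]]
    relevants = [{"p1"}, {"p5", "p9"}]
    print(round(mean_average_precision(rankings, relevants), 4))  # → 0.6667
    print([recall_at_k(r, rel, 2) for r, rel in zip(rankings, relevants)])  # → [1.0, 0.5]
```

Recall@k only asks whether the cited papers reach the top k, while average precision also rewards placing them higher, which is why the two metrics are usually reported together.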

翻译:随着发表科学论文数量的巨大增长,在撰写科学论文时寻找参考文件是一个耗时的过程。一种可以在一个句子的适当位置添加参考引用的技巧将是有益的。从这个角度,对背景认知引用建议进行了近20年的研究。许多研究人员利用称为背景句的文本数据,围绕引用标记,以及目标文件的元数据寻找适当的引用研究。然而,缺乏组织完善的基准基准数据集和没有能够取得高性能的模型,使得研究变得很困难。在本文中,我们提出一个深层次学习的模型和结构完善的数据集,用于背景认知的论文引用建议。我们的模型包括一个文件编码器和上下文编码器,它使用图动网络(GCN)层和来自变换器(BERT)的双向编码显示,这是经过预先培训的文本数据模型模型。通过修改,我们提出了一个新的数据集,名为“全流PeerRead”,其中含有背景说明背景引用的参考文件和纸质元数据。 最佳的拟议数据显示的是“组织化”数据,最优的模型和最精确的版本,可以显示的是“纸质”数据。