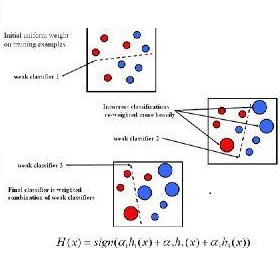

The AdaBoost algorithm is notably resistant to overfitting. Understanding this phenomenon is a fascinating fundamental theoretical problem. Many studies have sought to explain it from the statistical view and from margin theory. In this paper, we examine it from a feature learning viewpoint and propose the AdaBoost+SVM algorithm, which explains AdaBoost's resistance to overfitting in a direct and easily understood way. First, we use the AdaBoost algorithm to learn the base classifiers. Then, instead of directly forming a weighted combination of the base classifiers, we regard them as features and feed them to an SVM classifier. This yields new coefficients and a new bias, which are used to construct the final classifier. We explain the rationale for this approach and present a theorem stating that the performance of the SVM does not get worse as the dimension of these features increases, which accounts for AdaBoost's resistance to overfitting.
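The pipeline described in the abstract can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the base classifiers are assumed to be decision stumps, the SVM is a linear soft-margin SVM trained by plain subgradient descent as a stand-in for a real solver, and every name (`train_adaboost`, `to_features`, `train_linear_svm`, the toy data) is hypothetical.

```python
import math

def stump_predict(stump, x):
    """Decision stump (assumed base classifier): threshold one coordinate."""
    feature, threshold, polarity = stump
    return polarity if x[feature] <= threshold else -polarity

def train_adaboost(X, y, rounds):
    """Discrete AdaBoost over stumps; returns the stumps and their weights."""
    n = len(X)
    weights = [1.0 / n] * n
    candidates = [(f, x[f], p) for f in range(len(X[0])) for x in X for p in (1, -1)]
    stumps, alphas = [], []
    for _ in range(rounds):
        def weighted_error(s):
            return sum(w for w, x, t in zip(weights, X, y) if stump_predict(s, x) != t)
        best = min(candidates, key=weighted_error)
        # Clamp the error so the logarithm below stays finite.
        err = min(max(weighted_error(best), 1e-10), 1 - 1e-10)
        alpha = 0.5 * math.log((1 - err) / err)
        # Misclassified samples gain weight, correctly classified ones lose it.
        weights = [w * math.exp(-alpha * t * stump_predict(best, x))
                   for w, x, t in zip(weights, X, y)]
        total = sum(weights)
        weights = [w / total for w in weights]
        stumps.append(best)
        alphas.append(alpha)
    return stumps, alphas

def to_features(stumps, X):
    """Treat each base classifier's output as one feature of the sample."""
    return [[stump_predict(s, x) for s in stumps] for x in X]

def train_linear_svm(H, y, lam=0.01, lr=0.1, epochs=500):
    """Full-batch subgradient descent on lam/2*||w||^2 + mean hinge loss."""
    n, d = len(H), len(H[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]
        grad_b = 0.0
        for h, t in zip(H, y):
            margin = t * (sum(wj * hj for wj, hj in zip(w, h)) + b)
            if margin < 1:  # only margin violators contribute to the hinge subgradient
                for j in range(d):
                    grad_w[j] -= t * h[j] / n
                grad_b -= t / n
        w = [wj - lr * g for wj, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def predict(stumps, w, b, x):
    """Final classifier: sign of the SVM score over the stump features."""
    h = [stump_predict(s, x) for s in stumps]
    score = sum(wj * hj for wj, hj in zip(w, h)) + b
    return 1 if score >= 0 else -1

# Toy 1-D data (made up for illustration): positive, negative, positive bands.
X = [[float(i)] for i in range(10)]
y = [1, 1, 1, -1, -1, -1, 1, 1, 1, 1]

stumps, alphas = train_adaboost(X, y, rounds=5)
H = to_features(stumps, X)
w, b = train_linear_svm(H, y)
predictions = [predict(stumps, w, b, x) for x in X]
```

Here `alphas` are the combination weights AdaBoost itself would use, while `w` and `b` are the re-learned coefficients and bias. Comparing the two on held-out data is one way to probe the abstract's claim empirically.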
