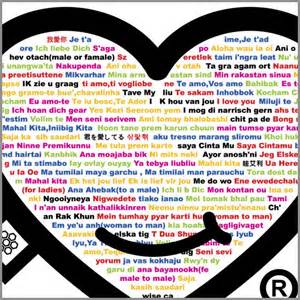

A key to solving visual question answering (VQA) lies in how to fuse the visual and language features extracted from an input image and question. We show that an attention mechanism enabling dense, bi-directional interactions between the two modalities helps boost the accuracy of answer prediction. Specifically, we present a simple architecture that is fully symmetric between visual and language representations, in which each question word attends to image regions and each image region attends to question words. It can be stacked to form a hierarchy for multi-step interactions between an image-question pair. We show through experiments that the proposed architecture achieves a new state of the art on VQA and VQA 2.0 despite its small size. We also present a qualitative evaluation demonstrating how the proposed attention mechanism generates reasonable attention maps on images and questions, which lead to correct answer prediction.
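To make the symmetric attention idea concrete, here is a minimal NumPy sketch of one bi-directional attention layer and a stack of such layers. It is an illustration under stated assumptions, not the architecture evaluated in the paper: the function names `dense_co_attention` and `stack_layers`, the dot-product affinity scaled by the square root of the feature dimension, and the residual-sum fusion are all choices made here for brevity. The abstract specifies only that each question word attends to image regions, each image region attends to question words, and the layer can be stacked.

```python
import numpy as np


def softmax(x, axis):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def dense_co_attention(V, Q):
    """One symmetric attention step between image regions and question words.

    V: (num_regions, d) image region features.
    Q: (num_words, d) question word features.
    Returns updated V, updated Q, and the two attention maps.
    """
    d = V.shape[1]
    # Affinity between every word and every region (scaling is an assumption).
    affinity = Q @ V.T / np.sqrt(d)            # (num_words, num_regions)

    # Each question word attends to image regions: normalize over regions.
    word_to_region = softmax(affinity, axis=1)  # rows sum to 1
    # Each image region attends to question words: normalize over words.
    region_to_word = softmax(affinity, axis=0).T  # (num_regions, num_words)

    attended_regions = word_to_region @ V       # (num_words, d)
    attended_words = region_to_word @ Q         # (num_regions, d)

    # Fusion by residual sum is an assumption made for this sketch.
    V_new = V + attended_words
    Q_new = Q + attended_regions
    return V_new, Q_new, word_to_region, region_to_word


def stack_layers(V, Q, num_layers=2):
    """Apply the layer repeatedly for multi-step image-question interaction."""
    for _ in range(num_layers):
        V, Q, _, _ = dense_co_attention(V, Q)
    return V, Q
```

Because both attention maps are derived from the same affinity matrix, the layer treats the two modalities symmetrically, and its outputs keep the input shapes, which is what allows it to be stacked.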
