BERT/Transformer/迁移学习NLP资源大列表

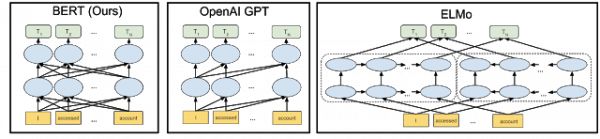

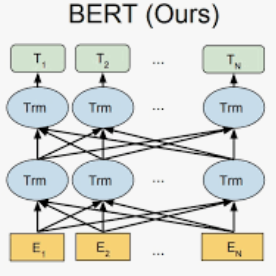

【导读】cedrickchee维护这个项目包含用于自然语言处理(NLP)的大型机器(深度)学习资源,重点关注转换器(BERT)的双向编码器表示、注意机制、转换器架构/网络和NLP中的传输学习。

https://github.com/cedrickchee/awesome-bert-nlp

Papers

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding by Jacob Devlin, Ming-Wei Chang, Kenton Lee and Kristina Toutanova.

Transformer-XL: Attentive Language Models Beyond a Fixed-Length Context by Zihang Dai, Zhilin Yang, Yiming Yang, William W. Cohen, Jaime Carbonell, Quoc V. Le and Ruslan Salakhutdinov.

Uses smart caching to improve the learning of long-term dependency in Transformer. Key results: state-of-art on 5 language modeling benchmarks, including ppl of 21.8 on One Billion Word (LM1B) and 0.99 on enwiki8. The authors claim that the method is more flexible, faster during evaluation (1874 times speedup), generalizes well on small datasets, and is effective at modeling short and long sequences.

Conditional BERT Contextual Augmentation by Xing Wu, Shangwen Lv, Liangjun Zang, Jizhong Han and Songlin Hu.

SDNet: Contextualized Attention-based Deep Network for Conversational Question Answering by Chenguang Zhu, Michael Zeng and Xuedong Huang.

Language Models are Unsupervised Multitask Learners by Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei and Ilya Sutskever.

The Evolved Transformer by David R. So, Chen Liang and Quoc V. Le.

They used architecture search to improve Transformer architecture. Key is to use evolution and seed initial population with Transformer itself. The architecture is better and more efficient, especially for small size models.

Articles

BERT and Transformer

Open Sourcing BERT: State-of-the-Art Pre-training for Natural Language Processing from Google AI.

The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning).

Dissecting BERT by Miguel Romero and Francisco Ingham - Understand BERT in depth with an intuitive, straightforward explanation of the relevant concepts.

A Light Introduction to Transformer-XL.

Generalized Language Models by Lilian Weng, Research Scientist at OpenAI.

Attention Concept

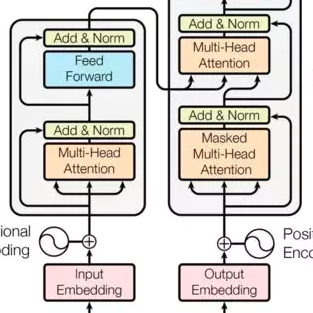

The Annotated Transformer by Harvard NLP Group - Further reading to understand the "Attention is all you need" paper.

Attention? Attention! - Attention guide by Lilian Weng from OpenAI.

Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models With Attention) by Jay Alammar, an Instructor from Udacity ML Engineer Nanodegree.

Transformer Architecture

The Transformer blog post.

The Illustrated Transformer by Jay Alammar, an Instructor from Udacity ML Engineer Nanodegree.

Watch Łukasz Kaiser’s talk walking through the model and its details.

Transformer-XL: Unleashing the Potential of Attention Models by Google Brain.

Generative Modeling with Sparse Transformers by OpenAI - an algorithmic improvement of the attention mechanism to extract patterns from sequences 30x longer than possible previously.

OpenAI Generative Pre-Training Transformer (GPT) and GPT-2

Better Language Models and Their Implications.

Improving Language Understanding with Unsupervised Learning - this is an overview of the original GPT model.

🦄 How to build a State-of-the-Art Conversational AI with Transfer Learning by Hugging Face.

Additional Reading

How to Build OpenAI's GPT-2: "The AI That's Too Dangerous to Release".

OpenAI’s GPT2 - Food to Media hype or Wake Up Call?

Official Implementations

google-research/bert - TensorFlow code and pre-trained models for BERT.

Other Implementations

PyTorch

huggingface/pytorch-pretrained-BERT - A PyTorch implementation of Google AI's BERT model with script to load Google's pre-trained models by Hugging Face.

codertimo/BERT-pytorch - Google AI 2018 BERT pytorch implementation.

innodatalabs/tbert - PyTorch port of BERT ML model.

kimiyoung/transformer-xl - Code repository associated with the Transformer-XL paper.

dreamgonfly/BERT-pytorch - PyTorch implementation of BERT in "BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding".

dhlee347/pytorchic-bert - Pytorch implementation of Google BERT

Keras

Separius/BERT-keras - Keras implementation of BERT with pre-trained weights.

CyberZHG/keras-bert - Implementation of BERT that could load official pre-trained models for feature extraction and prediction.

TensorFlow

guotong1988/BERT-tensorflow - BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.

kimiyoung/transformer-xl - Code repository associated with the Transformer-XL paper.

Chainer

soskek/bert-chainer - Chainer implementation of "BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding".

-END-

专 · 知

专知,专业可信的人工智能知识分发,让认知协作更快更好!欢迎登录www.zhuanzhi.ai,注册登录专知,获取更多AI知识资料!

欢迎微信扫一扫加入专知人工智能知识星球群,获取最新AI专业干货知识教程视频资料和与专家交流咨询!

请加专知小助手微信(扫一扫如下二维码添加),加入专知人工智能主题群,咨询技术商务合作~

专知《深度学习:算法到实战》课程全部完成!550+位同学在学习,现在报名,限时优惠!网易云课堂人工智能畅销榜首位!

点击“阅读原文”,了解报名专知《深度学习:算法到实战》课程