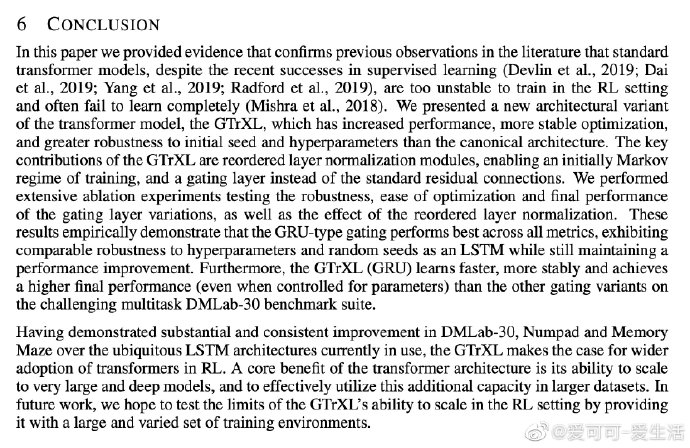

*Stabilizing Transformers for Reinforcement Learning*. E. Parisotto, H. F. Song, J. W. Rae, R. Pascanu, C. Gulcehre, S. M. Jayakumar, M. Jaderberg, R. L. Kaufman, A. Clark, S. Noury, M. M. Botvinick, N. Heess, R. Hadsell [DeepMind] (2019)


Related content

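Reinforcement learning (RL) is an area of machine learning concerned with how software agents should take actions in an environment to maximize cumulative reward. Alongside supervised learning and unsupervised learning, it is one of the three basic machine learning paradigms.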

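Reinforcement learning differs from supervised learning in that it needs neither labeled input/output pairs nor explicit correction of suboptimal actions. Instead, the focus is on balancing exploration (of uncharted territory) with exploitation (of current knowledge).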
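One common way to illustrate the exploration/exploitation trade-off is an epsilon-greedy agent on a multi-armed bandit. The sketch below is only an illustration under assumed settings: the function name `epsilon_greedy_bandit`, the Bernoulli arm probabilities, and the values of `epsilon`, `steps`, and `seed` are all hypothetical choices, not something taken from the paper above.

```python
import random


def epsilon_greedy_bandit(true_means, steps=5000, epsilon=0.1, seed=0):
    """Run epsilon-greedy on Bernoulli arms (illustrative assumption)."""
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms        # how often each arm was pulled
    estimates = [0.0] * n_arms   # running mean reward per arm

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a uniformly random arm.
            arm = rng.randrange(n_arms)
        else:
            # Exploit: pick the arm with the best current estimate.
            arm = max(range(n_arms), key=lambda a: estimates[a])

        # The agent only sees a sampled reward, never the true mean.
        reward = 1.0 if rng.random() < true_means[arm] else 0.0
        counts[arm] += 1
        # Incremental update of the running mean.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]

    return counts, estimates


# Hypothetical arm probabilities chosen for the example.
counts, estimates = epsilon_greedy_bandit([0.2, 0.5, 0.8])
```

No labels or corrections are given here: the agent learns which arm is best purely from the rewards it observes, and `epsilon` controls how much of its budget goes to trying arms it currently believes are worse.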

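The environment is typically formulated as a Markov decision process (MDP), because many reinforcement learning algorithms for this setting use dynamic programming techniques. The main difference between classical dynamic programming methods and reinforcement learning algorithms is that the latter do not assume knowledge of an exact mathematical model of the MDP, and they target large MDPs where exact methods become infeasible.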
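To make the contrast concrete, here is a minimal sketch of the classical dynamic programming side: value iteration on a tiny MDP whose transition model is fully known. The two-state MDP, the name `value_iteration`, and the discount `gamma=0.9` are assumptions made up for this example. A reinforcement learning algorithm would instead have to estimate these values from sampled experience, without access to `TRANSITIONS`.

```python
def value_iteration(transitions, gamma=0.9, tol=1e-8):
    """Value iteration for a known MDP.

    transitions[s][a] is a list of (probability, next_state, reward)
    tuples: the exact model that classical dynamic programming assumes.
    """
    n_states = len(transitions)
    values = [0.0] * n_states

    def q_values(s):
        # Expected return of each action in state s under current values.
        return [
            sum(p * (r + gamma * values[s2]) for p, s2, r in outcomes)
            for outcomes in transitions[s]
        ]

    while True:
        delta = 0.0
        for s in range(n_states):
            best = max(q_values(s))  # Bellman optimality backup
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            break

    # Greedy policy with respect to the converged values.
    policy = []
    for s in range(n_states):
        q = q_values(s)
        policy.append(q.index(max(q)))
    return values, policy


# Hypothetical two-state MDP: action 0 stays, action 1 switches state.
TRANSITIONS = [
    [[(1.0, 0, 0.0)], [(1.0, 1, 1.0)]],  # state 0
    [[(1.0, 1, 2.0)], [(1.0, 0, 0.0)]],  # state 1
]

values, policy = value_iteration(TRANSITIONS)
```

In this toy model the optimal behavior is to move from state 0 to state 1 and then stay there. Sweeping over every state like this is only practical for small MDPs, which is why large problems call for the approximate, sample-based methods the paragraph above describes.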
