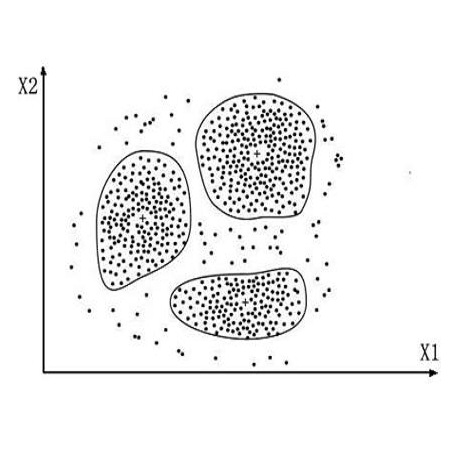

Internet memes are characterised by text interspersed amongst visual elements. State-of-the-art multimodal meme classifiers do not account for the relative positions of these elements across the two modalities, even though where text and visual elements are placed carries latent meaning. On two meme sentiment classification datasets, we systematically show performance gains from incorporating the spatial positions of visual objects, faces, and text clusters extracted from memes. We also present facial embedding as an impactful enhancement to image representation in a multimodal meme classifier. Finally, we show that incorporating this spatial information allows our fully automated approaches to outperform their corresponding baselines that rely on additional human validation of OCR-extracted text.
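The abstract does not say how spatial position is encoded, so the following is only a minimal sketch of one common option: normalising each element's bounding box by the image size so that positions are comparable across memes of different resolutions. The `Box` type, the `spatial_features` function, and the choice of seven features are assumptions made for illustration, not the authors' method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Box:
    """Hypothetical container for one extracted element of a meme."""
    kind: str   # assumed labels: "object", "face", or "text"
    x0: float   # left edge, in pixels
    y0: float   # top edge, in pixels
    x1: float   # right edge, in pixels
    y1: float   # bottom edge, in pixels


def spatial_features(box: Box, width: float, height: float) -> List[float]:
    """Return [x0, y0, x1, y1, centre_x, centre_y, area], scaled to the unit square."""
    if width <= 0 or height <= 0:
        raise ValueError("image width and height must be positive")
    nx0, ny0 = box.x0 / width, box.y0 / height
    nx1, ny1 = box.x1 / width, box.y1 / height
    return [
        nx0, ny0, nx1, ny1,
        (nx0 + nx1) / 2,            # horizontal centre
        (ny0 + ny1) / 2,            # vertical centre
        (nx1 - nx0) * (ny1 - ny0),  # fraction of the image covered
    ]


# Example: a caption spanning the top quarter of a 400x400 meme.
top_caption = Box("text", 0, 0, 400, 100)
features = spatial_features(top_caption, 400, 400)
```

A vector like this could be concatenated with the element's text or image embedding before classification; whether the paper uses this or a different encoding is not stated in the abstract.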
