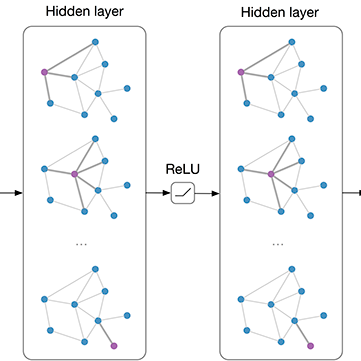

The graph convolution operator of the GCN model was originally motivated by a localized first-order approximation of spectral graph convolutions. This work takes a different view, establishing a *mathematical connection between graph convolution and graph-regularized PCA* (GPCA). Based on this connection, the GCN architecture, which is built by stacking graph convolution layers, is closely related to stacking GPCA. We empirically demonstrate that the *unsupervised* embeddings produced by GPCA, paired with a 1- or 2-layer MLP, achieve similar or even better performance than GCN on semi-supervised node classification tasks across five datasets, including the Open Graph Benchmark (https://ogb.stanford.edu/). This suggests that the prowess of GCN is driven by graph-based regularization. In addition, we extend GPCA to the (semi-)supervised setting and show that it is equivalent to GPCA on a graph extended with "ghost" edges between nodes of the same label. Finally, we capitalize on the discovered relationship to design an effective initialization strategy based on stacking GPCA, enabling GCN to converge faster and achieve robust performance with a large number of layers. Notably, the proposed initialization is general-purpose and applies to other GNNs.
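To make the GPCA idea concrete, here is a minimal NumPy sketch. The abstract does not state the objective, so the sketch assumes a common graph-regularized PCA formulation: minimize $\|X - ZW^\top\|_F^2 + \alpha\,\mathrm{tr}(Z^\top L Z)$ subject to $W^\top W = I$, where $L$ is the symmetric normalized Laplacian. Under that assumption the solution is closed-form: $W$ holds the top-$k$ eigenvectors of $X^\top (I + \alpha L)^{-1} X$ and $Z = (I + \alpha L)^{-1} X W$. The function name `gpca_embed`, the parameter `alpha`, and the choice of Laplacian are illustrative assumptions, and feature centering is omitted for brevity.

```python
import numpy as np

def gpca_embed(X, A, k, alpha=1.0):
    """Sketch of graph-regularized PCA (assumed formulation, not the authors' code).

    X: (n, d) node features, A: (n, n) symmetric adjacency matrix,
    k: embedding dimension, alpha: strength of the graph regularizer.
    Returns Z (n, k) embeddings and W (d, k) orthonormal projection.
    """
    n = A.shape[0]
    deg = A.sum(axis=1)
    # D^{-1/2}, with zero scaling for isolated nodes to avoid division by zero
    d_inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    # Symmetric normalized Laplacian L = I - D^{-1/2} A D^{-1/2}
    L = np.eye(n) - d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]
    # Graph-smoothed features S = (I + alpha L)^{-1} X
    S = np.linalg.solve(np.eye(n) + alpha * L, X)
    # W = top-k eigenvectors of X^T (I + alpha L)^{-1} X
    M = X.T @ S
    M = (M + M.T) / 2.0  # symmetrize against round-off
    _, eigvecs = np.linalg.eigh(M)  # eigenvalues in ascending order
    W = eigvecs[:, ::-1][:, :k]
    # Embeddings are the smoothed features projected onto W
    Z = S @ W
    return Z, W
```

With `alpha=0` the sketch reduces to ordinary (uncentered) PCA, `Z = X @ W`. One way to read the connection to graph convolution, under the same assumptions: for `alpha=1` the first-order approximation $(I + L)^{-1} \approx I - L$ equals the normalized adjacency, so $Z \approx D^{-1/2} A D^{-1/2} X W$ has the form of a linear graph convolution layer. The resulting `Z` could then be passed to a small MLP, as the abstract describes.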
