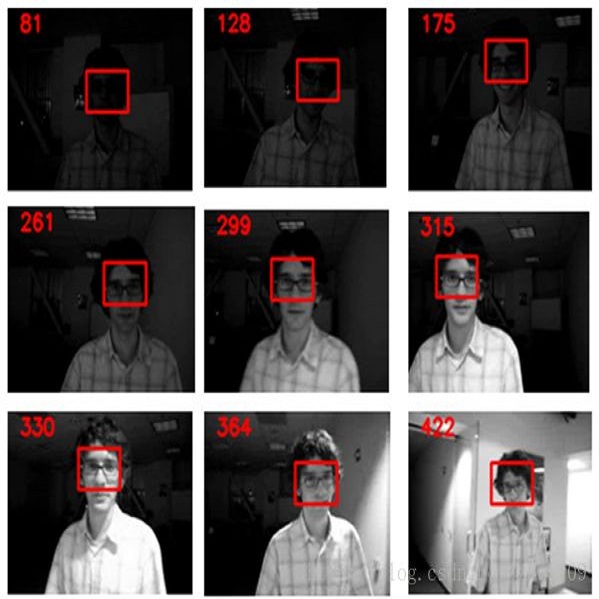

In this paper we propose an effective non-rigid object tracking method based on spatial-temporal consistent saliency detection. In contrast to most existing trackers, which use a bounding box to specify the tracked target, the proposed method extracts the accurate target region as its tracking output. This gives a better description of non-rigid objects while reducing background contamination of the target model. Our model has several further distinguishing features. First, a tailored deep fully convolutional neural network (TFCN) is developed to model the local saliency prior for a given image region; it not only produces pixel-wise outputs but also integrates semantic information. Second, a multi-scale multi-region mechanism is proposed to generate local region saliency maps that account for visual perception under different spatial layouts and scale variations. These saliency maps are then fused via a weighted entropy method, yielding a final discriminative saliency map. Finally, we present a non-rigid object tracking algorithm built on this saliency detection method: it uses a spatial-temporal consistent saliency map (STCSM) model to perform target-background classification and a simple fine-tuning scheme for online updating. Extensive experimental results show that the proposed algorithm achieves competitive performance against state-of-the-art methods in both saliency detection and visual tracking, and in particular outperforms other related trackers on non-rigid object tracking datasets.
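The abstract names a "weighted entropy method" for fusing the local region saliency maps but does not give its formula. Below is a minimal sketch, assuming that each local map has already been resized to a common image frame and that lower-entropy (more confident) maps should receive larger weights. The mean per-pixel binary entropy measure, the inverse-entropy weighting, and all function names are illustrative assumptions, not the authors' exact method.

```python
import math
from typing import List

# Assumption: a saliency map is an H x W grid of values in [0, 1],
# already resampled to a common resolution before fusion.
SaliencyMap = List[List[float]]


def mean_binary_entropy(smap: SaliencyMap) -> float:
    """Average per-pixel binary entropy in nats; low values mean a confident map."""
    total, count = 0.0, 0
    for row in smap:
        for p in row:
            p = min(max(p, 1e-12), 1.0 - 1e-12)  # clamp to avoid log(0)
            total += -p * math.log(p) - (1.0 - p) * math.log(1.0 - p)
            count += 1
    return total / count


def entropy_weights(maps: List[SaliencyMap], eps: float = 1e-6) -> List[float]:
    """Hypothetical scheme: weight each map by inverse entropy, normalized to sum to 1."""
    raw = [1.0 / (mean_binary_entropy(m) + eps) for m in maps]
    s = sum(raw)
    return [r / s for r in raw]


def fuse_saliency_maps(maps: List[SaliencyMap]) -> SaliencyMap:
    """Pixel-wise weighted sum of same-sized saliency maps."""
    h, w = len(maps[0]), len(maps[0][0])
    if any(len(m) != h or any(len(r) != w for r in m) for m in maps):
        raise ValueError("all saliency maps must share the same H x W")
    weights = entropy_weights(maps)
    return [
        [sum(wk * m[i][j] for wk, m in zip(weights, maps)) for j in range(w)]
        for i in range(h)
    ]


if __name__ == "__main__":
    confident = [[0.95, 0.05], [0.90, 0.10]]  # sharp foreground/background split
    ambiguous = [[0.50, 0.45], [0.55, 0.50]]  # values near 0.5: high entropy
    print(entropy_weights([confident, ambiguous]))
    print(fuse_saliency_maps([confident, ambiguous]))
```

With these toy inputs the confident map receives roughly 0.73 of the total weight, so the fused map keeps its foreground/background contrast. Because the weights are normalized and the inputs lie in [0, 1], the fused values also stay in [0, 1], which makes a simple threshold usable for extracting a target region.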
