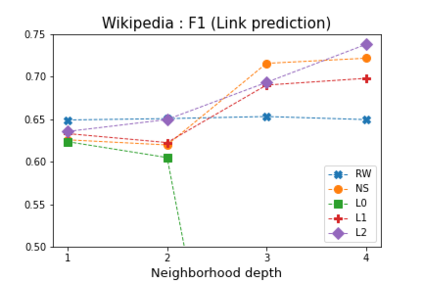

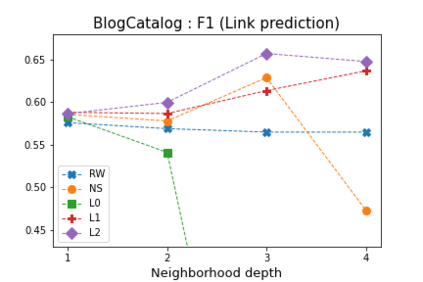

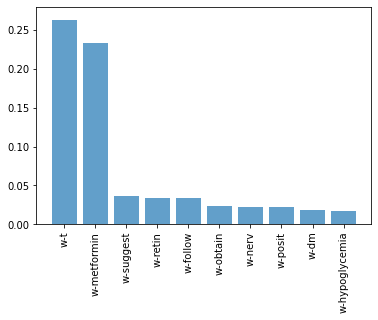

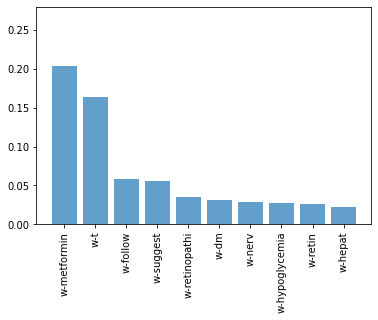

Representation learning for graphs enables the application of standard machine learning algorithms and data analysis tools to graph data. Replacing discrete unordered objects such as graph nodes with real-valued vectors is at the heart of many approaches to learning from graph data. Such vector representations, or embeddings, capture the discrete relationships in the original data by representing nodes as vectors in a high-dimensional space. In most applications, graphs model relationships between real-world objects, and nodes often carry valuable meta-information about those objects. Although such embeddings are a powerful machine learning tool, they cannot preserve these node attributes. We address this shortcoming and consider the problem of learning discrete node embeddings in which the coordinates of each node's vector representation are themselves graph nodes. This opens the door to designing interpretable machine learning algorithms for graphs, since all attributes originally present in the nodes are preserved. We present a framework for coordinated local graph neighborhood sampling (COLOGNE) in which each node is represented by a fixed number of graph nodes, together with their attributes. Individual samples are coordinated, so they preserve the similarity between node neighborhoods. We consider different notions of similarity and design a scalable algorithm for each. We show theoretical results for all proposed algorithms. Experiments on benchmark graphs evaluate the quality of the resulting embeddings and demonstrate how they can be used to train interpretable machine learning algorithms for graph data.
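To make the idea of coordinated neighborhood sampling concrete, the following is a minimal sketch and not the COLOGNE algorithms themselves. It assumes min-wise sampling as the coordination mechanism and Jaccard similarity between k-hop neighborhoods as the notion of similarity; both are illustrative choices. The function names `k_hop_neighborhood`, `coordinated_embeddings`, and `overlap`, and the parameters `hops`, `dim`, and `seed`, are hypothetical.

```python
import random
from collections import deque


def k_hop_neighborhood(adj, source, hops):
    """Return the set of nodes within `hops` edges of `source` (itself included)."""
    seen = {source}
    frontier = deque([(source, 0)])
    while frontier:
        node, dist = frontier.popleft()
        if dist == hops:
            continue  # do not expand beyond the hop limit
        for nbr in adj[node]:
            if nbr not in seen:
                seen.add(nbr)
                frontier.append((nbr, dist + 1))
    return seen


def coordinated_embeddings(adj, hops=2, dim=4, seed=0):
    """Assumed scheme: each coordinate is the lowest-ranked node in the neighborhood.

    The random ranks are shared by all nodes for a given coordinate, which is
    what makes the samples coordinated: similar neighborhoods tend to pick the
    same node.
    """
    rng = random.Random(seed)
    nodes = sorted(adj)
    neighborhoods = {u: k_hop_neighborhood(adj, u, hops) for u in nodes}
    embeddings = {u: [] for u in nodes}
    for _ in range(dim):
        rank = {u: rng.random() for u in nodes}  # one shared ranking per coordinate
        for u in nodes:
            embeddings[u].append(min(neighborhoods[u], key=rank.__getitem__))
    return embeddings


def overlap(a, b):
    """Fraction of coordinates on which two discrete embeddings agree."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    # Toy graph: a triangle (0, 1, 2) with a path 2 - 3 - 4 attached.
    adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3], 3: [2, 4], 4: [3]}
    emb = coordinated_embeddings(adj, hops=1, dim=8, seed=42)
    for node, vector in emb.items():
        print(node, vector)
    print("agreement of nodes 0 and 2:", overlap(emb[0], emb[2]))
```

Every coordinate is a node identifier, so any attribute stored on that node can be looked up directly from the embedding. Under the min-wise assumption, the expected fraction of agreeing coordinates between two nodes equals the Jaccard similarity of their neighborhoods, which is one way in which coordinated samples can preserve neighborhood similarity.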
