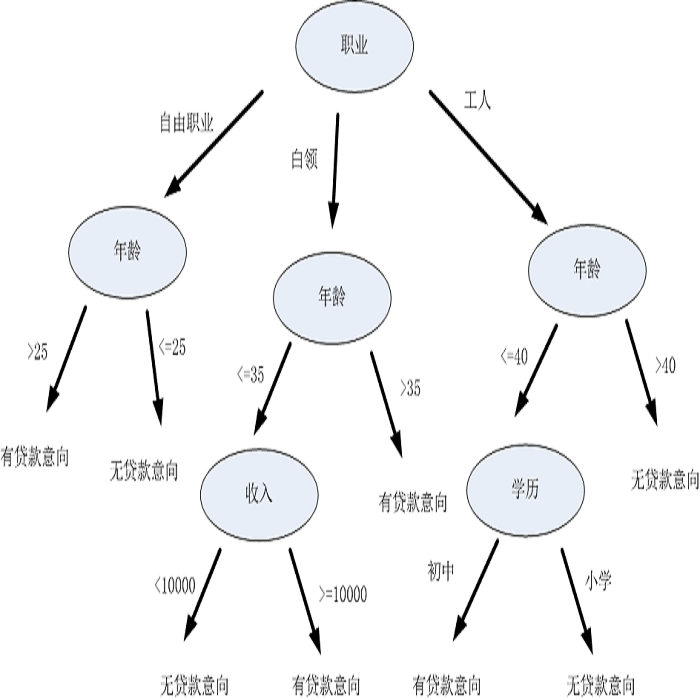

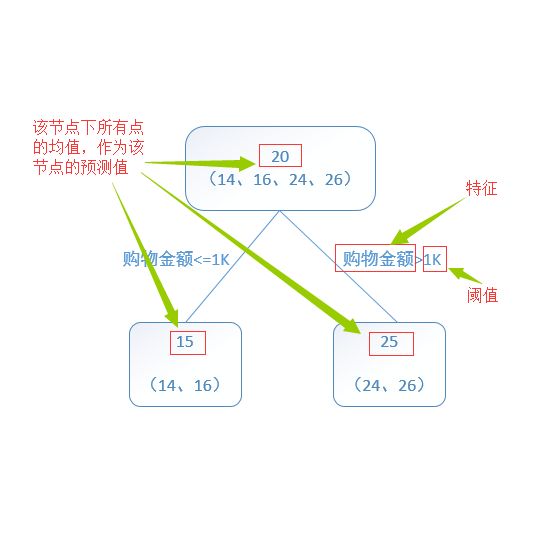

Federated learning (FL) is an emerging paradigm that enables multiple organizations to jointly train a model without revealing their private data to each other. This paper studies *vertical* federated learning, which addresses scenarios where (i) the collaborating organizations hold data on the same set of users but with disjoint features, and (ii) only one organization holds the labels. We propose Pivot, a novel solution for privacy-preserving vertical decision tree training and prediction, which ensures that no intermediate information is disclosed other than what the clients have agreed to release (i.e., the final tree model and the prediction output). Pivot does not rely on any trusted third party and provides protection against a semi-honest adversary that may compromise $m-1$ out of $m$ clients. We further identify two privacy leakages that arise when the trained decision tree model is released in plaintext, and propose an enhanced protocol to mitigate them. The proposed solution can also be extended to tree ensemble models, e.g., random forest (RF) and gradient boosting decision tree (GBDT), by treating single decision trees as building blocks. Theoretical and experimental analyses suggest that Pivot is efficient for the privacy achieved.
