[Paopao Roundup] CVPR 2019 SLAM Paper List

CVPR 2019 is held in the United States in June, and we have collected and categorized its SLAM-related papers.

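The papers fall into the following categories:

1. Matching

2. Matching - Deep Learning

3. 3D Reconstruction

4. 3D Reconstruction - Deep Learning

5. Localization

6. Localization - Deep Learning

7. Tracking

8. Tracking - Deep Learning

9. Depth Estimation

10. Depth Estimation - Deep Learning

11. Calibration - Deep Learning

12. Object Detection

13. Object Detection - Deep Learning

14. Autonomous Driving

15. Other

The papers in each category are listed below: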

Matching:

SDRSAC: Semidefinite-Based Randomized Approach for Robust Point Cloud Registration without Correspondences

NM-Net: Mining Reliable Neighbors for Robust Feature Correspondences

The Perfect Match: 3D Point Cloud Matching with Smoothed Densities

Matching - Deep Learning:

GA-Net: Guided Aggregation Net for End-to-end Stereo Matching

Guided Stereo Matching

Multi-Level Context Ultra-Aggregation for Stereo Matching

PointNetLK: Robust & Efficient Point Cloud Registration using PointNet

3D Reconstruction:

Coordinate-Free Carlsson-Weinshall Duality and Relative Multi-View Geometry

PlaneRCNN: 3D Plane Detection and Reconstruction from a Single View

Single-Image Piece-wise Planar 3D Reconstruction via Associative Embedding

GPSfM: Global Projective SFM Using Algebraic Constraints on Multi-View Fundamental Matrices

Privacy Preserving Image-based Localization

Visual Localization by Learning Objects-of-Interest Dense Match Regression

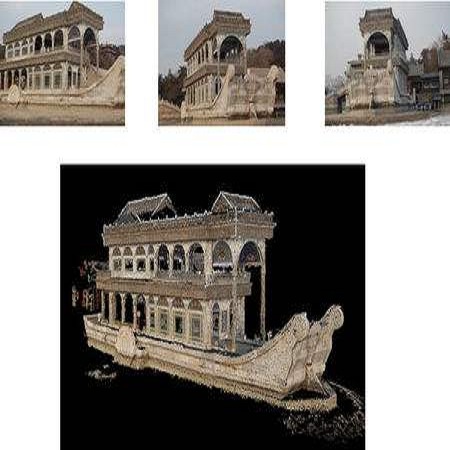

Robust Point Cloud Reconstruction of Large-Scale Outdoor Scenes

SceneCode: Monocular Dense Semantic Reconstruction using Learned Encoded Scene Representations

3D Reconstruction - Deep Learning:

Revealing Scenes by Inverting Structure from Motion Reconstructions

Deep Reinforcement Learning of Volume-guided Progressive View Inpainting for 3D Point Scene Completion from a Single Depth Image

What Do Single-view 3D Reconstruction Networks Learn?

Learning View Priors for Single-view 3D Reconstruction

Localization:

PVNet: Pixel-wise Voting Network for 6DoF Pose Estimation

Hybrid Scene Compression for Visual Localization

The Alignment of the Spheres: Globally-Optimal Spherical Mixture Alignment for Camera Pose Estimation

Localization - Deep Learning:

Normalized Object Coordinate Space for Category-Level 6D Object Pose and Size Estimation

Extreme Relative Pose Estimation for RGB-D Scans via Scene Completion

Understanding the Limitations of CNN-based Absolute Camera Pose Regression

DeepLiDAR: Deep Surface Normal Guided Depth Prediction for Outdoor Scene from Sparse LiDAR Data and Single Color Image

DenseFusion: 6D Object Pose Estimation by Iterative Dense Fusion

Segmentation-driven 6D Object Pose Estimation

PointFlowNet: Learning Representations for Rigid Motion Estimation from Point Clouds

From Coarse to Fine: Robust Hierarchical Localization at Large Scale

Tracking:

VITAMIN-E: VIsual Tracking And MappINg with Extremely Dense Feature Points

Motion estimation of non-holonomic ground vehicles from a single feature correspondence measured over n views

Tracking - Deep Learning:

Unsupervised Event-based Learning of Optical Flow, Depth, and Egomotion

HPLFlowNet: Hierarchical Permutohedral Lattice FlowNet for Scene Flow Estimation on Large-scale Point Clouds

SIGNet: Semantic Instance Aided Unsupervised 3D Geometry Perception

Depth Estimation:

Recurrent MVSNet for High-resolution Multi-view Stereo Depth Inference

Learning Single-Image Depth from Videos using Quality Assessment Networks

Depth from a polarisation + RGB stereo pair

Monocular Depth Estimation Using Relative Depth Maps

Geometry-Aware Symmetric Domain Adaptation for Monocular Depth Estimation

CAM-Convs: Camera-Aware Multi-Scale Convolutions for Single-View Depth Prediction

Depth Estimation - Deep Learning:

Recurrent Neural Network for (Un-)supervised Learning of Monocular Video Visual Odometry and Depth

Connecting the Dots: Learning Representations for Active Monocular Depth Estimation

Learning Non-Volumetric Depth Fusion using Successive Reprojections

Learning monocular depth estimation infusing traditional stereo knowledge

Calibration - Deep Learning:

Deep Single Image Camera Calibration with Radial Distortion

Object Detection:

PointRCNN: 3D Object Proposal Generation and Detection from Point Cloud

Object Detection - Deep Learning:

Deep Relational Reasoning Network for Monocular 3D Object Detection

ROI-10D: Monocular Lifting of 2D Detection to 6D Pose and Metric Shape

Autonomous Driving:

DrivingStereo: A Large-Scale Dataset for Stereo Matching in Autonomous Driving Scenarios

GS3D: An Efficient 3D Object Detection Framework for Autonomous Driving

ApolloCar3D: A Large 3D Car Instance Understanding Benchmark for Autonomous Driving

Stereo R-CNN based 3D Object Detection for Autonomous Driving

Pseudo-LiDAR from Visual Depth Estimation: Bridging the Gap in 3D Object Detection for Autonomous Driving

Rules of the Road: Predicting Driving Behavior with a Convolutional Model of Semantic Interactions

Other:

BAD SLAM: Bundle Adjusted Direct RGB-D SLAM

Modeling Local Geometric Structure of 3D Point Clouds using Geo-CNN

Noise-Aware Unsupervised Deep Lidar-Stereo Fusion

3D Motion Decomposition for RGBD Future Dynamic Scene Synthesis

RGBD Based Dimensional Decomposition Residual Network for 3D Semantic Scene Completion

D2-Net: A Trainable CNN for Joint Description and Detection of Local Features

LO-Net: Deep Real-time Lidar Odometry

Octree guided CNN with Spherical Kernels for 3D Point Clouds

DeepMapping: Unsupervised Map Estimation From Multiple Point Clouds

FlowNet3D: Learning Scene Flow in 3D Point Clouds

If you spot any omissions or errors, corrections are welcome.

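Welcome to the Paopao forum, where experts can answer any question you have about SLAM.

Whether you have a question to ask or want to browse threads and answer other people's questions, the Paopao forum welcomes you!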

Paopao website: www.paopaorobot.org

Paopao forum: http://paopaorobot.org/bbs/

For business cooperation and reprint requests, please contact liufuqiang_robot@hotmail.com