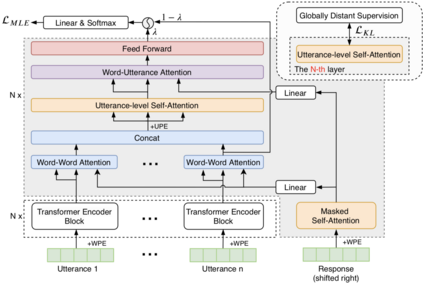

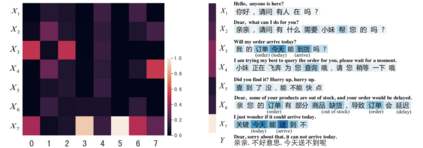

Open-domain multi-turn conversations have three main features: a hierarchical semantic structure, redundant information, and long-term dependencies. Given these features, selecting the relevant context is a challenging step in multi-turn dialogue generation. However, existing methods cannot identify useful words and utterances that lie far from the response. Moreover, previous work performs context selection based only on a single decoder state. This approach lacks global guidance and can lead the model to focus on irrelevant or unnecessary information. In this paper, we propose a novel model that combines a hierarchical self-attention mechanism with distant supervision. The model detects relevant words and utterances at both short and long distances, and it also identifies related information globally during decoding. Automatic and human evaluations on two public datasets show that our model significantly outperforms other baselines in fluency, coherence, and informativeness.
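To make the hierarchical idea concrete, here is a minimal Python sketch. It is not the authors' model. It rests on several simplifying assumptions: identity query/key/value projections, mean pooling to turn each utterance's word states into one utterance vector, and a hypothetical word-overlap (Jaccard) heuristic standing in for the distant-supervision signal. The names `hierarchical_encode`, `utterance_relevance` and `overlap_labels`, and the `threshold` value, are all illustrative.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors: List[Vector]) -> List[Vector]:
    """Scaled dot-product self-attention; Q, K, V projections are identity (simplification)."""
    d = len(vectors[0])
    out = []
    for q in vectors:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in vectors])
        out.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return out


def mean_pool(vectors: List[Vector]) -> Vector:
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def hierarchical_encode(context: List[List[Vector]]) -> List[Vector]:
    """context: list of utterances, each a list of word vectors of equal dimension."""
    # Word level: words attend to each other within one utterance, then are pooled.
    utterance_vecs = [mean_pool(self_attention(words)) for words in context]
    # Utterance level: every utterance attends to every other, however far apart.
    return self_attention(utterance_vecs)


def utterance_relevance(states: List[Vector], query: Vector) -> List[float]:
    """Attention distribution of a (hypothetical) global query vector over utterances."""
    d = len(query)
    return softmax([dot(s, query) / math.sqrt(d) for s in states])


def overlap_labels(context_tokens: List[List[str]], response_tokens: List[str],
                   threshold: float = 0.2) -> List[int]:
    """Hypothetical distant-supervision heuristic: label an utterance relevant (1)
    if its Jaccard word overlap with the gold response reaches `threshold`."""
    resp = set(response_tokens)
    labels = []
    for utt in context_tokens:
        u = set(utt)
        union = u | resp
        jaccard = len(u & resp) / len(union) if union else 0.0
        labels.append(1 if jaccard >= threshold else 0)
    return labels


if __name__ == "__main__":
    ctx = [[[1.0, 0.0], [0.0, 1.0]], [[0.5, 0.5]], [[1.0, 1.0], [0.2, 0.1]]]
    states = hierarchical_encode(ctx)
    print(utterance_relevance(states, [1.0, 0.0]))
    print(overlap_labels([["hi", "there"], ["do", "you", "like", "jazz"],
                          ["i", "love", "jazz", "music"]],
                         ["jazz", "music", "is", "great"]))  # [0, 0, 1]
```

In a trained model, pseudo-labels such as those from `overlap_labels` could serve as an auxiliary target for the utterance-level attention weights. That auxiliary target is one plausible reading of how distant supervision supplies global guidance. The exact signal and loss used in the paper are not given in this abstract.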