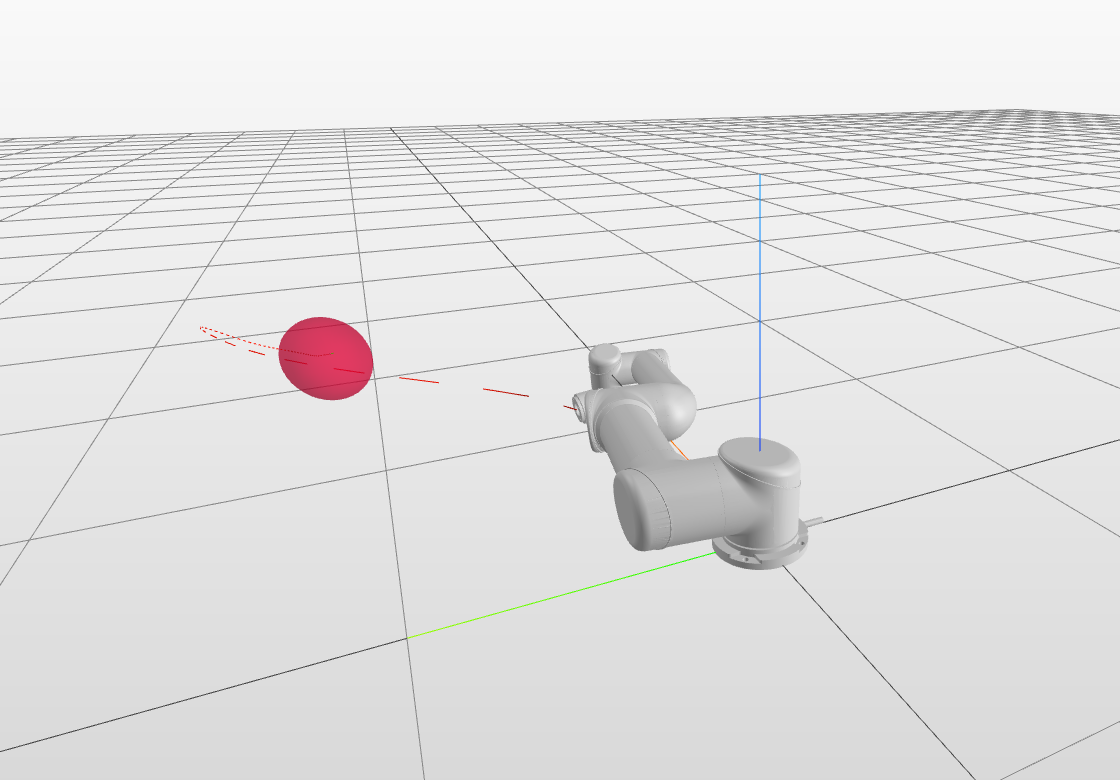

Reinforcement learning (RL) and trajectory optimization (TO) have strongly complementary strengths. On the one hand, RL approaches can learn global control policies directly from data, but they generally require large numbers of samples to converge to feasible policies. On the other hand, TO methods can exploit gradient information extracted from simulators to converge quickly to a locally optimal control trajectory, which is valid only in the vicinity of the solution. Over the past decade, several approaches have sought to combine the two classes of methods in order to obtain the best of both worlds. Continuing this line of research, we propose several improvements to these approaches that learn global control policies more quickly, notably by leveraging sensitivity information from TO methods via Sobolev learning, and by using augmented Lagrangian techniques to enforce consensus between TO and policy learning. We evaluate the benefits of these improvements on various classical robotics tasks through comparison with existing approaches in the literature.
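
To make the Sobolev learning idea concrete, the following is a minimal sketch, not the authors' implementation. It assumes a linear policy `pi(x) = W x + b` and a dataset of states `X`, TO controls `U`, and TO sensitivities `K` (the derivatives of the control with respect to the state). The function names, the weight `lam`, and the plain gradient-descent loop are all illustrative assumptions. The loss penalizes both the mismatch in control values and the mismatch between the policy Jacobian and the sensitivities.

```python
import numpy as np

def sobolev_loss_and_grads(W, b, X, U, K, lam=1.0):
    """Value-plus-derivative matching loss for a linear policy pi(x) = W x + b.

    X: (N, n) states, U: (N, m) target controls,
    K: (N, m, n) target sensitivities du/dx (assumed to come from a TO solver).
    """
    N = X.shape[0]
    resid = X @ W.T + b - U            # (N, m) control mismatch
    jac_resid = W[None, :, :] - K      # (N, m, n) Jacobian mismatch (dpi/dx = W)
    loss = (resid ** 2).sum() / N + lam * (jac_resid ** 2).sum() / N
    grad_W = 2.0 * resid.T @ X / N + 2.0 * lam * jac_resid.sum(axis=0) / N
    grad_b = 2.0 * resid.sum(axis=0) / N
    return loss, grad_W, grad_b

def fit_sobolev(X, U, K, lam=1.0, lr=0.05, steps=2000):
    """Fit W and b by plain gradient descent on the Sobolev loss (illustrative only)."""
    m, n = U.shape[1], X.shape[1]
    W = np.zeros((m, n))
    b = np.zeros(m)
    for _ in range(steps):
        _, grad_W, grad_b = sobolev_loss_and_grads(W, b, X, U, K, lam)
        W -= lr * grad_W
        b -= lr * grad_b
    return W, b
```

With `lam=0` this reduces to ordinary least-squares regression on the controls alone. The derivative term is what lets each sample constrain the policy in a neighborhood of its state rather than only at the state itself.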
