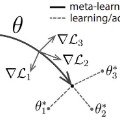

Continual learning studies agents that learn from streams of tasks, adapting to new tasks without forgetting previous ones. Two recent continual-learning scenarios have opened new avenues of research. In meta-continual learning, the model is pre-trained to minimize catastrophic forgetting of previous tasks. In continual-meta learning, the aim is to train agents to remember previous tasks faster through adaptation. In their original formulations, both scenarios have limitations. We stand on their shoulders to propose a more general scenario, OSAKA, in which an agent must quickly solve new (out-of-distribution) tasks while also remembering previous tasks quickly. We show that current continual learning, meta-learning, meta-continual learning, and continual-meta learning techniques fail in this new scenario. We propose Continual-MAML, an online extension of the popular MAML algorithm, as a strong baseline for this scenario. We empirically show that Continual-MAML is better suited to the new scenario than the aforementioned methodologies, as well as standard continual learning and meta-learning approaches.
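The abstract does not spell out how Continual-MAML works, so the sketch below only illustrates the standard (offline) MAML update it extends. It is not the authors' method. All specifics are hypothetical assumptions chosen for illustration: a scalar linear model `y_hat = w * x`, a toy task family `y = a * x` with slope `a` drawn from [1, 3], one inner gradient step with step size `alpha`, and an outer step size `beta`. Because the model is linear, the second-order MAML term reduces to the constant factor `1 - alpha * L''(w)`, so the exact meta-gradient fits in a few lines.

```python
import random

# Minimal sketch of standard MAML (not Continual-MAML) on a hypothetical toy problem.


def mse(w, xs, ys):
    """Mean squared error of the scalar linear model y_hat = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_grad(w, xs, ys):
    """First derivative of mse with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)


def mse_curvature(xs):
    """Second derivative of mse with respect to w (constant for this model)."""
    return sum(2 * x * x for x in xs) / len(xs)


def adapt(w, xs, ys, alpha):
    """Inner loop: one gradient step on a task's support set."""
    return w - alpha * mse_grad(w, xs, ys)


def meta_loss(w, tasks, alpha):
    """Average query loss after adapting on each task's support set."""
    return sum(mse(adapt(w, xs_s, ys_s, alpha), xs_q, ys_q)
               for (xs_s, ys_s), (xs_q, ys_q) in tasks) / len(tasks)


def meta_gradient(w, tasks, alpha):
    """Exact derivative of meta_loss w.r.t. w, differentiating through adapt."""
    total = 0.0
    for (xs_s, ys_s), (xs_q, ys_q) in tasks:
        w_adapted = adapt(w, xs_s, ys_s, alpha)
        # Chain rule: d(w_adapted)/dw = 1 - alpha * (second derivative of support loss).
        total += mse_grad(w_adapted, xs_q, ys_q) * (1 - alpha * mse_curvature(xs_s))
    return total / len(tasks)


def sample_task(rng, n=10):
    """Hypothetical task family: y = a * x with a task-specific slope a in [1, 3]."""
    a = rng.uniform(1.0, 3.0)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(2 * n)]
    ys = [a * x for x in xs]
    return (xs[:n], ys[:n]), (xs[n:], ys[n:])  # (support set, query set)


def train_maml(steps=500, tasks_per_step=4, alpha=0.1, beta=0.1, seed=0):
    """Outer loop: meta-gradient descent on the shared initialization w."""
    rng = random.Random(seed)
    w = -5.0  # deliberately poor starting point
    for _ in range(steps):
        tasks = [sample_task(rng) for _ in range(tasks_per_step)]
        w -= beta * meta_gradient(w, tasks, alpha)
    return w
```

In this toy setup, `train_maml()` should leave `w` near the middle of the slope range, an initialization from which a single `adapt` step moves toward any sampled task's slope. Per the abstract, Continual-MAML extends this kind of update to the online setting; its specifics are not covered by this sketch.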


