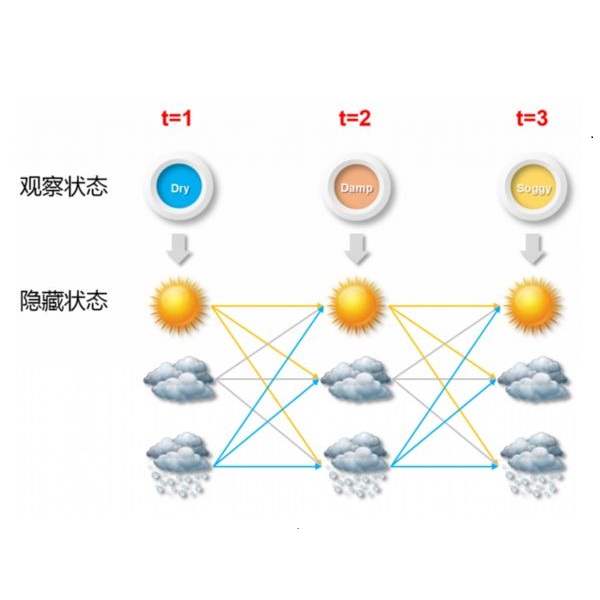

We study the frontier between learnable and unlearnable hidden Markov models (HMMs). HMMs are flexible tools for clustering dependent data coming from unknown populations. The model parameters are known to be identifiable as soon as the clusters are distinct and the hidden chain is ergodic with a full rank transition matrix. In the limit as any one of these conditions fails, it becomes impossible to identify parameters. For a chain with two hidden states we prove nonasymptotic minimax upper and lower bounds, matching up to constants, which exhibit thresholds at which the parameters become learnable.
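
As a rough illustration of the full-rank and ergodicity conditions in the two-state case, the transition matrix can be written with two parameters. The symbols $p$ and $q$ below are notation assumed here for illustration and are not necessarily those used in the paper:

$$
Q = \begin{pmatrix} 1-p & p \\ q & 1-q \end{pmatrix},
\qquad
\det Q = (1-p)(1-q) - pq = 1 - p - q .
$$

Under this sketch, $Q$ has full rank exactly when $p + q \neq 1$. When $p + q = 1$ the two rows coincide, so the hidden states are i.i.d. and the observations reduce to an i.i.d. mixture with no dependence left to exploit. If $0 < p, q < 1$, the chain is irreducible and aperiodic, hence ergodic.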
