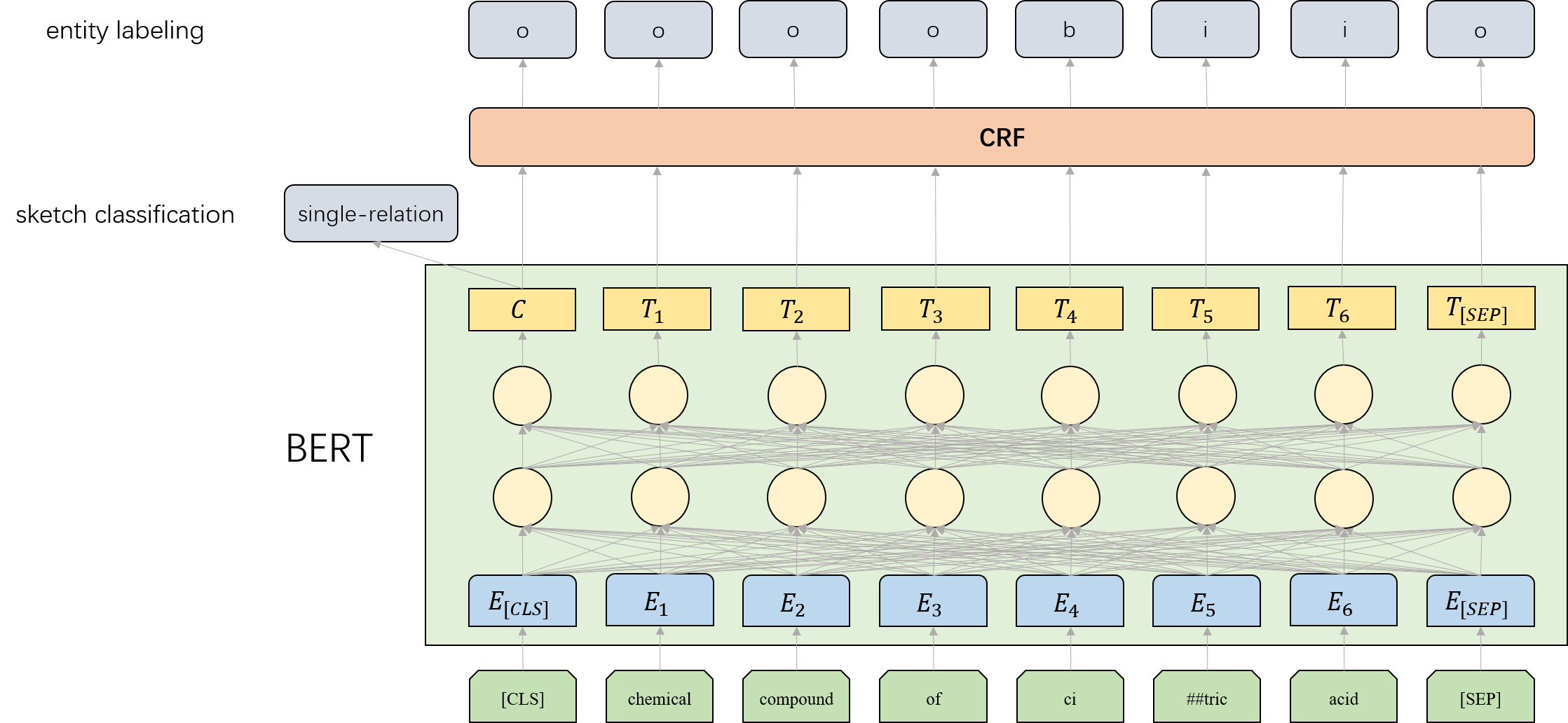

This paper presents our semantic parsing system for the evaluation task of open domain semantic parsing in NLPCC 2019. Many previous works formulate semantic parsing as a sequence-to-sequence(seq2seq) problem. Instead, we treat the task as a sketch-based problem in a coarse-to-fine(coarse2fine) fashion. The sketch is a high-level structure of the logical form exclusive of low-level details such as entities and predicates. In this way, we are able to optimize each part individually. Specifically, we decompose the process into three stages: the sketch classification determines the high-level structure while the entity labeling and the matching network fill in missing details. Moreover, we adopt the seq2seq method to evaluate logical form candidates from an overall perspective. The co-occurrence relationship between predicates and entities contribute to the reranking as well. Our submitted system achieves the exactly matching accuracy of 82.53% on full test set and 47.83% on hard test subset, which is the 3rd place in NLPCC 2019 Shared Task 2. After optimizations for parameters, network structure and sampling, the accuracy reaches 84.47% on full test set and 63.08% on hard test subset(Our code and data are available at https://github.com/zechagl/NLPCC2019-Semantic-Parsing).

翻译:本文展示了我们用于 NLPCC 2019 中公开域内语义解析评估任务的语义解析系统。 许多先前的作品将语义解解析作为序列到序列的问题。 相反, 我们用粗略到直线( coarse2fine) 的方式将此任务作为草图问题处理。 草图是一个逻辑形式的高层次结构, 不包括实体和上游等低层次细节。 这样, 我们就能将每个部分都优化。 具体地说, 我们将这一过程分解为三个阶段: 素描分类决定高层次结构, 而实体标签和匹配网络则以缺失的细节填充。 此外, 我们采用后传方法从整体角度来评估逻辑形式的候选人。 上游和实体之间的共见关系也有助于重新排序。 我们提交的系统在完整测试集( 82.53%) 和硬测试子集( 硬测试集为 NLPCC 第三位) 。 在2019 的硬测试网络结构中, 高级测试系统在 2019 的精确度中, 和 常规测试组中, 的精确度为2019 的常规 。