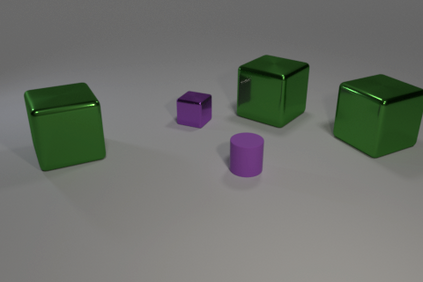

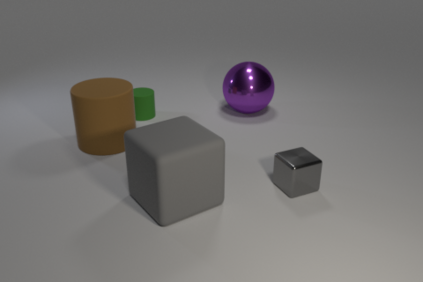

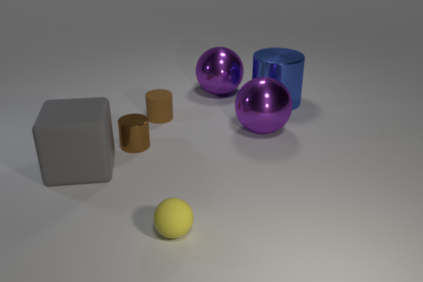

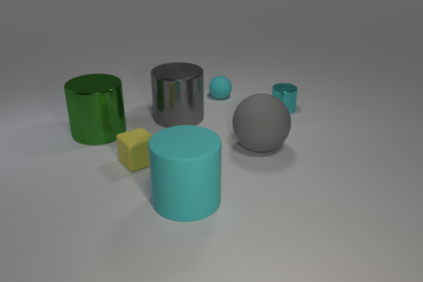

We marry two powerful ideas: deep representation learning for visual recognition and language understanding, and symbolic program execution for reasoning. Our neural-symbolic visual question answering (NS-VQA) system first recovers a structural scene representation from the image and a program trace from the question. It then executes the program on the scene representation to obtain an answer. Incorporating symbolic structure as prior knowledge offers three unique advantages. First, executing programs on a symbolic space is more robust to long program traces; our model can solve complex reasoning tasks better, achieving an accuracy of 99.8% on the CLEVR dataset. Second, the model is more data- and memory-efficient: it performs well after learning on a small number of training data; it can also encode an image into a compact representation, requiring less storage than existing methods for offline question answering. Third, symbolic program execution offers full transparency to the reasoning process; we are thus able to interpret and diagnose each execution step.

翻译:我们结合了两个强有力的理念:为视觉识别和语言理解进行深层代表性学习,以及为推理而执行象征性的方案。我们的神经-声波直观回答(NS-VQA)系统首先从图像中恢复结构性场景表达,然后从问题中找到一个跟踪程序。然后,在现场演示中执行程序以获得答案。将象征性结构作为先前的知识提供了三个独特的优势。首先,在象征性空间上执行方案对长期程序痕迹更为有力;我们的模型可以更好地解决复杂的推理任务,在CLEVR数据集中实现99.8%的精确度。第二,模型的数据和记忆效率更高:它在学习少量培训数据后表现良好;它还可以将图像编码成一个缩略图,比现有的离线问题解答方法要少一些存储。第三,象征性程序执行为推理过程提供了充分的透明度;因此,我们能够解释和诊断每个执行步骤。