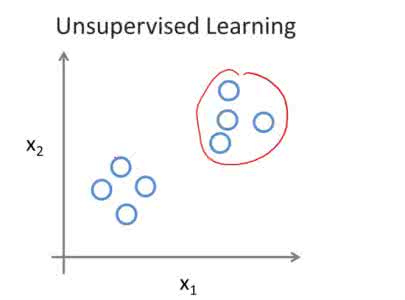

Integrating sensory inputs with prior beliefs drawn from past experience is a common and fundamental characteristic of unsupervised learning in both brains and artificial neural networks. However, the quantitative role of prior knowledge in unsupervised learning remains unclear, which hinders a scientific understanding of unsupervised learning. Here, we propose a statistical physics model of unsupervised learning with prior knowledge, revealing that the sensory inputs drive a series of continuous phase transitions related to spontaneous intrinsic-symmetry breaking. The intrinsic symmetry includes both reverse symmetry and permutation symmetry, which are commonly observed in most artificial neural networks. Compared with the prior-free scenario, the prior more strongly reduces the minimal data size that triggers the reverse-symmetry-breaking transition; moreover, the prior merges, rather than separates, the permutation-symmetry-breaking phases. We claim that the prior can be learned from data samples, which in physics corresponds to a two-parameter Nishimori plane constraint. This work thus reveals mechanisms underlying the influence of the prior on unsupervised learning.
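The two intrinsic symmetries named in the abstract can be illustrated numerically. The sketch below is only an illustration under an assumption that the abstract does not state: it assumes a restricted Boltzmann machine with two ±1 hidden units, whose unnormalized log-marginal over visible units is a sum of log-cosh terms. The names `log_marginal`, `xi`, `sigma`, and `beta` are hypothetical and chosen for this example only.

```python
import numpy as np

def log_marginal(sigma, xi, beta=1.0):
    """Unnormalized log-probability of a visible configuration `sigma`
    for an assumed RBM with +/-1 hidden units and weight rows `xi`.
    Summing out each hidden unit gives a 2*cosh factor per row of `xi`."""
    n = sigma.shape[0]
    fields = beta * (xi @ sigma) / np.sqrt(n)  # one local field per hidden unit
    return np.sum(np.log(2.0 * np.cosh(fields)))

rng = np.random.default_rng(0)
n = 20
xi = rng.choice([-1.0, 1.0], size=(2, n))   # two hidden units (assumption)
sigma = rng.choice([-1.0, 1.0], size=n)     # one visible configuration

base = log_marginal(sigma, xi)

# Reverse symmetry: flipping the sign of one weight vector leaves the value
# unchanged, because cosh is an even function.
reversed_first = log_marginal(sigma, xi * np.array([[-1.0], [1.0]]))

# Permutation symmetry: swapping the two hidden units leaves the value
# unchanged, because the per-unit terms are simply summed.
swapped = log_marginal(sigma, xi[::-1])

print(np.isclose(base, reversed_first), np.isclose(base, swapped))
```

Because the data alone cannot distinguish these equivalent weight configurations in such a model, a learner has to break the symmetries spontaneously, which is the kind of transition the abstract refers to.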
