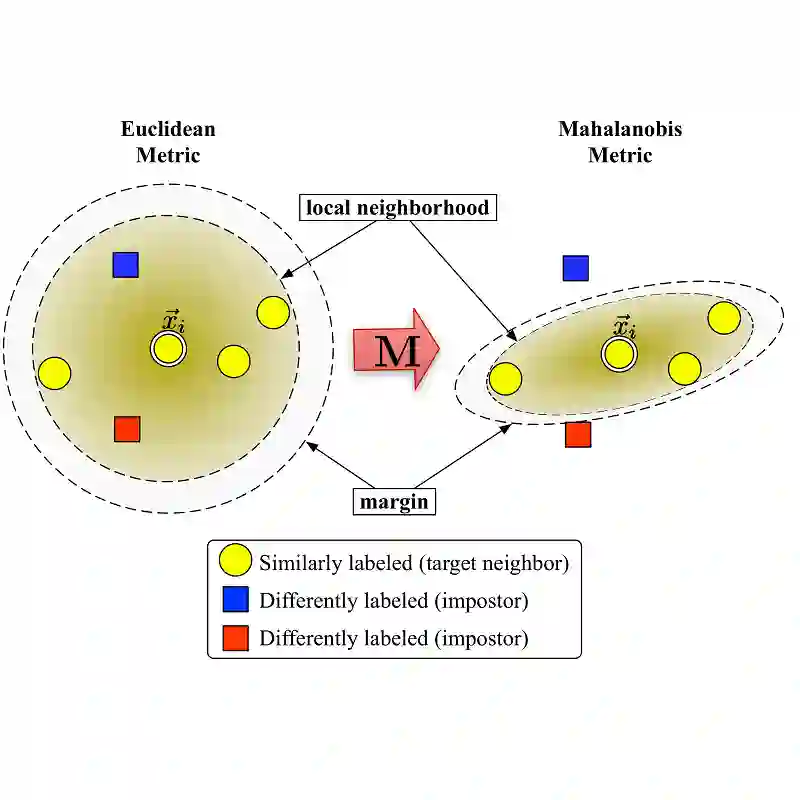

Similarity/distance measures play a key role in many machine learning, pattern recognition, and data mining algorithms, which has led to the emergence of the field of metric learning. Many metric learning algorithms learn a global distance function from data that satisfies the constraints of the problem. However, in many real-world datasets, where the discriminative power of features varies across regions of the input space, a global metric is often unable to capture the complexity of the task. To address this challenge, local metric learning methods have been proposed that learn multiple metrics across the different regions of the input space. These methods offer high flexibility and the ability to learn a nonlinear mapping, but typically at the expense of higher time requirements and a greater risk of overfitting. To overcome these challenges, this research presents an online multiple metric learning framework. Each metric in the proposed framework is composed of a global and a local component that are learned simultaneously. Adding a global component to a local metric efficiently reduces overfitting. The proposed framework also scales with both the sample size and the dimensionality of the input data. To the best of our knowledge, this is the first local online similarity/distance learning framework based on PA (Passive/Aggressive). In addition, to scale with the dimensionality of the input data, DRP (Dual Random Projection) is extended to local online learning in the present work. This enables our methods to run efficiently on high-dimensional datasets while maintaining their predictive performance. The proposed framework provides a straightforward local extension to any global online similarity/distance learning algorithm based on PA.
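To make the idea of a metric with a global and a local component more concrete, the following is a minimal sketch and not the authors' algorithm. It assumes a bilinear similarity $S(x, y) = x^\top (W_g + W_k)\, y$, a triplet hinge loss with margin 1, a PA-I style step size, and regions defined by the nearest of a set of fixed centers. The class name `LocalGlobalPA`, the `centers` argument, and the region assignment rule are all hypothetical choices made only for illustration.

```python
import numpy as np


class LocalGlobalPA:
    """Illustrative sketch (assumption, not the paper's method):
    one shared global matrix plus one local matrix per region,
    updated together with a Passive/Aggressive (PA-I) step."""

    def __init__(self, dim, centers, C=1.0):
        # Region centers are assumed to be given in advance (hypothetical).
        self.centers = np.asarray(centers, dtype=float)
        self.W_global = np.eye(dim)
        self.W_local = np.zeros((len(self.centers), dim, dim))
        self.C = C  # aggressiveness parameter of PA-I

    def region(self, x):
        # Assign x to the region of its nearest center.
        return int(np.argmin(np.linalg.norm(self.centers - x, axis=1)))

    def similarity(self, x, y):
        # Metric for x's region = global component + local component.
        W = self.W_global + self.W_local[self.region(x)]
        return float(x @ W @ y)

    def loss(self, x, x_pos, x_neg):
        # Triplet hinge loss: x should be more similar to x_pos than to x_neg.
        return max(0.0, 1.0 - self.similarity(x, x_pos) + self.similarity(x, x_neg))

    def update(self, x, x_pos, x_neg):
        loss = self.loss(x, x_pos, x_neg)
        if loss == 0.0:
            return 0.0  # passive: the constraint is already satisfied
        # Direction that raises S(x, x_pos) - S(x, x_neg) for either component.
        V = np.outer(x, x_pos - x_neg)
        # Both components move along V, so the joint squared norm is 2 * ||V||_F^2.
        sq_norm = 2.0 * np.sum(V * V)
        if sq_norm == 0.0:
            return loss
        tau = min(self.C, loss / sq_norm)  # aggressive step, capped by C
        self.W_global += tau * V
        self.W_local[self.region(x)] += tau * V
        return loss
```

Under these assumptions, a single update with a sufficiently large `C` drives the hinge loss on the current triplet to zero, while the local matrices of other regions remain untouched. The shared global matrix is updated by every triplet, which is one plausible way a global component can regularize the local ones.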
