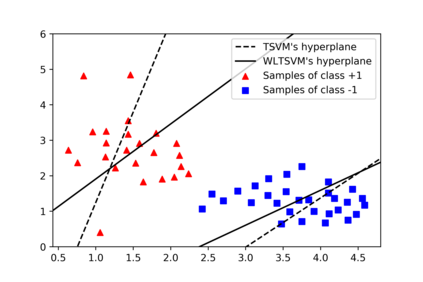

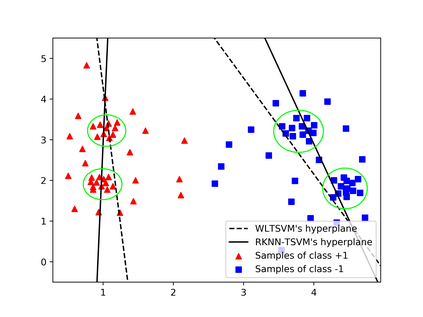

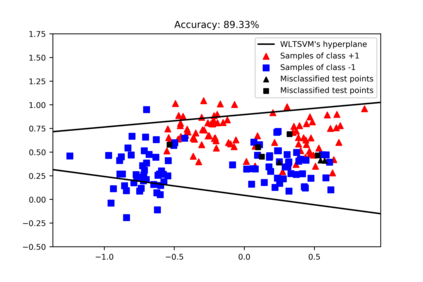

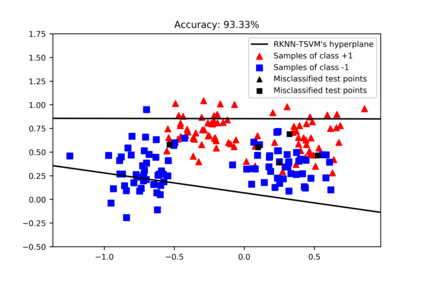

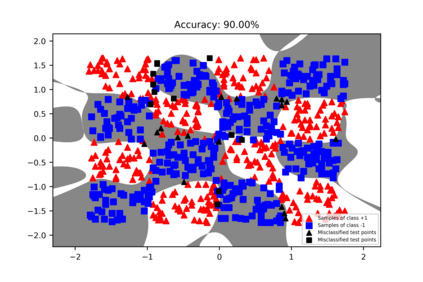

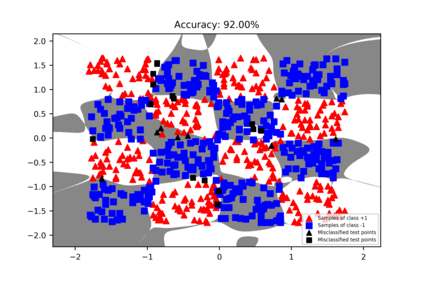

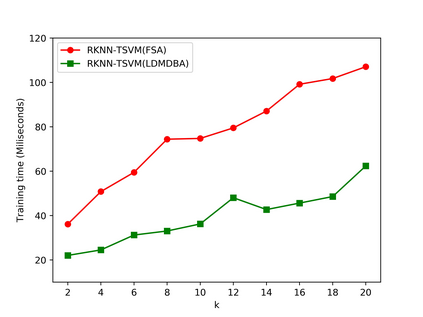

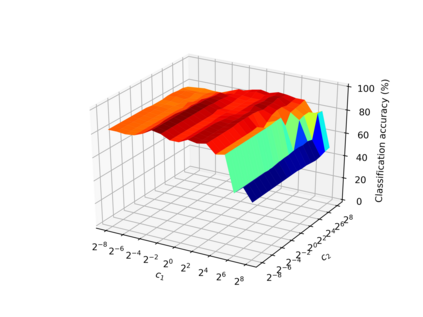

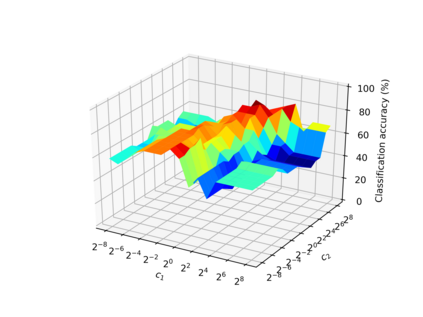

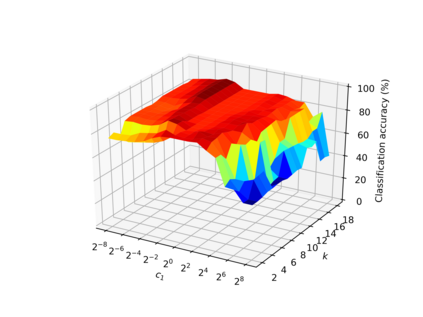

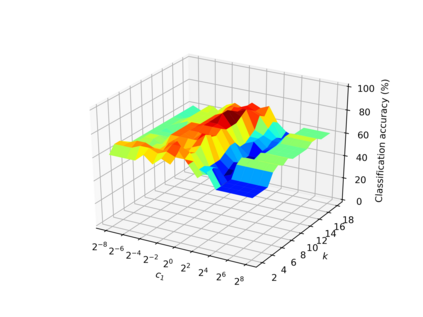

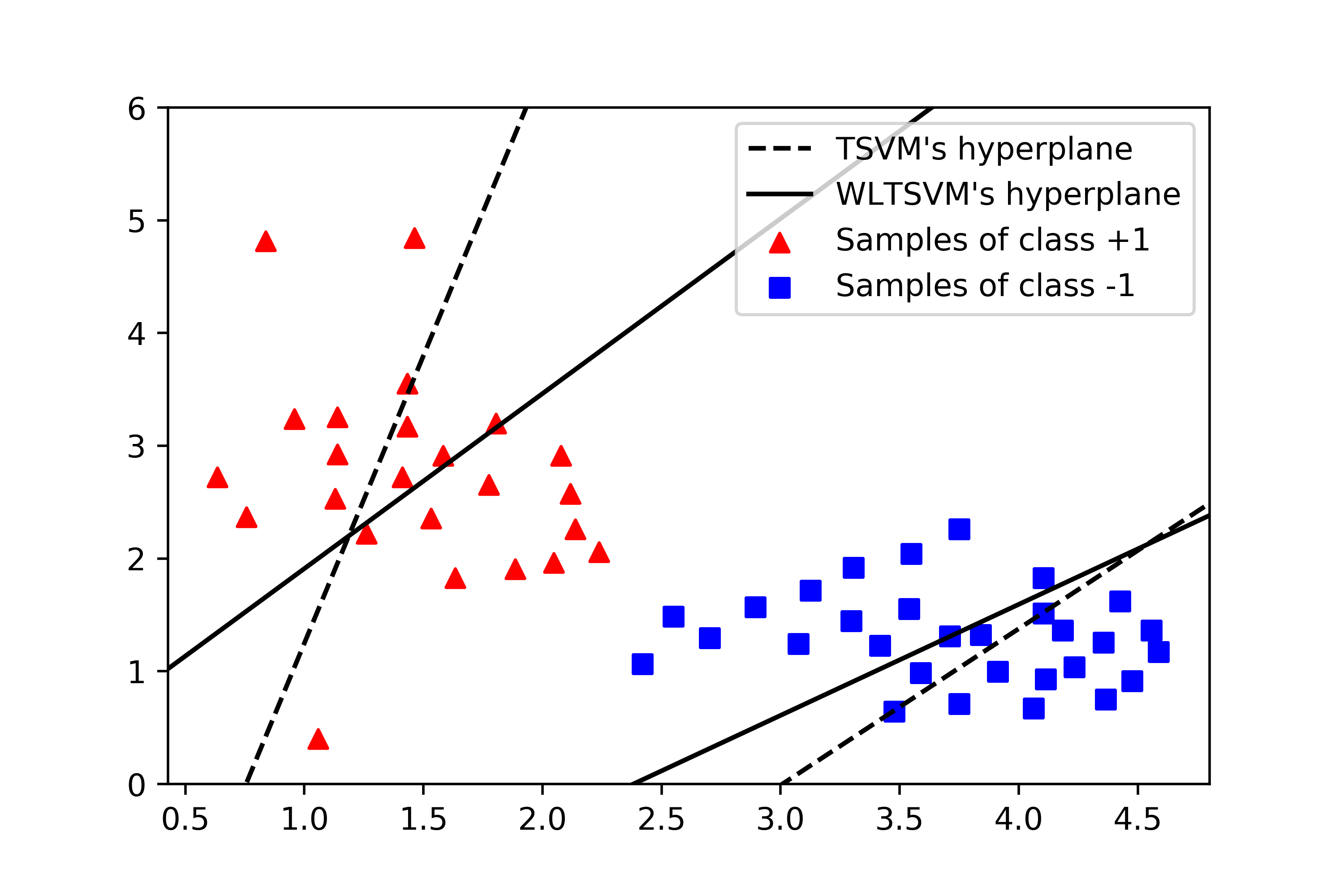

Among the extensions of the twin support vector machine (TSVM), some scholars have used a K-nearest neighbor (KNN) graph to improve TSVM's classification accuracy. However, these KNN-based TSVM classifiers have two major issues: high computational cost and overfitting. To address these issues, this paper presents an enhanced regularized K-nearest neighbor based twin support vector machine (RKNN-TSVM). It has three additional advantages: (1) Each sample is given a weight based on the distance from its nearest neighbors, which further reduces the effect of noise and outliers on the output model. (2) An extra stabilizer term is added to each objective function, so the learning rules of the proposed method are stable. (3) To reduce the computational cost of finding the KNNs of all samples, the location difference of multiple distances based k-nearest neighbors algorithm (LDMDBA) is embedded into the learning process of the proposed method. Extensive experimental results on several synthetic and benchmark datasets show the effectiveness of the proposed RKNN-TSVM in both classification accuracy and computational time. Moreover, the largest speedup achieved by the proposed method reaches 14 times.
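The idea of weighting each sample by the distance from its nearest neighbors can be illustrated with a minimal Python sketch. The abstract does not give the weighting formula, so the rule used here, `1 / (1 + mean distance to the k nearest neighbors)`, is only an assumption chosen for illustration, and the function name `knn_distance_weights` is hypothetical. The neighbor search is plain brute force, not LDMDBA.

```python
import math


def knn_distance_weights(points, k):
    """Return one weight per sample; isolated samples get smaller weights.

    Assumed weighting (not taken from the paper):
    1 / (1 + mean distance to the k nearest neighbors).
    Neighbors are found by brute-force search over all other samples.
    """
    if not 1 <= k < len(points):
        raise ValueError("k must satisfy 1 <= k < number of samples")
    weights = []
    for i, p in enumerate(points):
        # Distances from sample i to every other sample, sorted ascending.
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn = sum(dists[:k]) / k
        weights.append(1.0 / (1.0 + mean_knn))
    return weights


# Four clustered samples and one far-away outlier.
samples = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0), (10.0, 10.0)]
weights = knn_distance_weights(samples, k=2)
```

In this toy data the far-away point receives a much smaller weight than the four clustered points, which is the kind of noise and outlier damping the abstract describes. The brute-force search compares every pair of samples, which is the cost that motivates a faster KNN method.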

Related content

- Today (iOS and OS X): widgets for the Today view of Notification Center

- Share (iOS and OS X): post content to web services or share content with others

- Actions (iOS and OS X): app extensions to view or manipulate content inside another app

- Photo Editing (iOS): edit a photo or video in Apple's Photos app with extensions from third-party apps

- Finder Sync (OS X): remote file storage in the Finder with support for Finder content annotation

- Storage Provider (iOS): an interface between files inside an app and other apps on a user's device

- Custom Keyboard (iOS): system-wide alternative keyboards

Source: iOS 8 Extensions: Apple’s Plan for a Powerful App Ecosystem