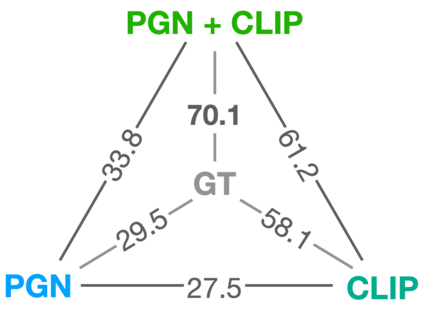

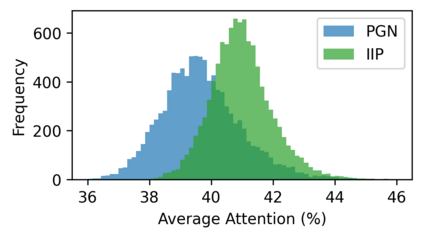

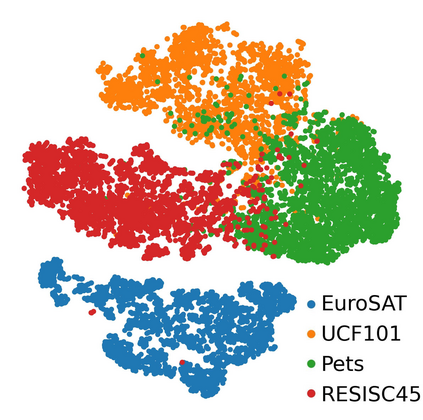

With the introduction of the transformer architecture in computer vision, increasing model scale has been demonstrated as a clear path to achieving performance and robustness gains. However, with model parameter counts reaching the billions, classical finetuning approaches are becoming increasingly limiting, and even infeasible once models are hosted as inference APIs, as in NLP. To this end, visual prompt learning, whereby a model is adapted by learning additional inputs, has emerged as a potential solution for adapting frozen and cloud-hosted models: during inference, it requires neither access to the internals of the model's forward pass nor any post-processing. In this work, we propose the Prompt Generation Network (PGN), which generates high-performing, input-dependent prompts by sampling from an end-to-end learned library of tokens. We further introduce the "prompt inversion" trick, with which PGNs can be efficiently trained in a latent space but deployed as strictly input-only prompts for inference. We show that the PGN is effective in adapting pre-trained models to various new datasets: it surpasses previous methods by a large margin on 12/12 datasets and even outperforms full finetuning on 5/12, while requiring 100x fewer parameters.
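The abstract does not spell out the architecture, so the following is only a minimal sketch of what "input-dependent prompts drawn from a learned token library" could look like. Everything in it is an assumption made for illustration: the names `generate_prompts`, `selector`, and `library` are hypothetical, the image is reduced to a small feature vector, and "sampling" is modeled as a softmax-weighted mixture of library tokens rather than whatever scheme the paper actually uses. Training and the prompt inversion step are not shown.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def generate_prompts(features, selector, library):
    """Build input-dependent prompts as mixtures of library tokens.

    features: list of F floats summarizing the input (assumed given).
    selector: K x L x F weights; a hypothetical linear layer that scores
              every library token for each of the K output prompts.
    library:  L x D learned token library.
    Returns K prompt vectors, each of dimension D.
    """
    dim = len(library[0])
    prompts = []
    for prompt_weights in selector:  # one weight matrix per output prompt
        # Score each library token against the input features.
        logits = [sum(w * f for w, f in zip(row, features))
                  for row in prompt_weights]
        probs = softmax(logits)
        # Soft selection: convex combination of the library tokens.
        prompt = [sum(p * token[d] for p, token in zip(probs, library))
                  for d in range(dim)]
        prompts.append(prompt)
    return prompts


# Toy sizes, chosen arbitrarily for the illustration.
random.seed(0)
F, L, D, K = 4, 8, 6, 3
library = [[random.gauss(0, 1) for _ in range(D)] for _ in range(L)]
selector = [[[random.gauss(0, 1) for _ in range(F)] for _ in range(L)]
            for _ in range(K)]
features = [random.gauss(0, 1) for _ in range(F)]

prompts = generate_prompts(features, selector, library)
print(len(prompts), len(prompts[0]))
```

In this sketch the frozen model is never touched: only `selector` and `library` would be learned, and the resulting prompts would be supplied to the model as additional inputs.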
