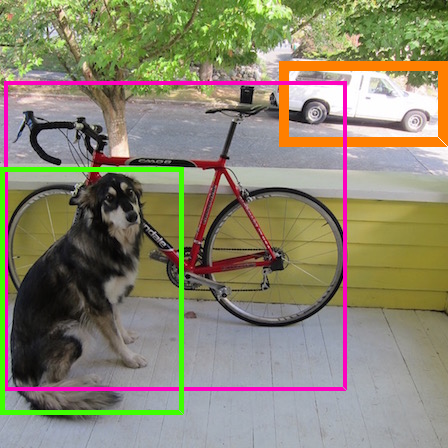

It is known that deep neural networks (DNNs) can be vulnerable to adversarial attacks. So-called physical adversarial examples deceive DNN-based decision makers by attaching adversarial patches to real objects. However, most existing work on physical adversarial attacks focuses on static objects such as eyeglass frames, stop signs, and images attached to cardboard. In this work, we propose the adversarial T-shirt, a robust physical adversarial example that evades person detectors even when it deforms as a moving person changes pose. To the best of our knowledge, this is the first work to model the effect of deformation when designing physical adversarial examples for non-rigid objects such as T-shirts. We show that the proposed method achieves attack success rates of 79% in the digital world and 63% in the physical world against YOLOv2. In contrast, the state-of-the-art physical attack method for fooling a person detector achieves only a 27% attack success rate. Furthermore, by leveraging min-max optimization, we extend our method to the ensemble attack setting, fooling the object detectors YOLOv2 and Faster R-CNN simultaneously.
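The abstract says the deformation of a non-rigid object (a T-shirt on a moving person) is modeled when designing the patch, but does not say how. One standard tool for smooth non-rigid warps is the thin plate spline (TPS), which maps a few control points exactly onto their displaced positions and interpolates smoothly in between. The sketch below assumes such a TPS model; the control points, the function names (`fit_tps`, `apply_tps`, `warp_image`), and the toy displacement are all hypothetical illustrations, not the paper's implementation.

```python
import numpy as np


def tps_kernel(r2: np.ndarray) -> np.ndarray:
    """Radial basis U = r^2 log(r^2), evaluated on squared distances, with U(0) = 0."""
    out = np.zeros_like(r2, dtype=float)
    mask = r2 > 0
    out[mask] = r2[mask] * np.log(r2[mask])
    return out


def fit_tps(src: np.ndarray, dst: np.ndarray) -> np.ndarray:
    """Solve for TPS parameters mapping control points src (n, 2) onto dst (n, 2).

    Returns an (n + 3, 2) array: n radial weights followed by 3 affine coefficients.
    """
    n = src.shape[0]
    d2 = np.sum((src[:, None, :] - src[None, :, :]) ** 2, axis=-1)
    P = np.hstack([np.ones((n, 1)), src])  # affine basis [1, x, y]
    A = np.zeros((n + 3, n + 3))
    A[:n, :n] = tps_kernel(d2)
    A[:n, n:] = P
    A[n:, :n] = P.T  # side conditions: radial weights orthogonal to the affine terms
    b = np.zeros((n + 3, 2))
    b[:n] = dst
    return np.linalg.solve(A, b)


def apply_tps(params: np.ndarray, src: np.ndarray, pts: np.ndarray) -> np.ndarray:
    """Map points pts (m, 2) through the TPS defined by params and control points src."""
    n = src.shape[0]
    d2 = np.sum((pts[:, None, :] - src[None, :, :]) ** 2, axis=-1)
    P = np.hstack([np.ones((pts.shape[0], 1)), pts])
    return tps_kernel(d2) @ params[:n] + P @ params[n:]


def warp_image(img: np.ndarray, src: np.ndarray, dst: np.ndarray) -> np.ndarray:
    """Warp img so content at control points src moves to dst (nearest-neighbour backward mapping)."""
    h, w = img.shape[:2]
    inv = fit_tps(dst, src)  # maps output (x, y) back to input (x, y)
    ys, xs = np.mgrid[0:h, 0:w]
    grid = np.stack([xs.ravel(), ys.ravel()], axis=1).astype(float)
    mapped = apply_tps(inv, dst, grid)
    mx = np.clip(np.rint(mapped[:, 0]), 0, w - 1).astype(int)
    my = np.clip(np.rint(mapped[:, 1]), 0, h - 1).astype(int)
    return img[my, mx].reshape(img.shape)


# Illustrative use: a 32x32 grayscale "patch" whose centre control point is pushed
# 2 px right and 1 px up, as a crude stand-in for fabric deformation.
src = np.array([[0, 0], [31, 0], [0, 31], [31, 31], [15.5, 15.5]], dtype=float)
dst = src.copy()
dst[4] += [2.0, -1.0]
patch = np.random.default_rng(0).random((32, 32))
warped = warp_image(patch, src, dst)
```

Nearest-neighbour sampling keeps the sketch short but is not differentiable; an attack that back-propagates through the warp would instead need bilinear sampling inside an autodiff framework.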
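The 79%, 63%, and 27% figures above are attack success rates (ASR). Assuming ASR is the fraction of test frames in which the detector returns no person box at or above a confidence threshold (the threshold value and the scores below are hypothetical), the metric reduces to a simple count:

```python
from typing import Sequence


def attack_success_rate(frames: Sequence[Sequence[float]], threshold: float = 0.5) -> float:
    """Fraction of frames with no 'person' detection at or above `threshold`.

    `frames[i]` holds the confidence scores of every person box the detector
    returned for frame i; an empty list means nothing was detected (an evasion).
    The default threshold of 0.5 is an illustrative assumption.
    """
    if len(frames) == 0:
        raise ValueError("at least one frame is required")
    evaded = sum(1 for scores in frames if all(s < threshold for s in scores))
    return evaded / len(frames)


# Hypothetical scores for four frames: detected, evaded, evaded (no boxes), detected.
example = [[0.91], [0.32, 0.18], [], [0.64, 0.05]]
print(attack_success_rate(example))  # 0.5
```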
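For the ensemble extension, a generic min-max formulation (assumed here, not quoted from the paper) chooses the patch δ to minimize the worst weighted combination of the K detectors' losses f_i: min over δ of max over w in the probability simplex of Σ_i w_i f_i(δ) − (γ/2)‖w − 1/K‖², where γ keeps w from collapsing onto a single detector. The sketch solves it by alternating gradient descent on δ and projected gradient ascent on w. The two quadratic losses are hypothetical stand-ins for YOLOv2 and Faster R-CNN; real losses would come from the detectors' outputs on the patched images.

```python
import numpy as np


def project_simplex(v: np.ndarray) -> np.ndarray:
    """Euclidean projection of v onto the probability simplex {w : w >= 0, sum(w) = 1}."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u)
    k = np.arange(1, len(v) + 1)
    cond = u - (css - 1.0) / k > 0
    rho = k[cond][-1]
    theta = (css[cond][-1] - 1.0) / rho
    return np.maximum(v - theta, 0.0)


def minmax_ensemble(losses, grads, delta0, gamma=1.0, lr_delta=0.05, lr_w=0.1, steps=500):
    """Alternating descent (delta) / projected ascent (w) for
    min_delta max_{w in simplex} sum_i w_i f_i(delta) - gamma/2 * ||w - 1/K||^2.
    """
    k = len(losses)
    w = np.full(k, 1.0 / k)
    delta = np.asarray(delta0, dtype=float).copy()
    for _ in range(steps):
        # Descent on the patch: gradient of the w-weighted ensemble loss.
        g = sum(w[i] * grads[i](delta) for i in range(k))
        delta = delta - lr_delta * g
        # Ascent on the weights: up-weight detectors that are currently hard to fool,
        # while the gamma term pulls w back towards uniform.
        f = np.array([loss(delta) for loss in losses])
        w = project_simplex(w + lr_w * (f - gamma * (w - 1.0 / k)))
    return delta, w


# Toy stand-ins for two detectors' losses (hypothetical): f_i(delta) = ||delta - c_i||^2.
c1, c2 = np.array([1.0, 0.0]), np.array([-1.0, 0.0])
losses = [lambda d: float(np.sum((d - c1) ** 2)), lambda d: float(np.sum((d - c2) ** 2))]
grads = [lambda d: 2.0 * (d - c1), lambda d: 2.0 * (d - c2)]

delta, w = minmax_ensemble(losses, grads, delta0=[2.0, 1.0])
print(delta, w)
```

For this symmetric toy problem the saddle point is δ = (0, 0) with equal weights (0.5, 0.5), and the loop converges to it: early on, w shifts entirely to the detector that is harder to fool, then both variables spiral into the compromise. With real detectors, the learned w would indicate which model currently resists the patch more.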
