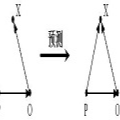

Inductive knowledge graph completion requires models to comprehend the underlying semantics and logic patterns of relations. With the advance of pretrained language models, recent research has designed transformers for link prediction tasks. However, empirical studies show that linearizing triples affects the learning of relational patterns, such as inversion and symmetry. In this paper, we propose Bi-Link, a contrastive learning framework with probabilistic syntax prompts for link prediction. Using the grammatical knowledge of BERT, we efficiently search for relational prompts according to learnt syntactical patterns that generalize to large knowledge graphs. To better express symmetric relations, we design a symmetric link prediction model, establishing bidirectional linking between forward prediction and backward prediction. This bidirectional linking accommodates flexible self-ensemble strategies at test time. In our experiments, Bi-Link outperforms recent baselines on link prediction datasets (WN18RR, FB15K-237, and Wikidata5M). Furthermore, we construct Zeshel-Ind as an in-domain inductive entity linking environment to evaluate Bi-Link. The experimental results demonstrate that our method yields robust representations which can generalize under domain shift.
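The bidirectional linking and test-time self-ensemble described above can be illustrated with a minimal sketch. The abstract does not specify the scoring function or the ensemble rule, so everything here is an assumption made for illustration: cosine similarity between precomputed embeddings as the score, a weighted average with a hypothetical weight `alpha` as the ensemble, and the function names `cosine`, `self_ensemble`, and `rank_candidates`.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def self_ensemble(forward_scores, backward_scores, alpha=0.5):
    """Blend forward and backward scores (assumed rule: weighted average)."""
    return [alpha * f + (1 - alpha) * b
            for f, b in zip(forward_scores, backward_scores)]


def rank_candidates(hr_vec, tail_vecs, head_vec, tr_inv_vecs, alpha=0.5):
    """Rank candidate tails for a query (head, relation, ?).

    hr_vec      -- embedding of the forward query (head, relation)
    tail_vecs   -- one embedding per candidate tail entity
    head_vec    -- embedding of the head entity alone
    tr_inv_vecs -- one embedding per backward query (candidate tail,
                   inverse relation), aligned with tail_vecs
    """
    # Forward prediction: does (head, relation) point at this tail?
    forward = [cosine(hr_vec, t) for t in tail_vecs]
    # Backward prediction: does (tail, inverse relation) point back at the head?
    backward = [cosine(q, head_vec) for q in tr_inv_vecs]
    scores = self_ensemble(forward, backward, alpha)
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order, scores
```

Setting `alpha=1.0` in this sketch falls back to forward prediction only and `alpha=0.0` to backward prediction only, which is one simple way to read "flexible self-ensemble strategies"; the actual strategies used by Bi-Link may differ.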
