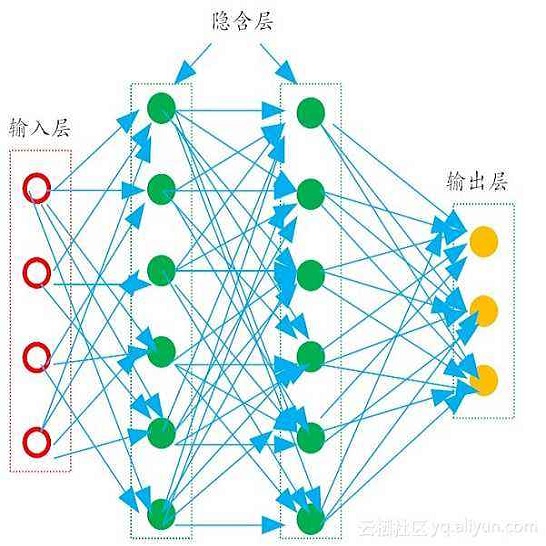

The Backpropagation algorithm relies on the abstraction of a neural model that dispenses with the notion of time, since the input is mapped instantaneously to the output. In this paper, we claim that this abstraction of ignoring time, together with the abrupt input changes that occur when feeding the training set, is in fact the reason why, in some papers, the biological plausibility of Backprop is regarded as an arguable issue. We show that as soon as a deep feedforward network operates with neurons with time-delayed response, the backprop weight update turns out to be the basic equation of a biologically plausible diffusion process based on forward-backward waves. We also show that such a process approximates the gradient very well for inputs that do not change too fast with respect to the depth of the network. These remarks disclose, to some extent, the diffusion process behind the backprop equation and lead us to interpret the corresponding algorithm as a degeneration of a more general diffusion process that also takes place in neural networks with cyclic connections.
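
The claim about slowly varying inputs can be illustrated with a toy sketch. It is not the formulation used in the paper. It assumes a chain of scalar `tanh` neurons, a squared-error loss, and a delay of exactly one tick per layer in both directions. The names `backprop_grads`, `delayed_grads`, and `gradient_gap`, along with the weights and input signal, are hypothetical choices made for illustration.

```python
import math


def backprop_grads(weights, u, y):
    """Standard (instantaneous) backprop for a chain of scalar tanh neurons.

    Loss is 0.5 * (output - y)**2; returns d(loss)/d(weight) for each layer.
    """
    xs = [u]
    for w in weights:
        xs.append(math.tanh(w * xs[-1]))
    # delta is d(loss)/d(pre-activation) of the layer currently being visited
    delta = (xs[-1] - y) * (1.0 - xs[-1] ** 2)
    grads = [0.0] * len(weights)
    for l in reversed(range(len(weights))):
        grads[l] = delta * xs[l]
        if l > 0:
            delta = weights[l] * delta * (1.0 - xs[l] ** 2)
    return grads


def delayed_grads(weights, inputs, y):
    """Same chain, but every layer reacts with a one-tick delay (an assumption).

    The forward wave moves activations up one layer per tick and the backward
    wave moves deltas down one layer per tick. Returns the local weight updates
    delta * input available at the last tick.
    """
    depth = len(weights)
    xs = [0.0] * (depth + 1)   # xs[l] is the output of layer l (xs[0] is the input)
    deltas = [0.0] * depth     # deltas[l] belongs to the neuron producing xs[l + 1]
    for u in inputs:
        # forward wave: each neuron sees its predecessor's value from the previous tick
        new_xs = [u] + [math.tanh(w * x) for w, x in zip(weights, xs[:-1])]
        # backward wave: each neuron sees its successor's delta from the previous tick
        new_deltas = [0.0] * depth
        new_deltas[depth - 1] = (new_xs[depth] - y) * (1.0 - new_xs[depth] ** 2)
        for l in range(depth - 1):
            new_deltas[l] = weights[l + 1] * deltas[l + 1] * (1.0 - new_xs[l + 1] ** 2)
        xs, deltas = new_xs, new_deltas
    return [d * x for d, x in zip(deltas, xs[:-1])]


def gradient_gap(weights, omega, steps=200, y=0.5):
    """Largest absolute difference between the delayed update and the true
    gradient at the final tick, for the input signal cos(omega * t + 1)."""
    inputs = [math.cos(omega * t + 1.0) for t in range(steps)]
    delayed = delayed_grads(weights, inputs, y)
    exact = backprop_grads(weights, inputs[-1], y)
    return max(abs(a - b) for a, b in zip(delayed, exact))


if __name__ == "__main__":
    w = [0.9, -1.2, 0.7, 1.1]  # hypothetical weights for a depth-4 chain
    print("slow input gap:", gradient_gap(w, omega=1e-5))
    print("fast input gap:", gradient_gap(w, omega=1.0))
```

In this sketch a constant input reproduces the backprop gradient once both waves have crossed the chain, which takes twice the depth in ticks. When the input changes noticeably within that window, the activations and deltas meeting at each weight come from different moments in time, and the update drifts away from the gradient.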
