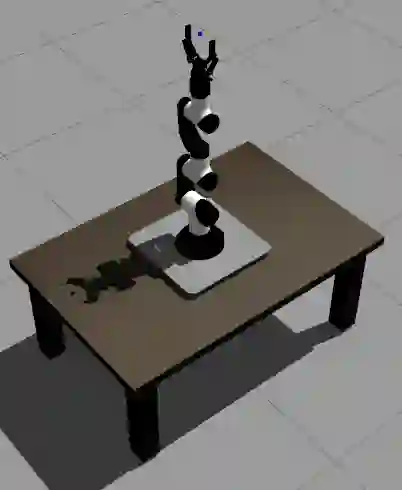

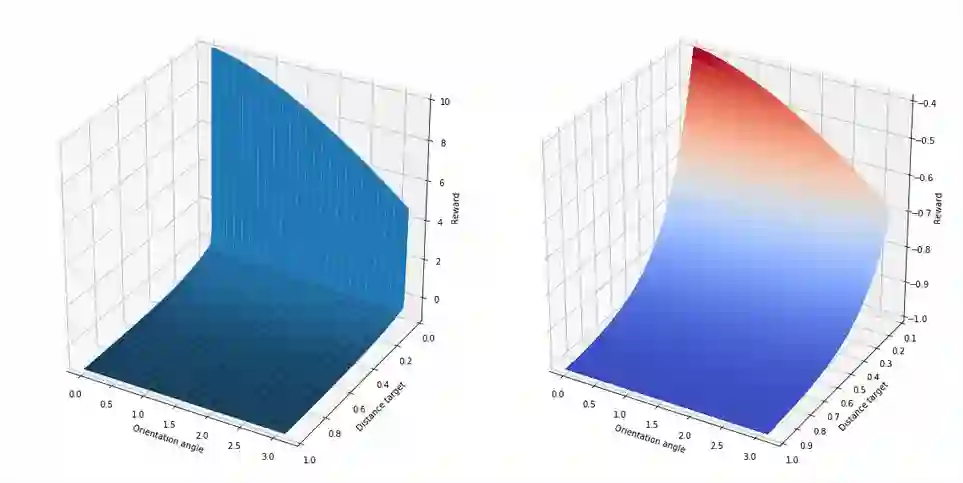

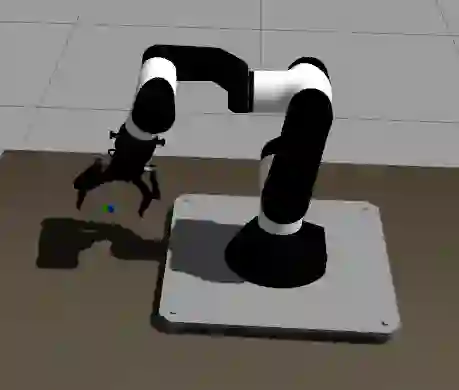

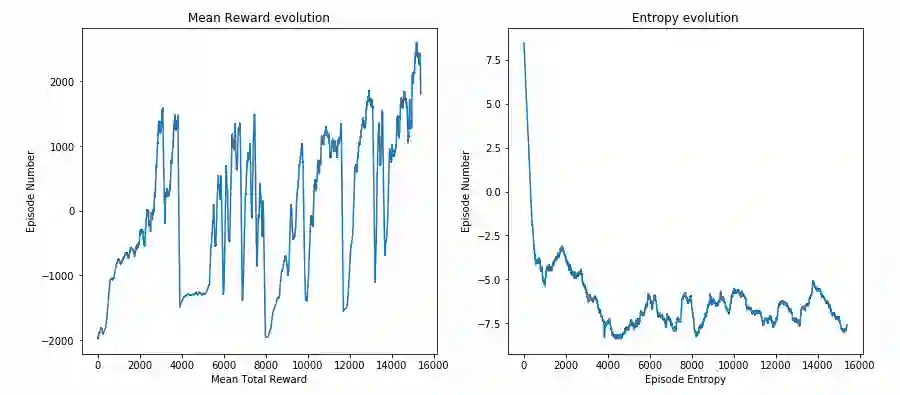

This paper presents an upgraded, real-world, application-oriented version of gym-gazebo, the Robot Operating System (ROS) and Gazebo-based Reinforcement Learning (RL) toolkit, which complies with OpenAI Gym. We discuss the new ROS 2-based software architecture and summarize the results obtained using Proximal Policy Optimization (PPO). The outcome of this work is a benchmarking system for robotics that allows different techniques and algorithms to be compared under the same virtual conditions. We evaluated environments of varying complexity built around the Modular Articulated Robotic Arm (MARA), reaching millimeter-scale accuracy. The converged results show the feasibility and usefulness of the gym-gazebo 2 toolkit, as well as its potential applicability to industrial use cases involving modular robots.
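Because the toolkit complies with OpenAI Gym, an agent interacts with each environment only through `reset()` and `step()`. The sketch below is a hypothetical, dependency-free toy that mimics the classic Gym convention, in which `step()` returns `(observation, reward, done, info)`. It does not use ROS 2 or Gazebo: `ToyReachEnv`, `rollout`, the 1 mm success tolerance, the negative-distance reward and the per-step action clipping are illustrative assumptions, not the toolkit's actual MARA environments or reward. A simple proportional controller stands in for a trained PPO policy.

```python
import math
import random
from typing import Dict, List, Tuple


class ToyReachEnv:
    """Hypothetical stand-in for a reaching task (no ROS 2 or Gazebo).

    The end effector is a 3-D point moved directly by the action; a real
    MARA environment would instead command the joints of a simulated arm.
    """

    def __init__(self, target: List[float], tolerance_m: float = 0.001,
                 max_steps: int = 200, seed: int = 0) -> None:
        self.target = list(target)
        self.tolerance_m = tolerance_m  # assumed 1 mm success threshold
        self.max_steps = max_steps
        self.rng = random.Random(seed)
        self.position = [0.0, 0.0, 0.0]
        self.steps = 0

    def _observation(self) -> List[float]:
        # Observation: displacement from the end effector to the target.
        return [t - p for p, t in zip(self.position, self.target)]

    def reset(self) -> List[float]:
        # Start at a random point within 0.1 m of the origin on each axis.
        self.position = [self.rng.uniform(-0.1, 0.1) for _ in range(3)]
        self.steps = 0
        return self._observation()

    def step(self, action: List[float]) -> Tuple[List[float], float, bool, Dict]:
        # Apply the action as a displacement, clipped to 1 cm per axis per step.
        self.position = [p + max(-0.01, min(0.01, a))
                         for p, a in zip(self.position, action)]
        self.steps += 1
        dist = math.dist(self.position, self.target)
        reward = -dist  # dense reward: negative Euclidean distance in meters
        reached = dist < self.tolerance_m
        done = reached or self.steps >= self.max_steps
        return self._observation(), reward, done, {"distance_m": dist,
                                                    "reached": reached}


def rollout(env: ToyReachEnv, gain: float = 0.5) -> Dict:
    """Run one episode with a proportional controller in place of a policy."""
    obs = env.reset()
    done = False
    info: Dict = {}
    while not done:
        action = [gain * d for d in obs]
        obs, _, done, info = env.step(action)
    return info
```

Swapping the proportional controller for a learned policy leaves the loop in `rollout` unchanged, which is what makes a Gym-compliant interface useful for comparing algorithms under identical simulated conditions.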

翻译:本文介绍了一个升级的、真实世界应用型健身房健身房、机器人操作系统(ROS)和基于Gazebo的强化学习(RL)工具包,该工具包符合OpenAI Gym。内容讨论了基于ROS 2的新软件结构,并总结了使用Proximal政策优化(PPO)获得的结果。最终,这项工作的产出为机器人提供了一个基准系统,允许使用同样的虚拟条件比较不同的技术和算法。我们评估了不同复杂程度的机动管控机器人(MARA)环境,达到了毫米尺寸的精密度。聚合结果显示健身房2工具包的可行性和有用性,及其在使用模块机器人的工业应用案例中的潜力和适用性。